Kai Yang

@YangK_ai

AI | Entrepreneur | Lifelong Learner | Astrophotographer | @Scale_AI | Ex-@LandingAI — Opinions are my own

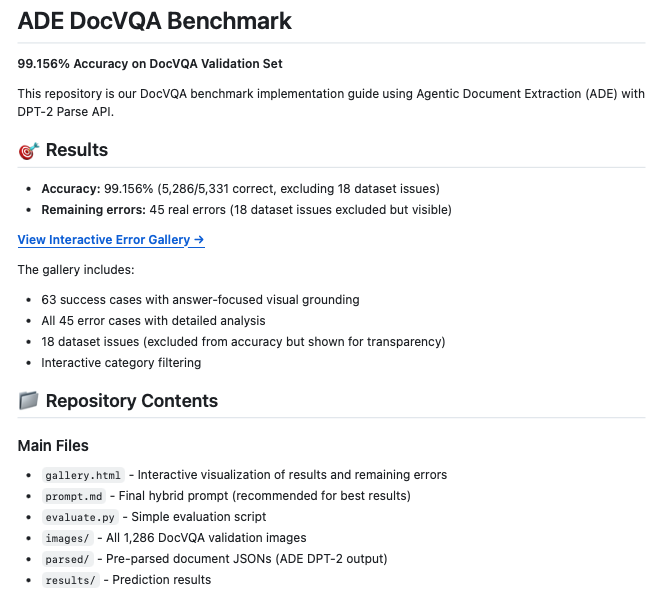

Welcome to the home of all things @scale_AI research — focused on data, evaluation, safety, and post-training that moves frontier models forward. We’ll share benchmarks, insights, and work intended to be useful to the broader research community. labs.scale.com/?utm_source=hu…

AI agents are getting put to work on real tasks. Training them to do that well is harder than it looks. For the past year at @scale_AI, we've been building environments where agents can practice real workflows - simulated worlds that mirror real software, real processes, and real decisions. They can fail, try again, and get better before they touch production. This process is how these agents eventually become reliable for the world’s most important decisions. Today we're officially launching Scale RL Environments. Nearly half of our new training projects already run through them. We're building more this year. See what your agents can learn: scale.com/blog/rl-enviro…

Speech isn’t just text read out loud. 💬 Real conversations are dynamic, full of interruptions, and context-rich — and benchmarks should match. Introducing Audio MultiChallenge (Audio MC), the first benchmark built to test how well native Speech-to-Speech models handle real conversations.

Today we’re taking a big step on the path toward AGI and releasing Gemini 3, our most intelligent model yet. With Gemini 3, you can bring any idea to life. It is state-of-the-art in reasoning, the best model in the world for multimodal understanding, and our best agentic and vibe coding model.

Below is an additional reading list if you want to read more about robot foundation models:

Additional Reading List

- Brohan, et al., 2022, [RT-1: Robotics Transformer for Real-World Control at Scale](arxiv.org/abs/2212.06817)
- Brohan, et al., 2023, [RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control](arxiv.org/abs/2307.15818)
- Open X-Embodiment Collaboration, 2023, [Open X-Embodiment: Robotic Learning Datasets and RT-X Models](arxiv.org/abs/2310.08864)
- Chi, et al., 2023, [Diffusion Policy: Visuomotor Policy Learning via Action Diffusion](arxiv.org/abs/2303.04137)
- Liu, et al., 2024, [RDT-1B: a Diffusion Foundation Model for Bimanual Manipulation](arxiv.org/pdf/2410.07864)
- Etukuru, et al., 2024, [Robot Utility Models: General Policies for Zero-Shot Deployment in New Environments](arxiv.org/abs/2409.05865)
- Kim, et al., 2024, [OpenVLA: An Open-Source Vision-Language-Action Model](arxiv.org/abs/2406.09246)
- Cheang, et al., 2024, [GR-2: A Generative Video-Language-Action Model with Web-Scale Knowledge for Robot Manipulation](arxiv.org/abs/2410.06158)
- Octo Model Team, 2024, [Octo: An Open-Source Generalist Robot Policy](arxiv.org/abs/2405.12213)
- Fang, et al., 2025, [Robix: A Unified Model for Robot Interaction, Reasoning and Planning](arxiv.org/abs/2509.01106)
- NVIDIA, 2025, [GR00T N1: An Open Foundation Model for Generalist Humanoid Robots](arxiv.org/abs/2503.14734)
- Yang, et al., 2025, [FP3: A 3D Foundation Policy for Robotic Manipulation](arxiv.org/pdf/2503.08950)
- Lee, et al., 2025, [MolmoAct: Action Reasoning Models that can Reason in Space](arxiv.org/abs/2508.07917)

Additional Resources

- [Short Blog on VLAs](itcanthink.substack.com/p/vision-langu…) by Chris Paxton
- [U of T Robotics Institute Seminar on Robotics Foundation Models](youtube.com/watch?v=EYLdC3…) by Sergey Levine