Yilong Chen

28 posts

@Yichen4NLP

PhD Student at UCAS. Research on efficiency and generalization in LLM architectures. Still a lot to explore in model architecture.

(2/7) 💵 With training costs exceeding $100M for GPT-4, efficient alternatives matter. We show that diffusion LMs unlock a new paradigm for compute-optimal language pre-training.

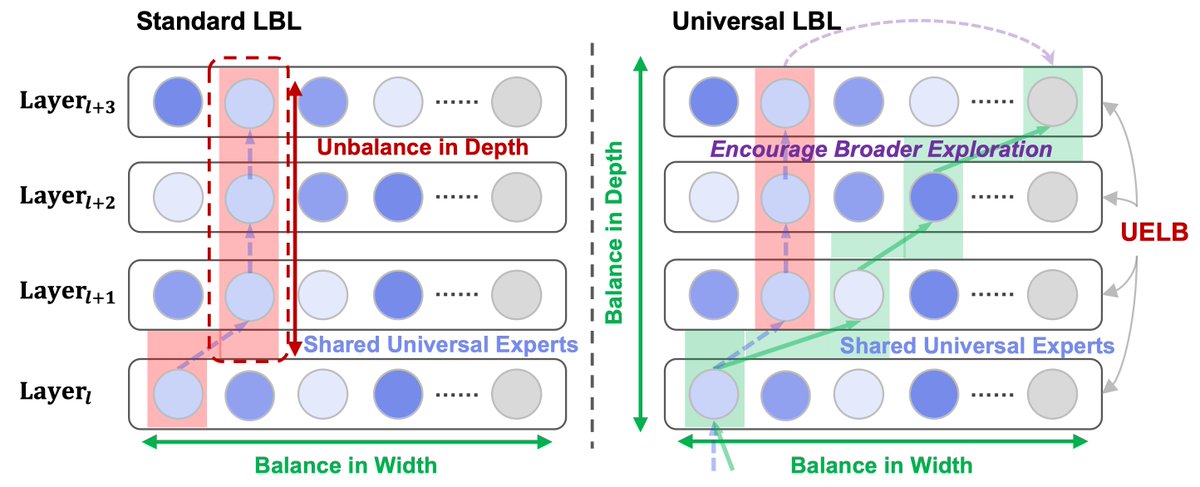

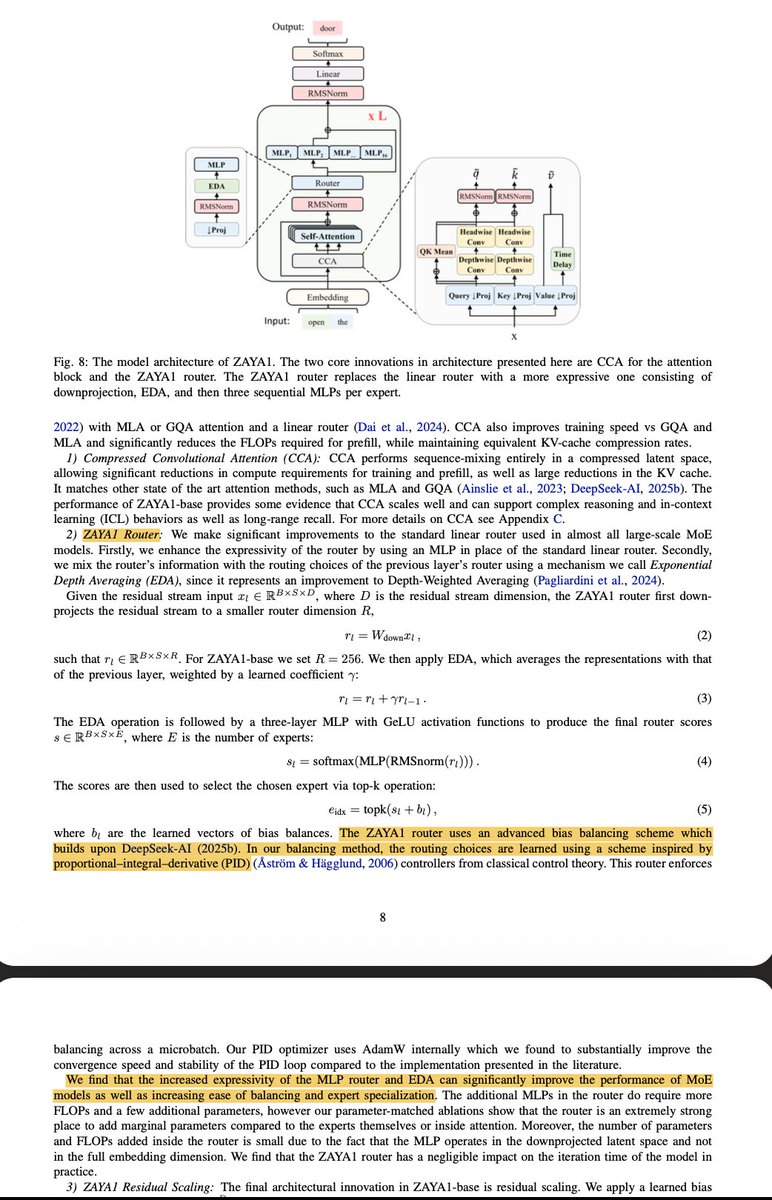

So we changed both the architecture and the objective. MoUE uses:

- a Staggered Rotational Topology to localize search,
- UELB to balance experts by exposure, not raw count,
- a lightweight Universal Router for coherent multi-step routing.
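To make "localize search" and "balance by exposure, not raw count" a bit more concrete, here is a toy sketch. It is only one possible reading of the post, not the paper's definitions: the rotating window, the `stride` parameter, and the per-exposure normalization are all assumptions, and every function name is hypothetical.

```python
from typing import List


def staggered_windows(num_experts: int, num_steps: int,
                      window: int, stride: int) -> List[List[int]]:
    """Assumed topology: at each recursion step the router may only pick
    from a window of experts, and the window rotates by `stride` per step."""
    return [
        [(step * stride + j) % num_experts for j in range(window)]
        for step in range(num_steps)
    ]


def exposure(windows: List[List[int]], num_experts: int) -> List[int]:
    """Count in how many steps each expert is a routing candidate."""
    counts = [0] * num_experts
    for candidates in windows:
        for expert_id in candidates:
            counts[expert_id] += 1
    return counts


def load_per_exposure(token_counts: List[int],
                      exposure_counts: List[int]) -> List[float]:
    """Assumed balancing signal: tokens received per unit of exposure.
    Experts that were never candidates get 0.0."""
    return [
        tokens / seen if seen else 0.0
        for tokens, seen in zip(token_counts, exposure_counts)
    ]


# Hypothetical setup: 8 experts, 3 recursion steps, window of 4, stride 1.
windows = staggered_windows(num_experts=8, num_steps=3, window=4, stride=1)
exposure_counts = exposure(windows, num_experts=8)

# Made-up token counts that look unbalanced when compared directly.
token_counts = [10, 20, 30, 30, 20, 10, 0, 0]
normalized = load_per_exposure(token_counts, exposure_counts)
```

In this made-up example the raw counts differ by a factor of three across the reachable experts, yet every reachable expert receives the same number of tokens per step in which it was a candidate. That is the kind of gap a raw-count balancing loss would penalize and an exposure-aware one would not.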

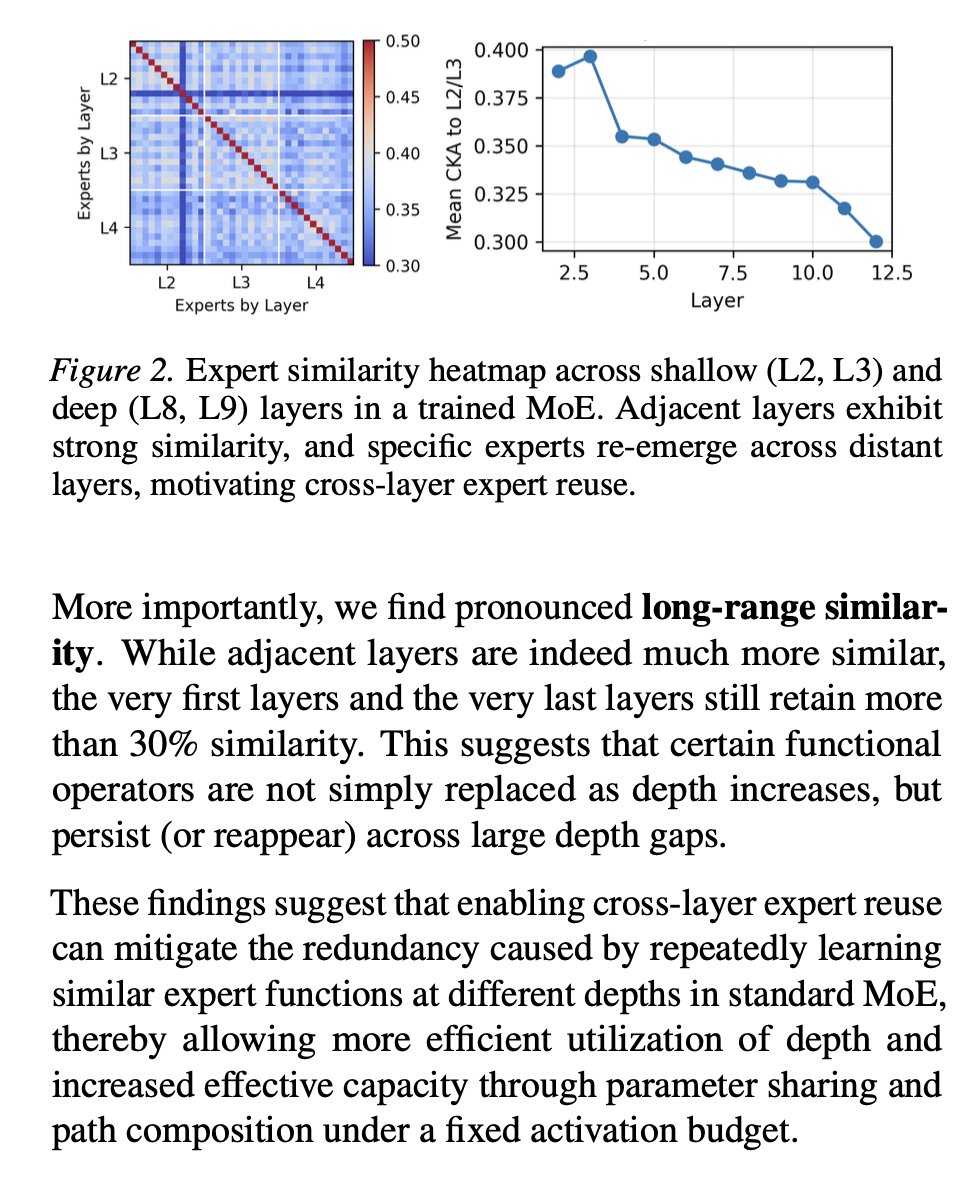

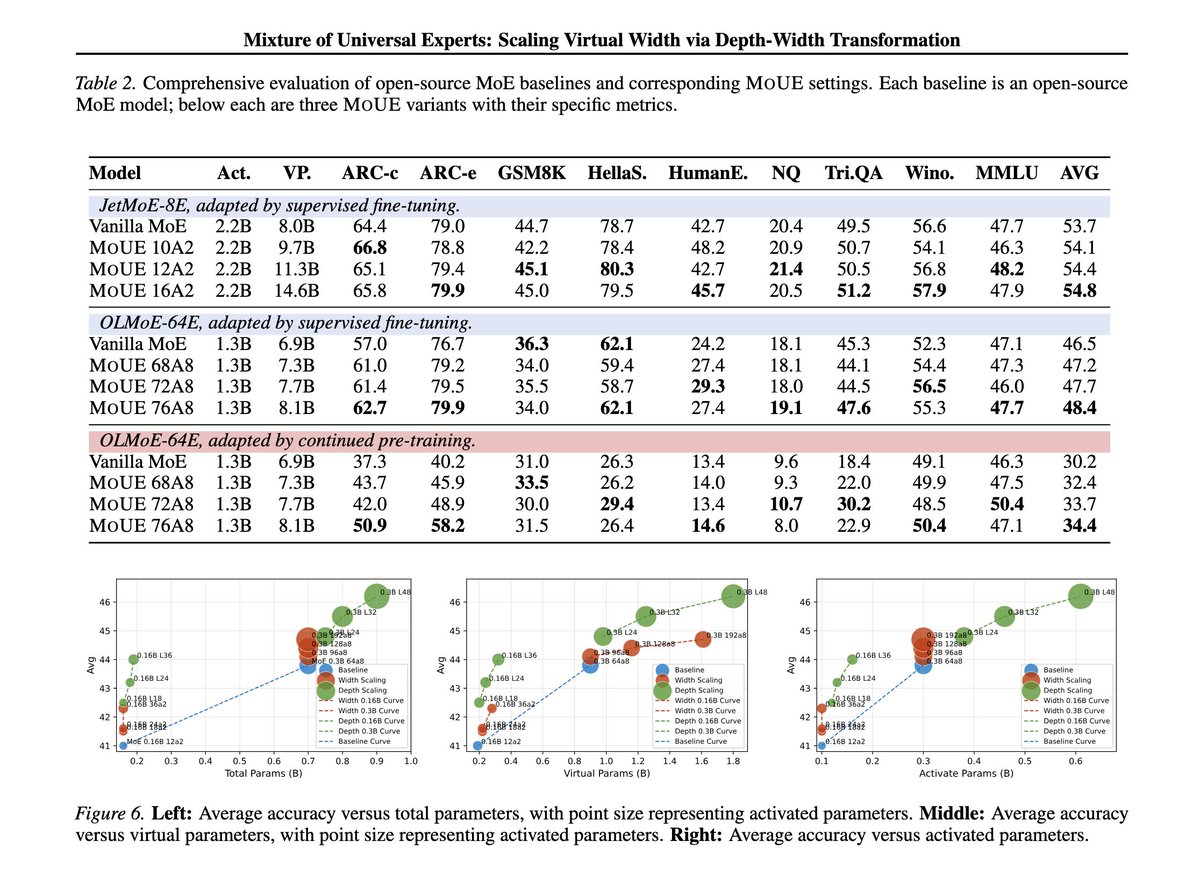

We introduce MoUE, a new MoE paradigm that boosts base-model performance by up to 1.3 points from scratch and up to 4.2 points on average, without increasing either activated or total parameters. The main idea is simple: a sufficiently wide MoE layer with recursive reuse can be treated as a strict generalization of standard MoE. arxiv.org/abs/2603.04971 huggingface.co/papers/2603.04… #MoE #LLM #MixtureOfExperts #SparseModels #ScalingLaws #Modularity #UniversalTransformers #RecursiveComputation #ContinualPretraining
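The "strict generalization" claim can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the MoUE implementation: experts are scalar affine functions, the router is a hypothetical nearest-key top-1 rule, and all names are invented for illustration. It shows that one wide expert pool applied recursively reproduces a standard MoE stack when each step is masked to that layer's own experts, and that dropping the mask opens up routing paths the stack cannot express.

```python
from typing import Callable, List, Optional, Sequence

Expert = Callable[[float], float]


def make_expert(scale: float, shift: float) -> Expert:
    """Toy expert: a scalar affine map standing in for an FFN."""
    return lambda x: scale * x + shift


def route(x: float, keys: Sequence[float], allowed: Sequence[int]) -> int:
    """Toy top-1 router: pick the allowed expert whose key is closest to x."""
    return min(allowed, key=lambda i: abs(x - keys[i]))


def standard_moe_stack(x: float, layers: List[List[Expert]],
                       layer_keys: List[List[float]]) -> float:
    """Standard MoE: each layer owns its experts and routes among them."""
    for experts, keys in zip(layers, layer_keys):
        x = experts[route(x, keys, range(len(experts)))](x)
    return x


def recursive_shared_pool(x: float, pool: List[Expert], pool_keys: List[float],
                          steps: int,
                          allowed_per_step: Optional[List[List[int]]] = None
                          ) -> float:
    """One wide pool reused for `steps` passes. With no mask, every step
    may route to any expert in the pool."""
    for step in range(steps):
        allowed = (allowed_per_step[step] if allowed_per_step is not None
                   else range(len(pool)))
        x = pool[route(x, pool_keys, allowed)](x)
    return x


# Two layers of two experts each (arbitrary illustrative values).
layer0 = [make_expert(2.0, 0.0), make_expert(1.0, 1.0)]
layer1 = [make_expert(0.5, 0.0), make_expert(1.0, -1.0)]
keys0, keys1 = [0.0, 5.0], [0.0, 10.0]

# The same four experts as a single wide pool.
pool, pool_keys = layer0 + layer1, keys0 + keys1
masks = [[0, 1], [2, 3]]  # step s may only use layer s's experts

inputs = [-1.0, 1.0, 3.0, 6.0]
stack_out = [standard_moe_stack(x, [layer0, layer1], [keys0, keys1])
             for x in inputs]
masked_out = [recursive_shared_pool(x, pool, pool_keys, 2, masks)
              for x in inputs]
free_out = [recursive_shared_pool(x, pool, pool_keys, 2) for x in inputs]

assert stack_out == masked_out  # the masked pool recovers the standard stack
```

The parameter count is identical in both views, since the pool is just the layers' experts concatenated. Only the set of reachable routing paths changes once the mask is relaxed.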