Yining Lu

@Yining__Lu

Second year CS PhD student @NotreDame | Prev: @amazon @JHUCLSP 🦋: https://t.co/gPXvdPuesy

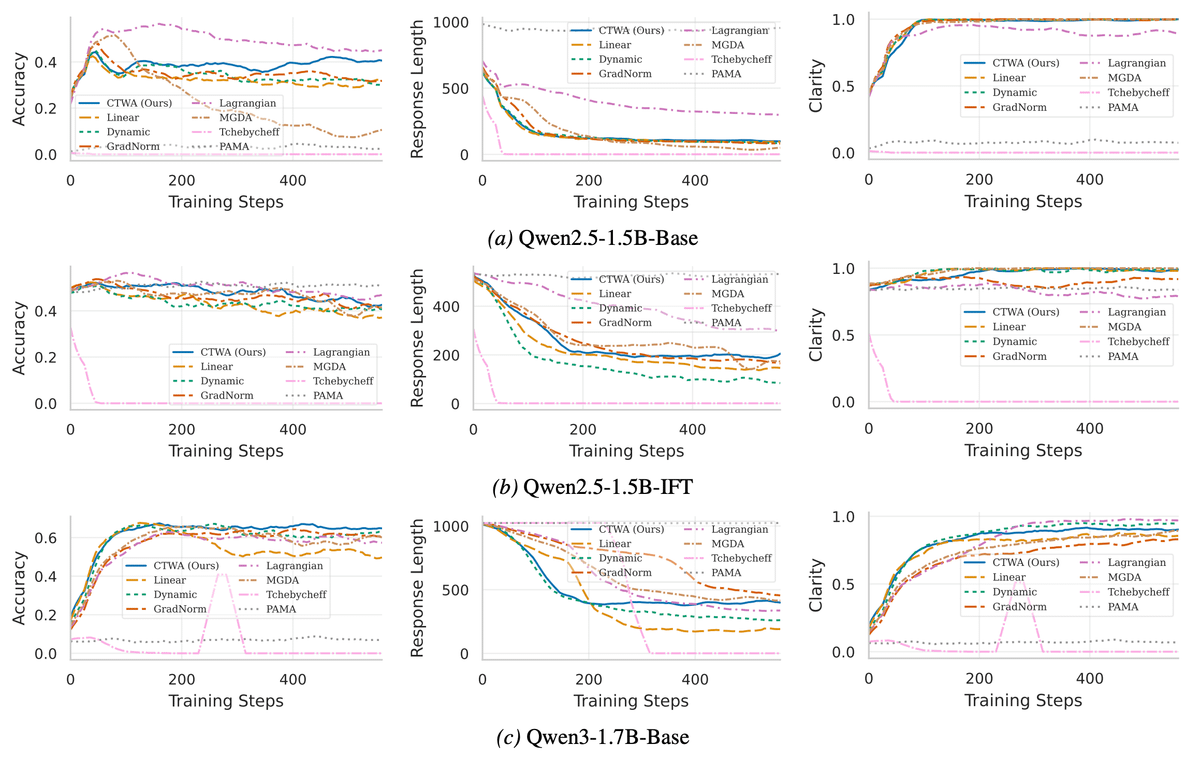

RLVR is powerful — but how do you train with multiple rewards effectively? 🤔 🎯GDPO (not GRPO) is coming. We introduce Group reward-Decoupled Normalization Policy Optimization (GDPO), a new multi-reward RL algorithm that consistently improves per-reward convergence over GRPO across a wide range of tasks. (1/n)
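To make the "decoupled normalization" idea concrete, here is a rough sketch based only on the algorithm's name; the post itself does not spell out the update rule, so the details are assumptions. It contrasts a GRPO-style advantage (sum the rewards for each rollout, then normalize across the group) with normalizing each reward across the group first and summing afterwards. The function names, the two-reward setup, and the toy numbers are all hypothetical.

```python
from statistics import mean, pstdev


def normalize(values, eps=1e-8):
    """Zero-mean, unit-variance normalization of one list of group values."""
    mu = mean(values)
    sigma = pstdev(values)
    return [(v - mu) / (sigma + eps) for v in values]


def coupled_advantages(rewards):
    """GRPO-style baseline: sum rewards per rollout, then normalize the sums."""
    totals = [sum(r) for r in rewards]
    return normalize(totals)


def decoupled_advantages(rewards):
    """Assumed decoupled variant: normalize each reward across the group
    separately, then sum the normalized values per rollout."""
    n_rewards = len(rewards[0])
    per_reward = [
        normalize([r[k] for r in rewards]) for k in range(n_rewards)
    ]
    return [sum(col[i] for col in per_reward) for i in range(len(rewards))]


# Hypothetical group of 4 rollouts, each scored by two rewards on very
# different scales: (correctness in {0, 1}, style score in [0, 100]).
group = [(1, 10), (0, 90), (1, 50), (0, 50)]
coupled = coupled_advantages(group)
decoupled = decoupled_advantages(group)
```

In this toy group the coupled version ranks the incorrect rollout with the large style score highest, because the wide-scale reward dominates the sum. The decoupled version puts both rewards on a comparable scale before combining them, so the correct rollout with a mid-range style score comes out on top.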

Decentralized RAG allows your database to benefit all LLM clients. On the other hand, not all data sources are reliable. Managing source reliability on blockchain can avoid third-party manipulation. Introducing dRAG + Blockchain + Truth Discovery: arxiv.org/abs/2511.07577

Excited to announce @amazon's new AI PhD Fellowship Program supporting 100+ students across 9 universities like Carnegie Mellon, MIT & Stanford. Fellows will be paired with senior scientists working in related fields, plus receive financial support and AWS credits for research. Learn more: amazon.science/news/amazon-la…

We implement this as a Jupyter-like REPL environment: The key idea is to put the user’s prompt in a Python variable and give the LLM a REPL loop where it can try to understand the prompt, without directly reading the whole content. The “root” LM interacts with the environment by writing code and looking at the outputs of each cell, and can recursively make LM calls inside this REPL environment to navigate its context. This is far more general than any “chunking” strategy. We argue that you should let the LLM itself decide how to best poke around, decompose, and recursively process the long prompt.
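The loop described above might look roughly like the sketch below. This is not the authors' implementation: `repl_loop`, `run_cell`, the `answer` convention for stopping, and both stub models are hypothetical stand-ins, and the "LMs" here are plain Python functions so the example runs without any model. It also executes generated code with `exec`, which a real system would need to sandbox.

```python
import contextlib
import io


def run_cell(code, env):
    """Execute one cell in the shared namespace and return captured stdout."""
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        exec(code, env)  # unsafe for untrusted code; sandbox in practice
    return buf.getvalue()


def repl_loop(prompt, root_lm, sub_lm, max_steps=8):
    """Let root_lm explore `prompt` by writing cells instead of reading it.

    The prompt lives in the variable `prompt`; `llm` is a callable the
    generated code can use for recursive sub-calls on smaller pieces.
    The loop stops once a cell assigns a variable named `answer`
    (a convention assumed for this sketch).
    """
    env = {"prompt": prompt, "llm": sub_lm}
    transcript = []  # (code, output) pairs the root model gets to see
    for _ in range(max_steps):
        code = root_lm(transcript)
        output = run_cell(code, env)
        transcript.append((code, output))
        if "answer" in env:
            return env["answer"]
    return None


# Stub "root LM": replays a fixed script of cells, one per step.
SCRIPT = [
    "print(len(prompt))",         # inspect size without reading content
    "answer = llm(prompt[-9:])",  # recursive call on a small slice
]


def scripted_root_lm(transcript):
    return SCRIPT[len(transcript)]


# Stub "sub LM": returns whatever follows the last '=' in its input.
def stub_sub_lm(text):
    return text.split("=")[-1]


long_prompt = "filler " * 1000 + "needle=42"
result = repl_loop(long_prompt, scripted_root_lm, stub_sub_lm)
```

The root model only ever sees the cells it wrote and their outputs, never the full prompt, which is what distinguishes this from a fixed chunking pipeline: the decomposition is chosen by the code the model writes at each step.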

Title: Always Tell Me The Odds: Fine-grained Conditional Probability Estimation 📄Paper: openreview.net/pdf?id=xhDcG8q… 🤗Models: huggingface.co/Zhengping/cond… 💻Code: github.com/zipJiang/decod… 🤝Coauthor: @zhengping_jiang @anqi_liu33 @ben_vandurme

My 3rd blogpost on PG, the topic I am least familiar with but get asked a lot, so I thought I'd just put together the very limited stuff I know on this topic. Somehow the post gets cynical from time to time🙃 nanjiang.cs.illinois.edu/2025/09/29/pg.…