Minglai Yang

@Yminglai

reasoning & interp #NLProc. Founder @uaaiclub | Research @labclu @thukeg @Abaka_AI @2077AI

While Alec is one of the best ML researchers of all time, LLMs started way before. Here's one from 2013 with a non-neural architecture and one from 2016, which is afaik the first neural LLM if we define an LLM as an LM w/ >1B params.

Mind blown: a Chinese quant college student builds an AI swarm engine in 10 days flat, explodes GitHub with 13,000+ stars, and scores $4,000,000 in funding! Introducing MiroFish, the multi-agent simulator that's revolutionizing predictions for trading, PR, and more.
What is MiroFish? It's a digital sandbox where thousands of AI agents with individual memories and behaviors interact like a real society. Feed it any scenario (a news leak, a policy change, or even a classic novel's missing ending), and it simulates crowd reactions, debates, and outcomes to forecast real-world events.
The Creator's Story:
- In late 2025, fourth-year student Guo Hanjiang coded the core using AI assistants.
- It went viral overnight, landing him 30m yuan (~$4m) from Shanda Group.
- He ditched the dorm, started a company, and now leads the charge.
Key Applications:
- Trading: Input financial news or reports and watch simulated market panics and price swings for predictive insights.
- PR Testing: Companies and politicians run draft statements to spot backlash and refine messaging.
- Creative Experiments: Loaded with a Chinese novel whose ending was lost, agents role-played the characters and generated a logical finale.
- Easy setup: Deploy via Docker in minutes with any LLM API key.
Pro tip: Simulate something wild, like Elon Musk tweeting about Dogecoin 2.0: spawn agent traders, influencers, and investors, then generate real-time video clips of the frenzy to test moonshots or crashes risk-free.
Traders are already winning big: check this one on Polymarket, with $120,000+ in net profits from spot-on SPX 500 bets, powered by MiroFish sims on historical data. His profile: polymarket.com/profile/%40moi…
For effortless gains, try Kreo copy trading: auto-mirror pros like him and ride their edges. Try here: kreo.app/@join

Add his wallet: [0x17559efac103ac7f361be37ec0b93888d4c55aac] to [t.me/KreoPolyBot?st…] and start tracking/copying him. Repo: github.com/666ghj/MiroFish

🔥 What actually happens during multi-step reasoning with LLMs? ❓ What are the internal computations? ❓ How is such capability acquired during training? ❓ Does the latent reasoning rely on shortcuts? ❓ How does CoT remodel internal computation? ❓ Why does CoT enhance reasoning capability? And more... Many questions remain about the internal machinery. We wrote a paper that systematically reviews existing work on revealing the mechanisms behind LLM multi-step reasoning, from implicit latent reasoning to explicit CoT reasoning. We also highlight directions for future mechanistic studies. 📄 Paper: arxiv.org/pdf/2601.14270 💻 Github: github.com/PKU-PILLAR-Gro…

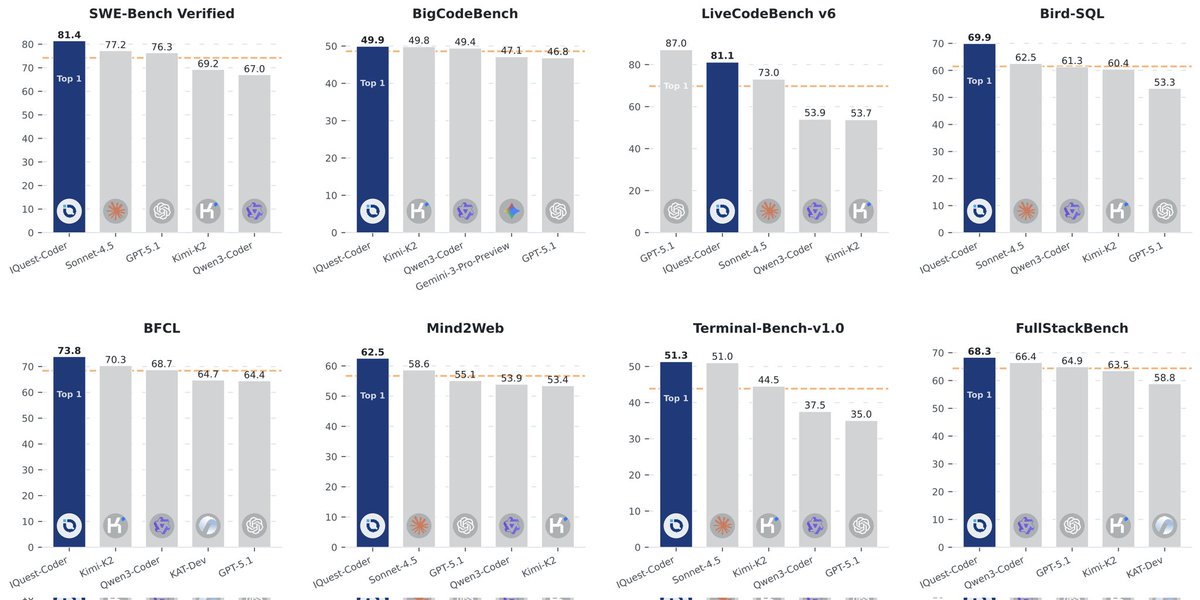

Another hardcore model arch using a looped transformer, from a Chinese quant company: github.com/IQuestLab/IQue…

What are Chinese quant companies smoking to get this kind of performance??? Mogging Sonnet 4.5 with a 40B

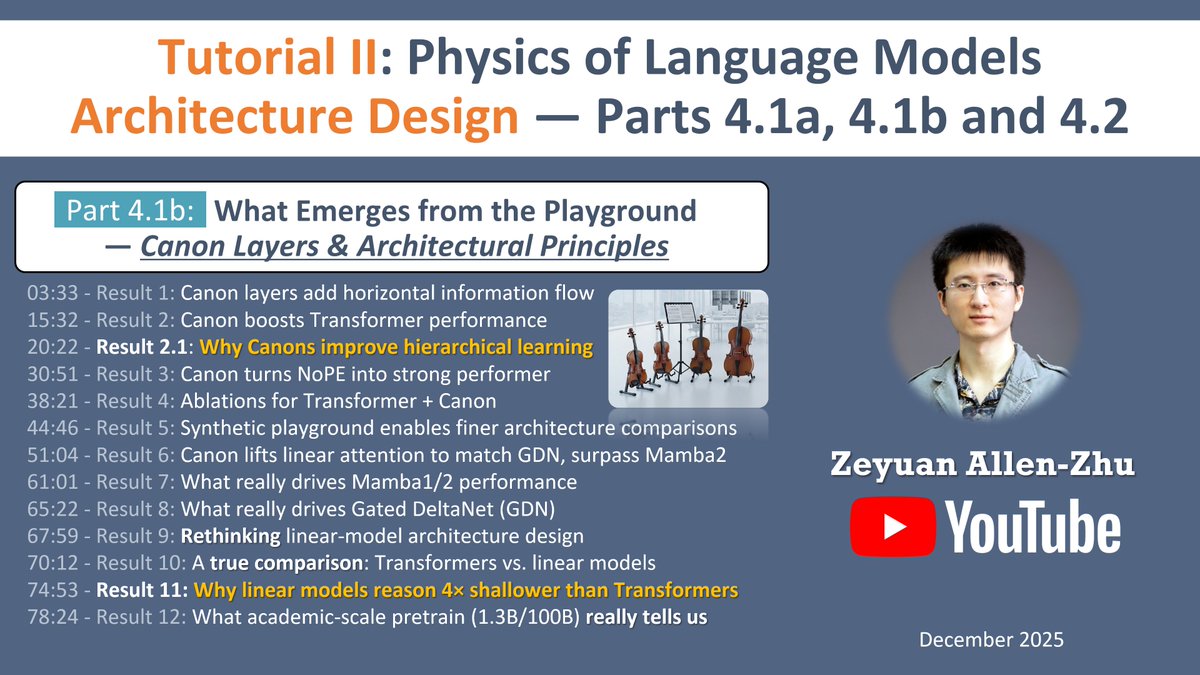

I’m launching Tutorial II for Physics of Language Models. Many people focus on large-scale results. This tutorial is about why those results are often artifacts of noise, and how to eliminate that noise at the design level.
The first video (Part 4.1a, 1 hour) is the most important one. It focuses on methodology, not benchmarks:
- how real-life pretraining can be “cheated”,
- why academic-scale experiments are noisy,
- and most importantly, how to design a versatile, skill-pure synthetic pretraining playground.
I explain why our five synthetic tasks are designed the way they are, and how GPT2-small-scale (100M) models can reveal architectural truths that 8B models trained on 1T tokens often fail to expose reliably. This methodology is the backbone of the entire Physics series.
▶️ First video: Part 4.1a, methodology & playground design
🔜 Second video: Part 4.1b, architectural principles from the playground
🔜 Third video: Part 4.2, when the playground reshapes real-life pretraining
(You can find it via my profile.)

There are competing views on whether RL can genuinely improve a base model's performance (e.g., pass@128). The answer is both yes and no, largely depending on the interplay between pre-training, mid-training, and RL. We trained a few hundred GPT-2-scale LMs on synthetic GSM-like reasoning data from scratch. Here is what we found: 🧵

The GDM mechanistic interpretability team has pivoted to a new approach: pragmatic interpretability. Our post details how we now do research, why now is the time to pivot, why we expect this way to have more impact, and why we think other interp researchers should follow suit.