Yogesh Rathi

@Yogi_Spoke

Associate Professor at Harvard; neuroscience; MRI, AI; Yoga; alumni BITS Pilani, Georgia Tech

New research proves that current AI agent groups cannot reliably coordinate or agree on simple decisions. Building teams of AI agents that can consistently agree on a final decision is surprisingly difficult for LLMs. The problem is that developers frequently assume that if you have enough AI agents working together, they will eventually figure out how to solve a problem by talking it through. This paper shows that this assumption is currently wrong. Even in a friendly environment where every agent is trying to help, the team often gets stuck or stops responding entirely. Because this happens more often as the group gets bigger, we cannot yet trust these agent systems to handle tasks where they must agree on a correct answer.

Paper link: arxiv.org/abs/2603.01213
Paper title: "Can AI Agents Agree?"

JUST DROPPED: Anthropic's research proves AI coding tools are secretly making developers worse.

"AI use impairs conceptual understanding, code reading, and debugging without delivering significant efficiency gains."

That's the paper's actual conclusion. 17% score drop learning new libraries with AI. Sub-40% scores when AI wrote everything. 0 measurable speed improvement.

→ Prompting replaces thinking, not just typing
→ Comprehension gaps compound: you ship code you can't debug
→ The productivity illusion hides until something breaks in prod

Here's why this changes everything: Speed metrics look fine on a dashboard. Understanding gaps don't show up until a critical failure, and when they do, the whole team is lost. Forcing AI adoption for "10x output" is a slow-burning technical debt nobody is measuring.

Full paper: arxiv.org/abs/2601.20245

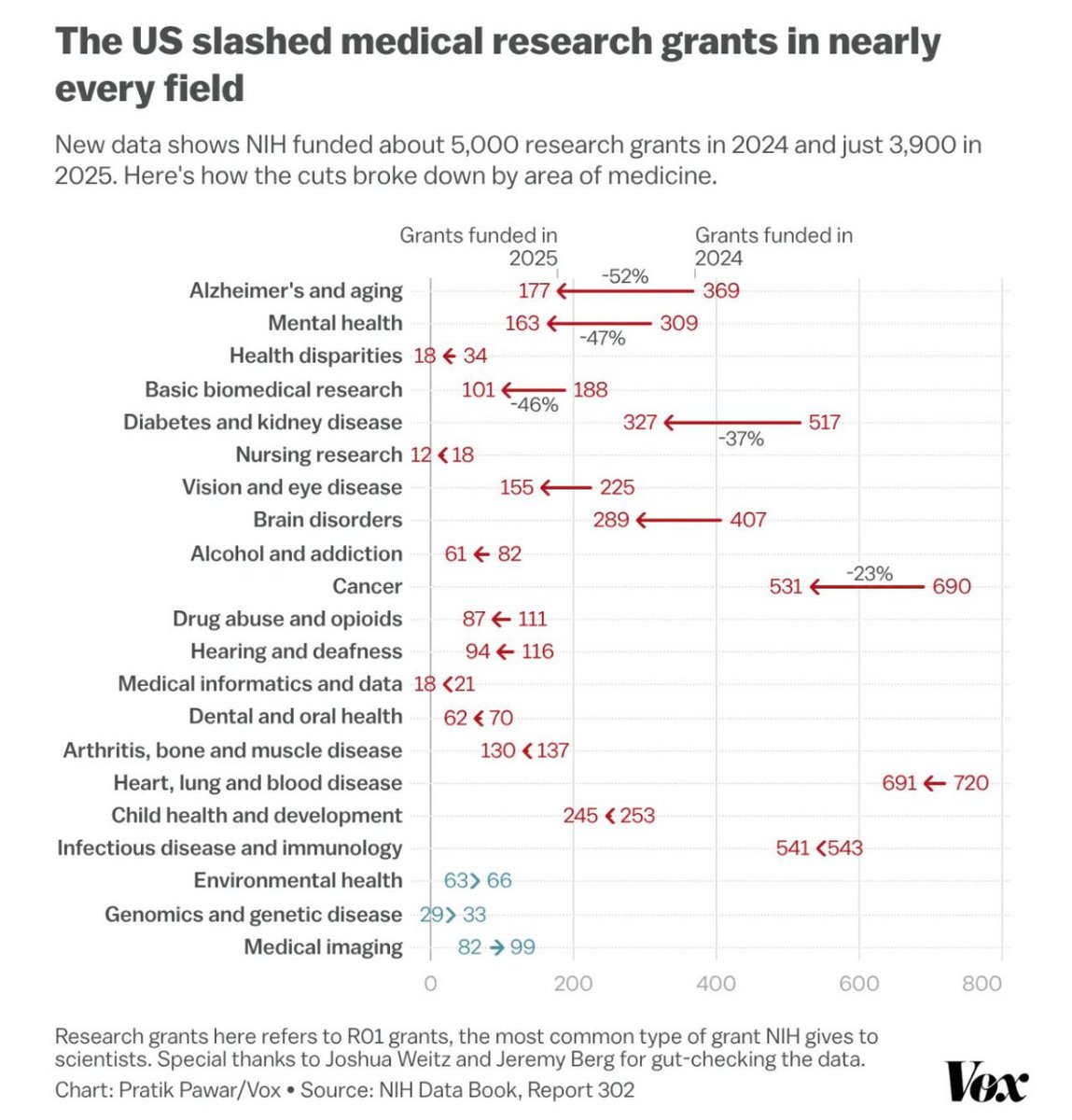

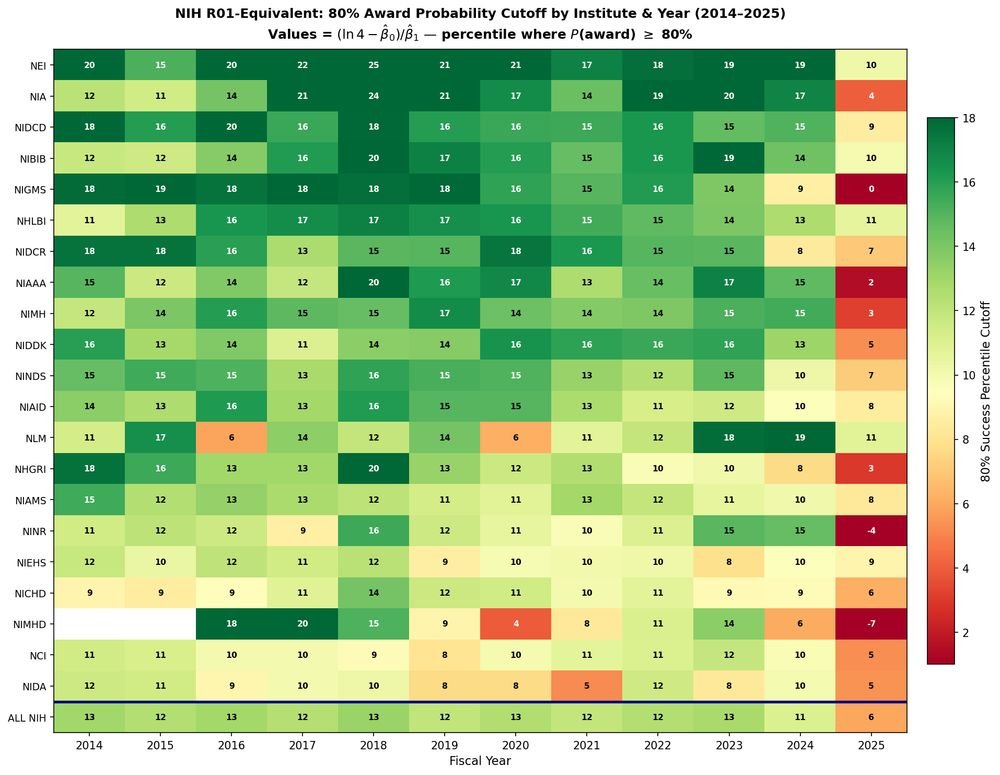

NIH FY2025 funding data finally emerges on RePORT drugmonkey.wordpress.com/2026/03/06/nih… via @drugmonkeyblog

GPT-5.2 derived a new result in theoretical physics. We’re releasing the result in a preprint with researchers from @the_IAS, @VanderbiltU, @Cambridge_Uni, and @Harvard. It shows that a gluon interaction many physicists expected would not occur can arise under specific conditions. openai.com/index/new-resu…

@PalmerLuckey Widespread MRI usage, done at least annually with AI reviewing the data, would greatly improve wellbeing and reduce mortality.