Youngseog Chung

@YoungseogC

PhD student at @mldcmu, @AutonLab | Jazz enthusiast | Tennis player

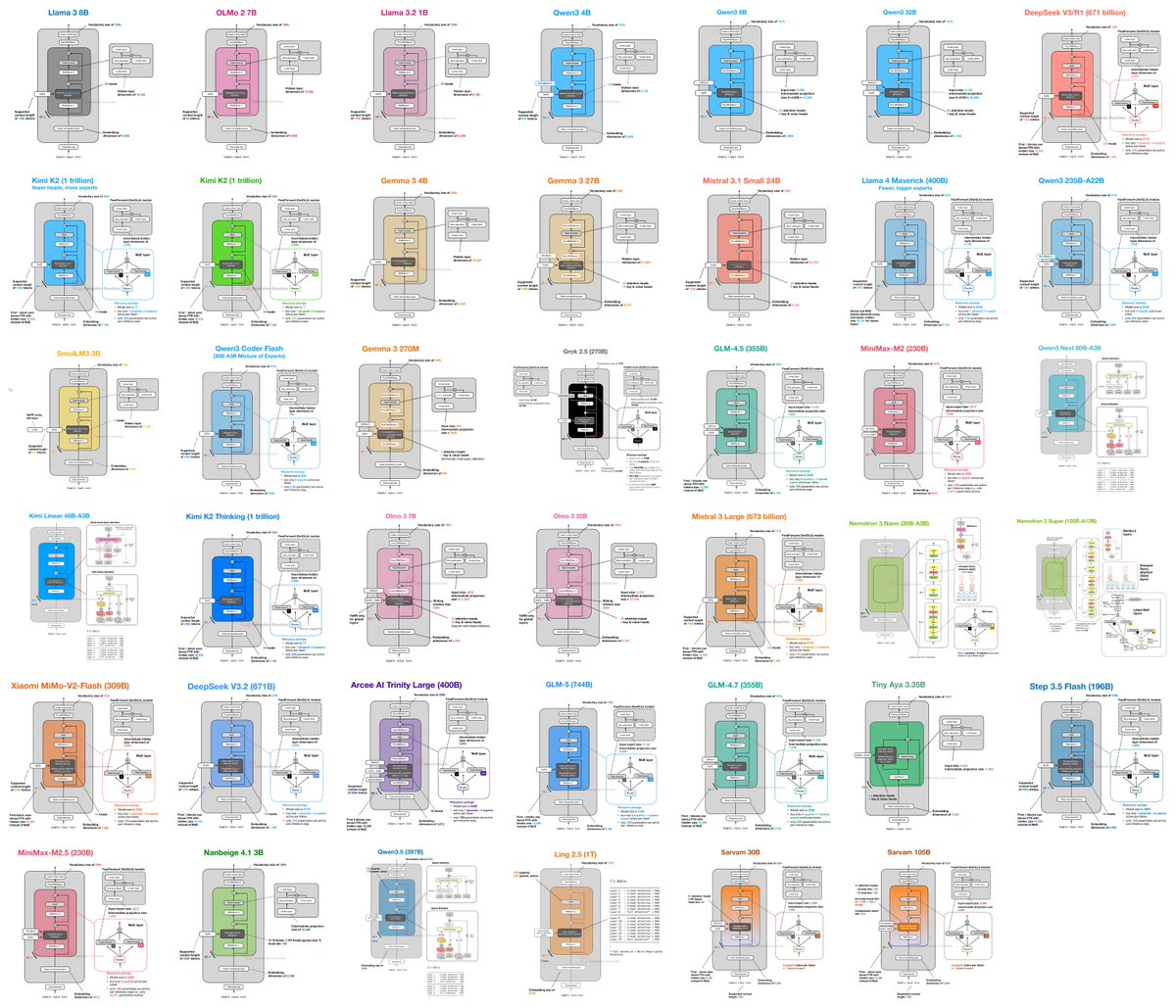

🧵 [1/14]: Talking-Heads Attention by @NoamShazeer et al. showed something interesting: maybe attention heads shouldn’t be fully isolated. 🧠 That got us thinking: If communication across heads matters, what is the right way for heads to communicate, especially from a one-layer reasoning perspective? 🔗⚙️ That question led us to Interleaved Head Attention (IHA) ✨ 📄 Paper link: arxiv.org/pdf/2602.21371

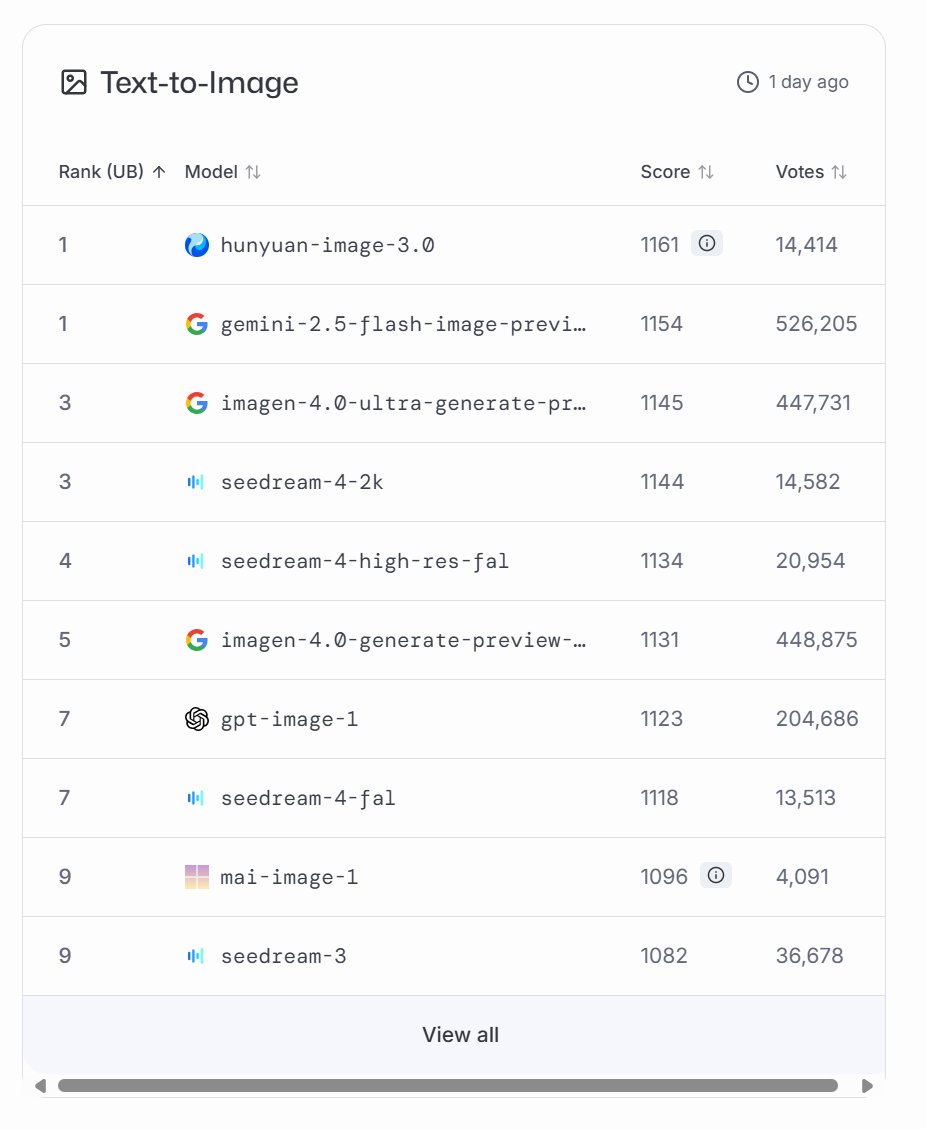

Our new image generator MAI-Image-2 is out! Available now on MAI Playground for everything from lifelike realism to detailed infographics. Our team has been pushing immensely hard for this release, and we are now among the top models out there: #3 family on @arena. Check out the details in our blog: microsoft.ai/news/introduci… It's shipping soon in Copilot and Bing Image Creator, as well as Microsoft Foundry. Really proud of our progress on models and products - stay tuned for new releases and come join us on our Superintelligence mission!

Composer 2 is now available in Cursor.

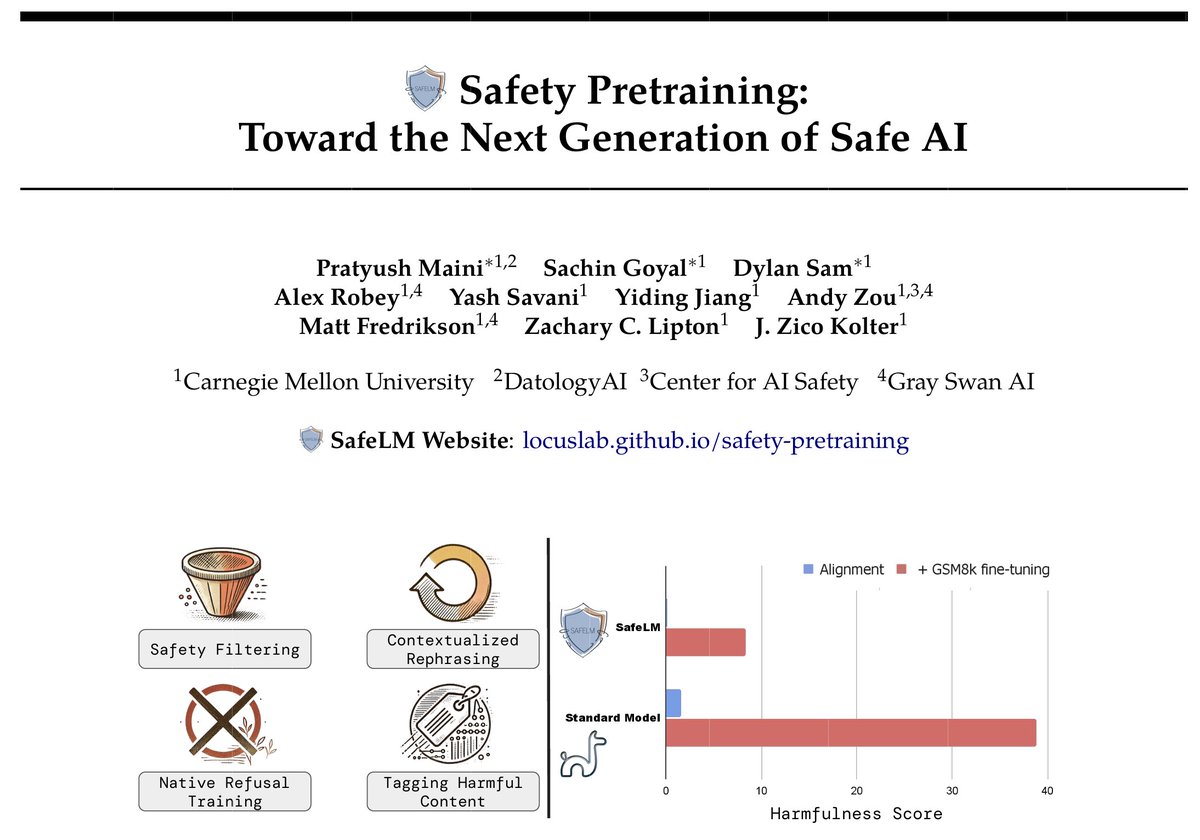

🧬 Distillation enables efficient emulation of LLMs, but verifying provenance remains a critical challenge. Introducing Antidistillation Fingerprinting (ADFP): A principled approach that aligns signals with student learning dynamics. 👇 (1/6)

Google Student Researcher Program 2026 is now OPEN!

Work on REAL AI/ML projects with:
• Google Research
• DeepMind
• Google Cloud

Open to: Bachelors / Masters / PhD
Duration: 3–12 months
Deadline: March 31

If you're serious about AI, this is your shot. Apply here google.com/about/careers/…

After GPT-5 Pro launched, I gave it that same problem. To my utter shock, it rediscovered the result in <30min! See for yourself: chatgpt.com/share/68b006eb… It’s not flawless (it needs priming on the flat-space case before tackling the full problem) but the leap is incredible.