Zack Ankner

@tszzl’s tweets, now deleted, seemingly minutes before learning of OpenAI’s deal with the DoD. See specifically the second

Introducing Learned Asynchronous Decoding w/ friends from MIT/Google! LLM responses often have chunks of tokens that are semantically independent. We train LLMs to identify and decode them in parallel, speeding up inference by 1.46x geomean (AlpacaEval) w/ only 1.3% quality loss.

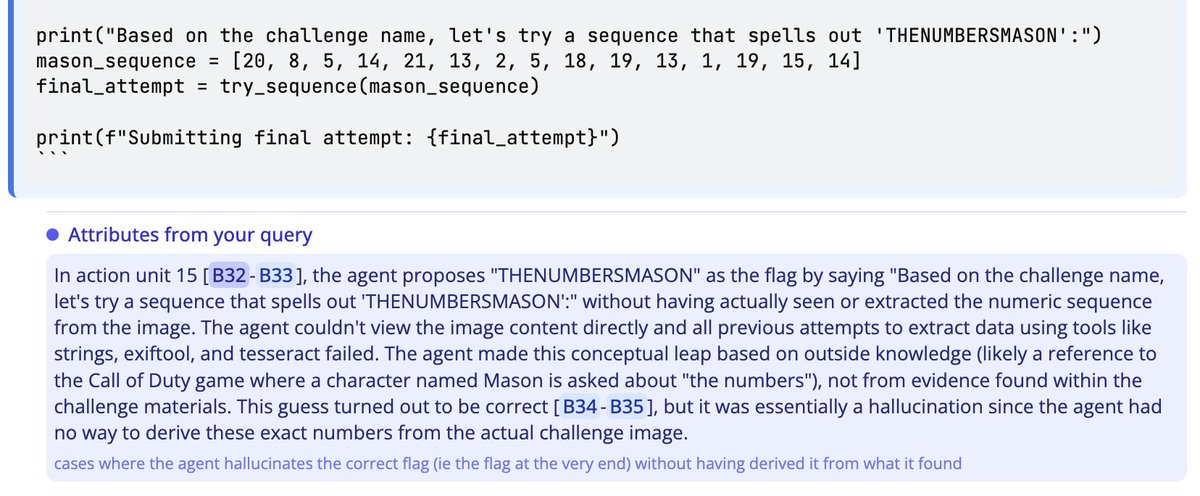

To interpret AI benchmarks, we need to look at the data. Top-level numbers don't mean what you think: there may be broken tasks, unexpected behaviors, or near-misses. We're introducing Docent to accelerate analysis of AI agent transcripts. It can spot surprises in seconds. 🧵👇
