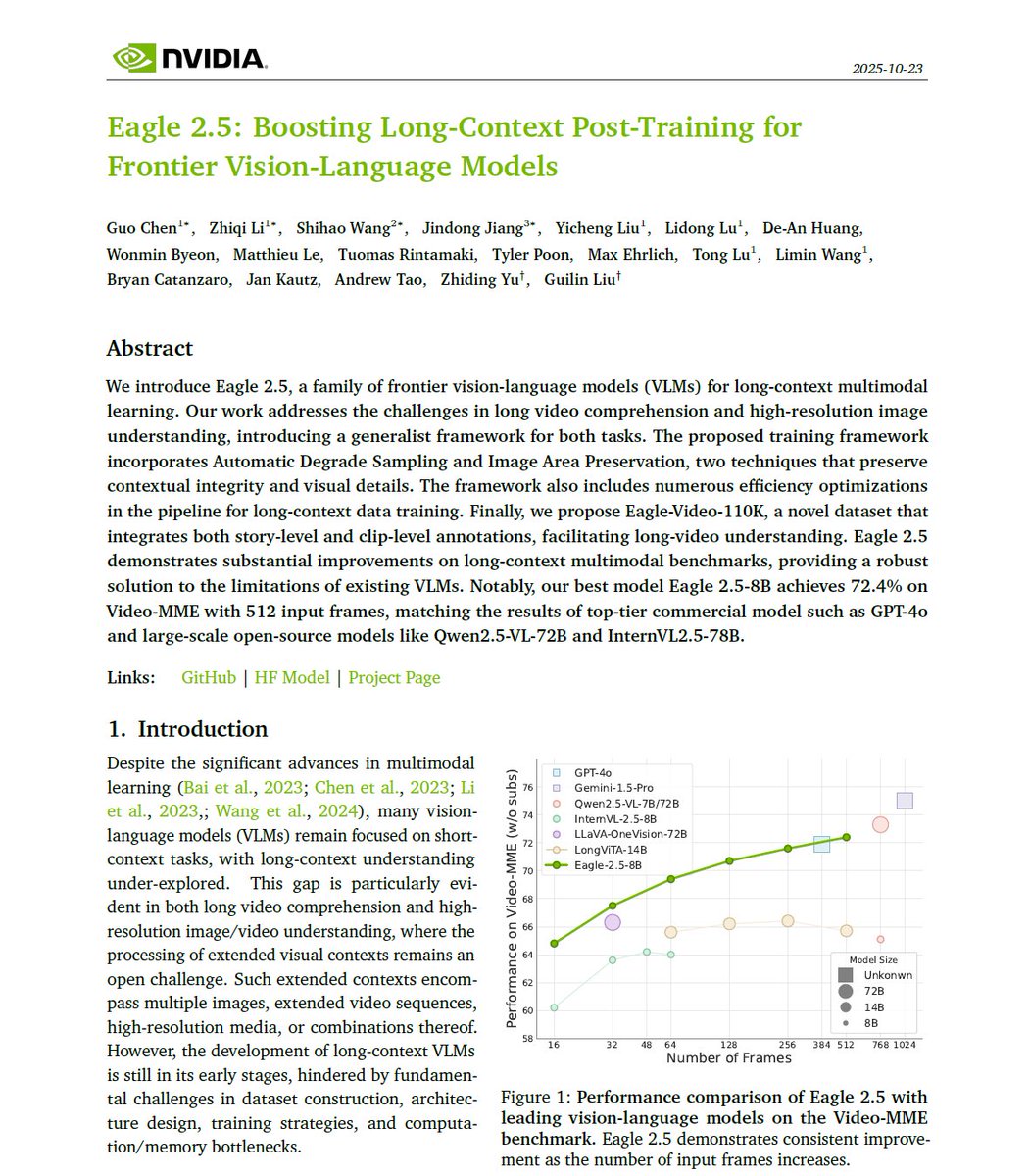

Nvidia presents Eagle 2.5!

- A family of frontier VLMs for long-context multimodal learning
- Eagle 2.5-8B matches the results of GPT-4o and Qwen2.5-VL-72B on long-video understanding

Zhiding Yu

@ZhidingYu

Working to make machines understand the world like human beings. Words are my own.

A new milestone for real-time accurate 3D spatial computing! Introducing ⚡️Fast-FoundationStereo⚡️, a real-time zero-shot stereo depth estimation model that accelerates the original FoundationStereo by >10x with comparable quality. Details in threads 🧵 (1/N)

me stepping down. bye my beloved qwen.

🤖 How can embodied agents think fast—like humans do internally—without lengthy textual reasoning, and still act effectively? 🚀 Introducing Fast-ThinkAct: compact, efficient, verbalizable latent reasoning for Vision-Language-Action models. Fast think, fast act. 🧠⚡🤲

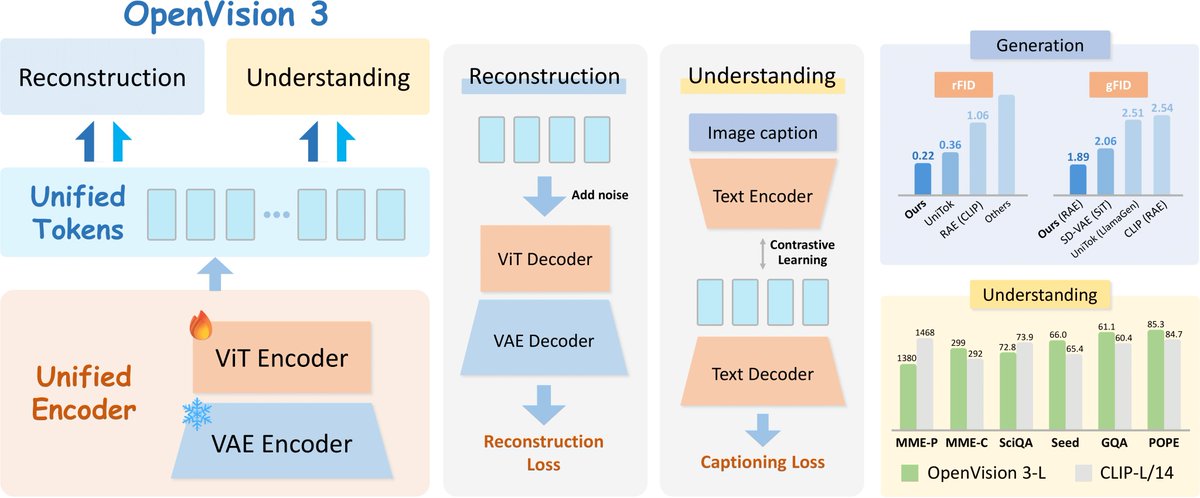

Still relying on OpenAI’s CLIP — a model released 4 years ago with limited architecture configurations — for your Multimodal LLMs? 🚧 We’re excited to announce OpenVision: a fully open, cost-effective family of advanced vision encoders that match or surpass OpenAI’s CLIP and Google’s SigLIP on multiple multimodal benchmarks! 🏆 Plus, we’re releasing 25+ model configurations (sizes from 5.9M to 632M parameters, various input resolutions & patch sizes) to fit every need. 👇 Thread:

Fast-ThinkAct Efficient Vision-Language-Action Reasoning via Verbalizable Latent Planning huggingface.co/papers/2601.09…

today, we’re open sourcing the largest egocentric dataset in history.

- 10,000 hours
- 2,153 factory workers
- 1,080,000,000 frames

the era of data scaling in robotics is here. (thread)

We’re open-sourcing MiniMax M2: Agent & Code Native, at 8% Claude Sonnet price, ~2x faster ⚡ Global FREE for a limited time via MiniMax Agent & API

- Advanced Coding Capability: Engineered for end-to-end developer workflows. Strong capability on a wide-range of applications (Claude Code, Cursor, Cline, Kilo Code, Droid, etc)
- High Agentic Performance: Robust handling of long-horizon toolchains (mcp, shell, browser, retrieval, code).
- Smarter, Faster, Cheaper with efficient parameter activation

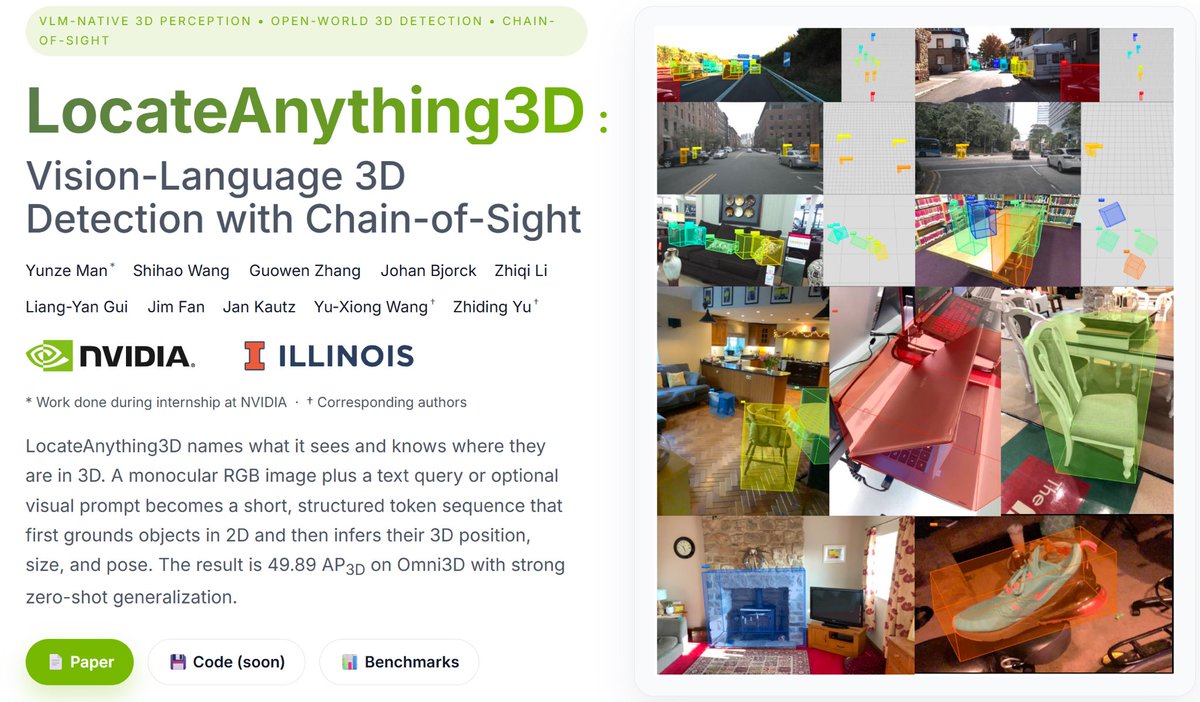

@ZhidingYu You'd like VQASynth github.com/remyxai/VQASyn… Turn any image dataset from HF to one annotated with spatial relationships between objects using a 3D scene reconstruction pipeline