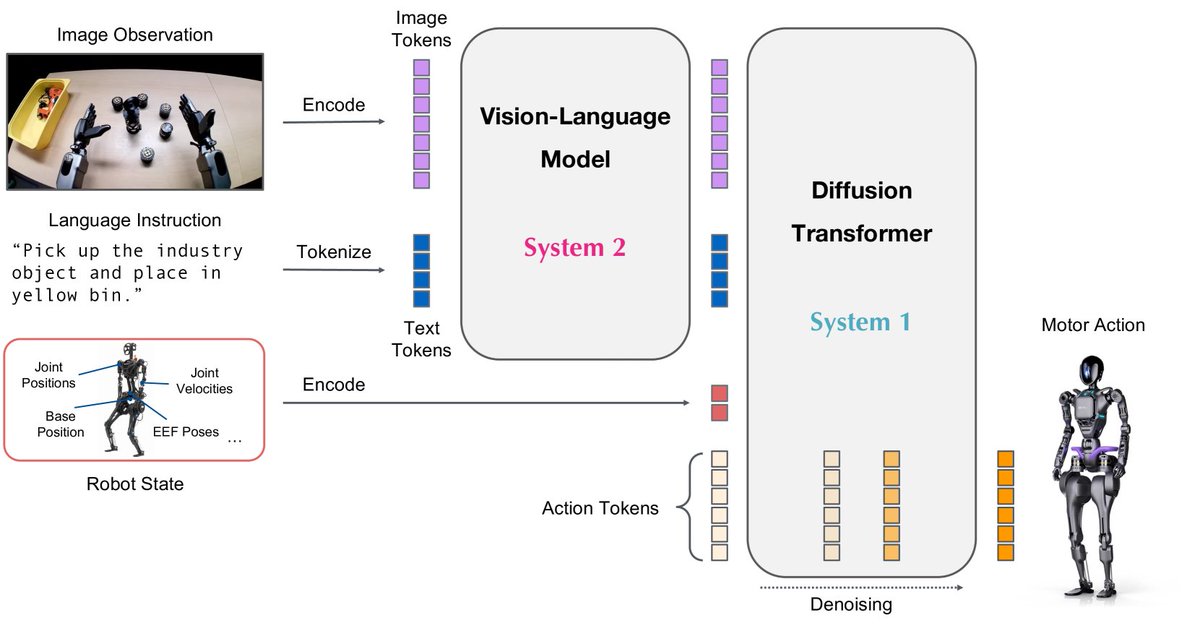

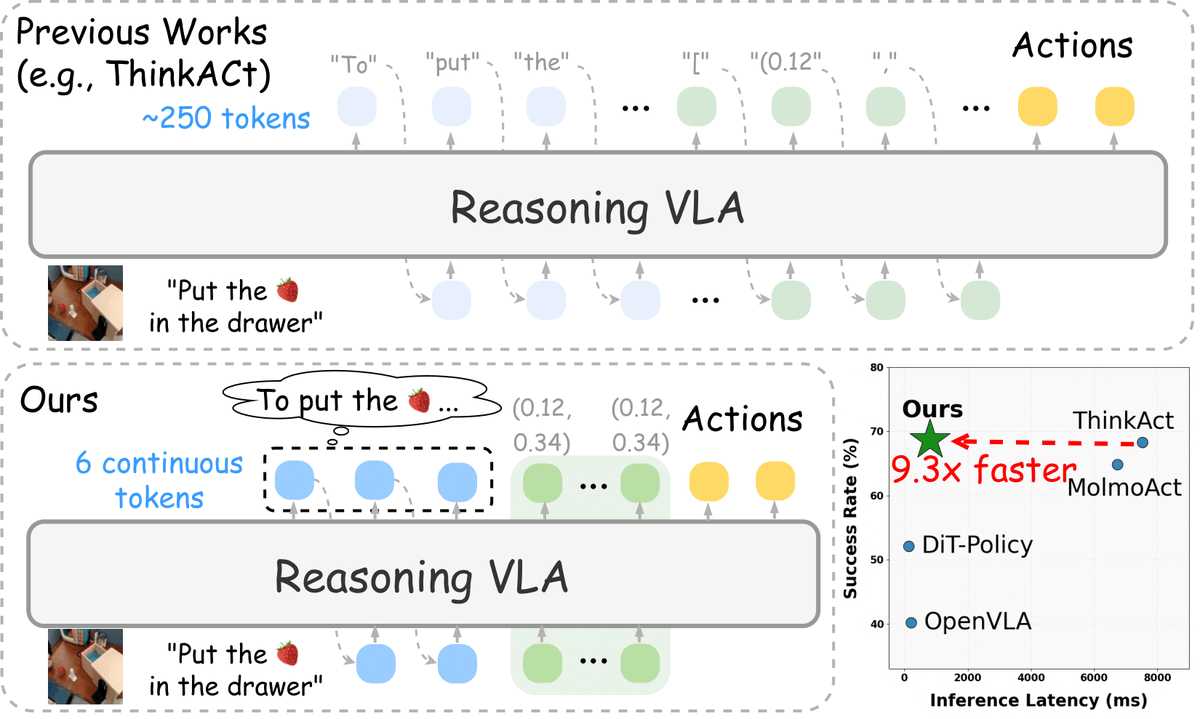

🤖 How can embodied agents think fast, as humans do internally, without lengthy textual reasoning, and still act effectively? 🚀 Introducing Fast-ThinkAct: compact, efficient, verbalizable latent reasoning for Vision-Language-Action models. Fast think, fast act. 🧠⚡🤲