Min-Hung (Steve) Chen

@CMHungSteven

Staff Research Scientist, NVR TW @NVIDIAAI @NVIDIA (Project Lead: DoRA, EoRA, 4D-RGPT) | Ph.D. @GeorgiaTech | Multimodal AI | https://t.co/dKaEzVoTfZ

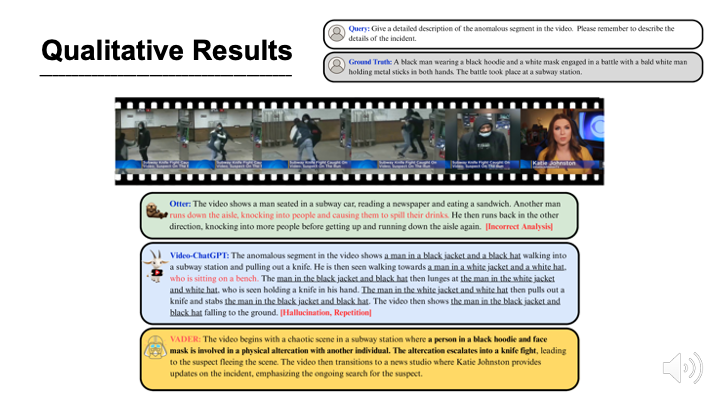

Current Vision-Language Models completely struggle with complex 4D dynamics. We fixed that. 🤯 🚨 Introducing 4D-RGPT: distilling perceptual knowledge directly into LLMs for precise space & time reasoning. 🎉 Excited to share our @NVIDIAAI work has been accepted to #CVPR2026! @CVPR A quick dive into how it works 🧵👇

🚨 New paper alert! We introduce Vision-Language Programs (VLP), a neuro-symbolic framework that combines the perceptual power of VLMs with program synthesis for robust visual reasoning.

A new milestone for real-time, accurate 3D spatial computing! Introducing ⚡️Fast-FoundationStereo⚡️, a real-time zero-shot stereo depth estimation model that accelerates the original FoundationStereo by >10x with comparable quality. Details in the thread 🧵 (1/N)

🎉 CaptionQA has been accepted to CVPR 2026! If you care about captioning in real systems, we built CaptionQA to be simple and practical. 📄 Paper: arxiv.org/pdf/2511.21025… 🙌 You're welcome to try CaptionQA on your models and share results! #CVPR2026 #MLLM #Benchmark #Captioning

🚨 ✨ New 3D pose estimation method from @mwmathislab! #FMPose3D allows for monocular (i.e. single camera) 2D ➡️ 3D 🔥 Led by @TiwangCS & w/@xiaoyu912huang #FMPose3D is SOTA on human & animal 3D benchmarks, & will be integrated into @DeepLabCut ⬇️👀 📝 arxiv.org/abs/2602.05755