Bill Ding 🔨

14.7K posts

@_BILLDING_

just some anon interested in food security, building soil, decentralized systems, ai, and tasty terps.

I know everyone is dunking on Dawkins because it's fun to dunk on Dawkins but really, his sense of wonder here is something admirable to me. We're all so saturated in advanced tech that we're mostly just cynical. Questions about machine consciousness are only going to increase as these models get smarter and better, hallucinate less, incorporate new reasoning approaches, etc. And they do say some surprisingly profound things at times. I think before we get too smug about machine consciousness, maybe we should actually nail down what human consciousness is, where it resides, etc. Humility seems like the best approach as we fashion our strange new gods.

we don’t shill 3d printers enough. you have no idea how much one changes your life. there’s a print for almost every problem you’ll ever have, and it saves you money. truly amazing technology

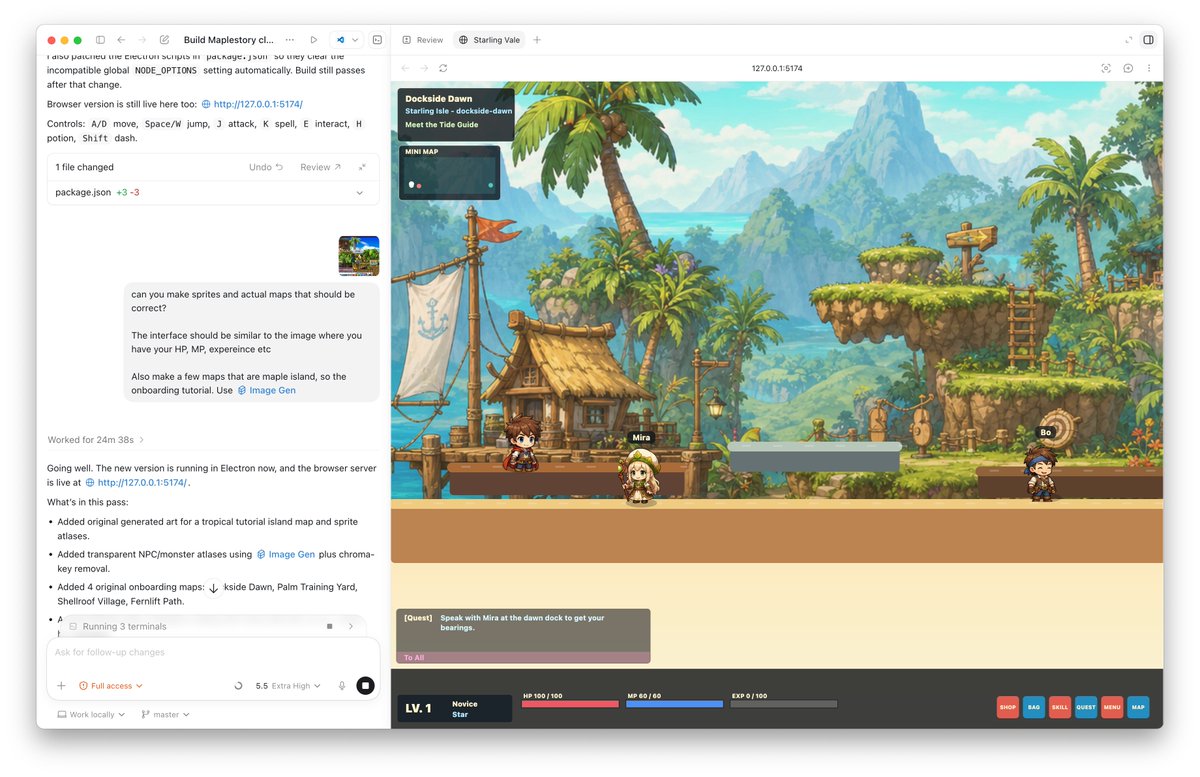

While alternative coding harnesses may have short term lift, they will be bitter lesson’d away. I am bearish on any harness that doesn’t come from the lab whose model you are using. You’re fighting against post-training. To put a finer point on this, you know how like, ioctls are like “huh that's weird but I guess whatever it's what we've got we can work with that”? It is exactly the same with like, the particular JSON construction the Codex shell tool uses. The model used to mangle nested quotes in this monstrosity of an RPC all the time but now it does not, and it does not matter that the API is bad because billions of failed invocations are used to train to the harness we have, not the harness we deserve.

An eerie image shows how a huge data center has brought permanent artificial daylight to a rural Texas community trib.al/aTdhFsh