Michael Allen

7.6K posts

@_Dark_Knight_

Building at the intersection of AI + offensive security: https://t.co/5DiZGjk6VJ

Seattle, WA · Joined March 2009

277 Following · 1.5K Followers

Pinned Tweet

@Dave_Maynor @zeroxjf @trq212 I've suggested having a vetted cyber-researcher option or something but have been ignored. I canceled most of the LLM subscriptions, bought some rtx 6000s, and am using local LLMs with cyber friendly system prompts now. Done with nanny no privacy LLMs.

The new cyber-abuse guardrails in Opus 4.6 are likely to drive a mass exodus of researchers from the platform. They give the option to submit a form to prove legitimate research, but I got no confirmation of my submission last week and have no way of knowing its status 🤷‍♂️ @trq212

@zeroxjf @EvanKlein338226 @trq212 codex will not even write a frida script for the most basic of things

@EvanKlein338226 @trq212 2 weeks?! 🤦🏻‍♂️ it’s like they want people to flock to codex even more rapidly

Michael Allen retweeted

we're testing a new version of /init based on your feedback- it should interview you and help setup skills, hooks, etc.

you can enable it with this env_var flag:

CLAUDE_CODE_NEW_INIT=1 claude

would love your feedback!

Thariq @trq212

I want to make /init more useful- what do you think it should do to help setup Claude Code in a repo?

@NotMedic @HackingLZ @UK_Daniel_Card maybe we have had it wrong all this time -- I will see myself out......

@HackingLZ @UK_Daniel_Card … they named the company Delve? Literally the word that was used to pick out the first wave of AI slop? 😂

I wouldn’t trust someone who called it “Pen test” vs “Pentest”

Eshaan Gulati @eshaangulati_

I was on a slack channel with delve and almost wired them 8K yesterday for SOC2… X saved my life - for real this time.

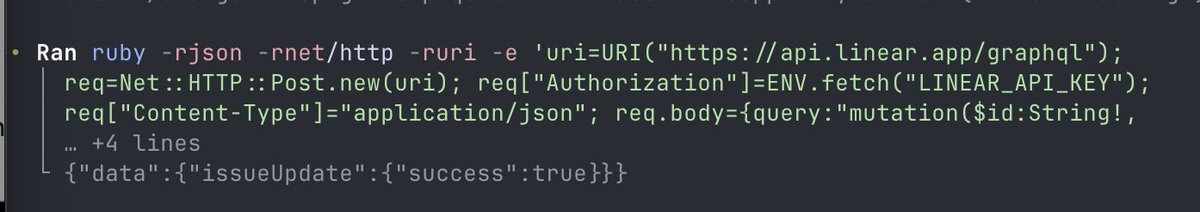

@mattpocockuk ok kewl...was running into some weirdness with processing each issue -- I think it was due to the updates I made to the script -- eventually worked but was curious if I was missing something -- thanks

@_Dark_Knight_ Yeah about the same as you! What issues are you having?

@mattpocockuk I was curious on what your ralph-loop looks like to work through the issues created from the /prd-to-issues skill. Right now I do something like

5. Push and create a PR, then merge it.

6. Close the issue.

But what is your approach?

[BLOG] Cassian: Agentic Differential Security Review for Pull Requests | mykalseceng.github.io/posts/cassian-…

Michael Allen retweeted

OpenAI released Symphony this week.

I tried implementing the same pattern to see how it behaves. Most of the work ended up in review loops, artifacts, visibility, and PRs.

Notes: blog.boesen.me/posts/lessons-…

[BLOG] mykalseceng.github.io/posts/agentic-… | ODYSSEUS: Building an Agentic Pentest Platform -- How I built a multi-stage agentic pentest pipeline, what it found and missed, and how to use the approach in your workflows

@_Dark_Knight_ @HackingLZ At the same time they have a junior dev, the marketing team, the cfo all smashing metrics, building stuff and being productive using personal accounts.

The disconnect is real and compliance and legal will have a hard time catching up.

@HackingLZ @stokfredrik "For example, many wouldn't allow external or even internal people to run their data through frontier models during offensive testing." -- 100% this

I have no doubt AI tooling will augment testing in lots of ways, so if that means fewer OffSec jobs, I get it. We were in a period where OffSec was "easy" and people forgot the job was supposed to get harder over time; instead, boot camps told people they would make X after 12 weeks.

The nature of most organizations' security programs is a little more complex than their public-facing bug bounty programs. The leap most people are making is that AI will close the gap to 80% and someone with no domain knowledge will drive that 80%. There is also a whole "replace a job vs. tasks" argument that all of AI land is currently having.

Another somewhat useful point: bug bounties largely avoid the data protection requirements companies have. For example, many wouldn't allow external or even internal people to run their data through frontier models during offensive testing.

The greater tipping point in the replacement discussion will come when local models reach a certain capability threshold, because it will allow companies to maintain safeguards while still meeting compliance and regulatory requirements. In that same space, there's also a lack of training data for internal pentesting and other areas compared to much of the bug bounty landscape.
