Jonathan Lai

30 posts

@_JLai

Post training @GoogleDeepMind, Gemini Reasoning, training algorithms, RL, opinions are my own

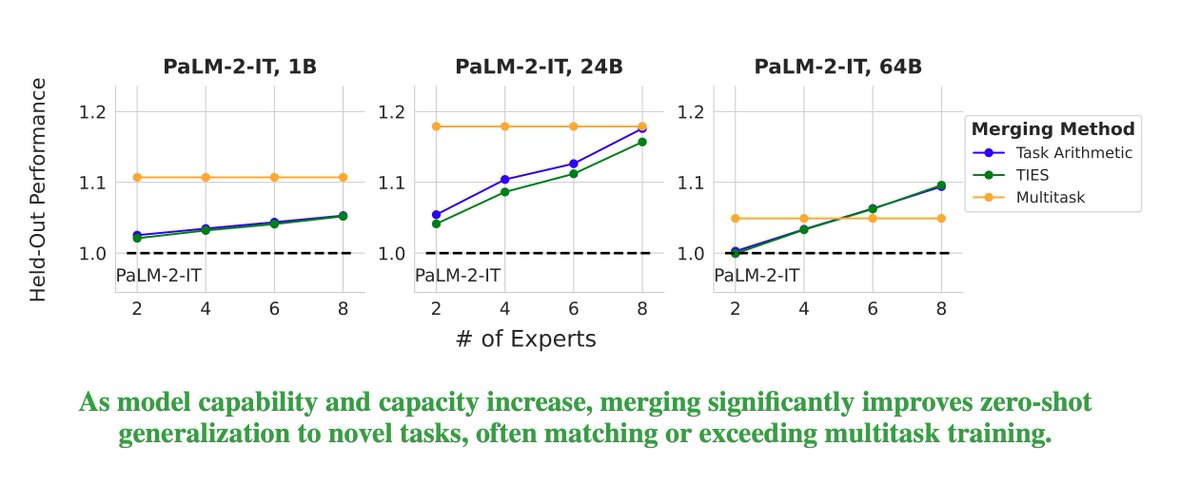

Excited to share that our paper on model merging at scale has been accepted to Transactions on Machine Learning Research (TMLR). Huge congrats to my intern @prateeky2806 and our awesome co-authors @_JLai, @alexandraxron, @manaalfar, @mohitban47, and @TsendeeMTS 🎉!!

Ever wondered if model merging works at scale? Maybe the benefits wear off for bigger models? Maybe you considered using model merging for post-training your large model but weren't sure whether it generalizes well? cc: @GoogleAI @GoogleDeepMind @uncnlp 🧵👇

Excited to announce my internship work on large-scale model merging! We explore what happens when you combine larger and larger language models (up to 64B parameters!) and how different factors (model size, base model quality, merging methods, and # of experts) impact held-in performance and generalization. 📰: arxiv.org/abs/2410.03617

Today we have published our updated Gemini 1.5 Model Technical Report. As @JeffDean highlights, we have made significant progress in Gemini 1.5 Pro across all key benchmarks; TL;DR: 1.5 Pro > 1.0 Ultra, 1.5 Flash (our fastest model) ~= 1.0 Ultra. As a math undergrad, I find our dramatic results in mathematics particularly exciting! In section 7 of the tech report, we present new results on a math-specialised variant of Gemini 1.5 Pro which performs strongly on competition-level math problems, including a breakthrough performance of 91.1% on the Hendrycks MATH benchmark without tool-use (examples below 🧵). Gemini 1.5 is widely available: try it out for free at aistudio.google.com and read the full tech report at goo.gle/GeminiV1-5

Think you know Gemini? 🤔 Think again. Meet Gemini 2.5: our most intelligent model 💡 The first release is Pro Experimental, which is state-of-the-art across many benchmarks - meaning it can handle complex problems and give more accurate responses. Try it now → goo.gle/4c2HKjf

BREAKING: Gemini 2.5 Pro is now #1 on the Arena leaderboard - the largest score jump ever (+40 pts vs Grok-3/GPT-4.5)! 🏆 Tested under codename "nebula"🌌, Gemini 2.5 Pro ranked #1🥇 across ALL categories and UNIQUELY #1 in Math, Creative Writing, Instruction Following, Longer Query, and Multi-Turn! Massive congrats to @GoogleDeepMind for this incredible Arena milestone! 🙌 More highlights in thread👇