Mikel Bober-Irizar


@mikb0b

24 // Kaggle Competitions Grandmaster & ML/AI Researcher. Building video games @iconicgamesio, machine reasoning @Cambridge_CL, bioscience @ForecomAI.

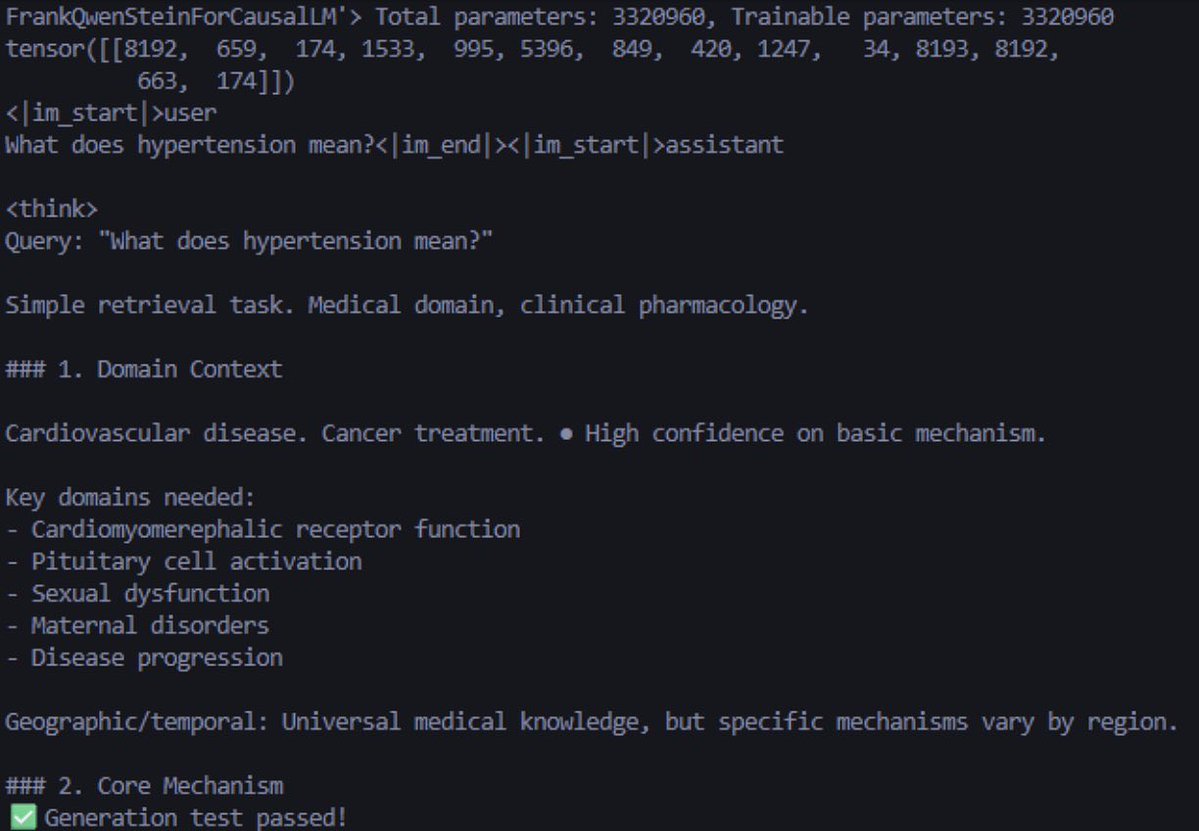

3.3M parameters. It's funny; I'm going to train it until the end: roughly 75 hours total on a single RTX 3090, at batch size 256 x sequence length 512.

NOOO

hypothetical: say you have raw code and want to make an LLM better at it. how do you even turn that into a dataset? no QA pairs, just code, barely any comments. what's the best way to do this? I always wondered if there were solid papers on this. one obvious path: prompt an LLM with stuff like

- “what does this function do”
- “how to implement X”

etc. and generate question:answer pairs from code chunks
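A rough sketch of that "obvious path", assuming the raw code is Python and that one chunk equals one function. The helper names (`extract_function_chunks`, `build_prompts`) and the exact wording of the templates are made up for illustration; the actual LLM call is left out, so this only builds the prompts you would send.

```python
import ast

# Hypothetical question templates, loosely based on the two examples in the post.
QUESTION_TEMPLATES = [
    "What does the function `{name}` do?",
    "How would you implement `{name}`?",
]


def extract_function_chunks(source: str) -> list[tuple[str, str]]:
    """Return (name, source_text) for every function defined in `source`."""
    tree = ast.parse(source)
    chunks = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            text = ast.get_source_segment(source, node)
            if text is not None:
                chunks.append((node.name, text))
    return chunks


def build_prompts(source: str) -> list[dict]:
    """Pair each function chunk with each question template.

    Each returned dict holds the prompt to send to an LLM; the model's
    reply would become the answer half of a question:answer pair.
    """
    prompts = []
    for name, code in extract_function_chunks(source):
        for template in QUESTION_TEMPLATES:
            question = template.format(name=name)
            prompts.append({
                "function": name,
                "question": question,
                "prompt": f"{code}\n\nQuestion: {question}\nAnswer:",
            })
    return prompts
```

Chunking per function is just one choice; per-file or per-class chunks would work the same way, with different templates.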

Have You Ever Seen an RC Plane With 12 Engines