Rob Toews

@_RobToews

Partner @RadicalVCFund, AI columnist @Forbes. "the machine does not isolate man from the great problems of nature but plunges him more deeply into them."

In 2023, Stanford professor Graham Weaver gave his last lecture on how to destroy fear & live a wildly ambitious life.

His frameworks:
- Suffering is inevitable
- Sign up for the "10 years" test
- "Not me" & "Not now" traps

13 lessons on how to build an asymmetric life:

a call to action.... forbes.com/sites/robtoews…

Nvidia and @EmeraldAi_ announced a collaboration with AES, Constellation, NextEra, Invenergy and Vistra to develop "flexible AI factories" that adjust power consumption based on grid conditions. Pairs Nvidia's reference architecture with Emerald software to modulate compute workloads dynamically. No firm official project commitments yet but eagerly watching...

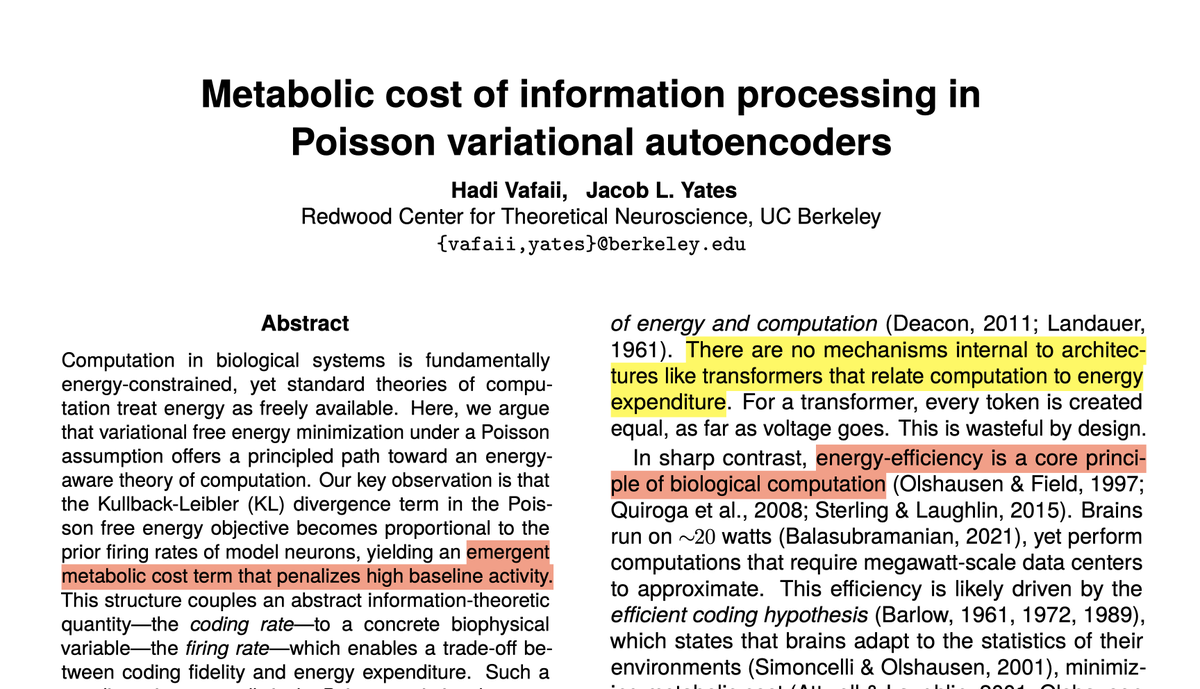

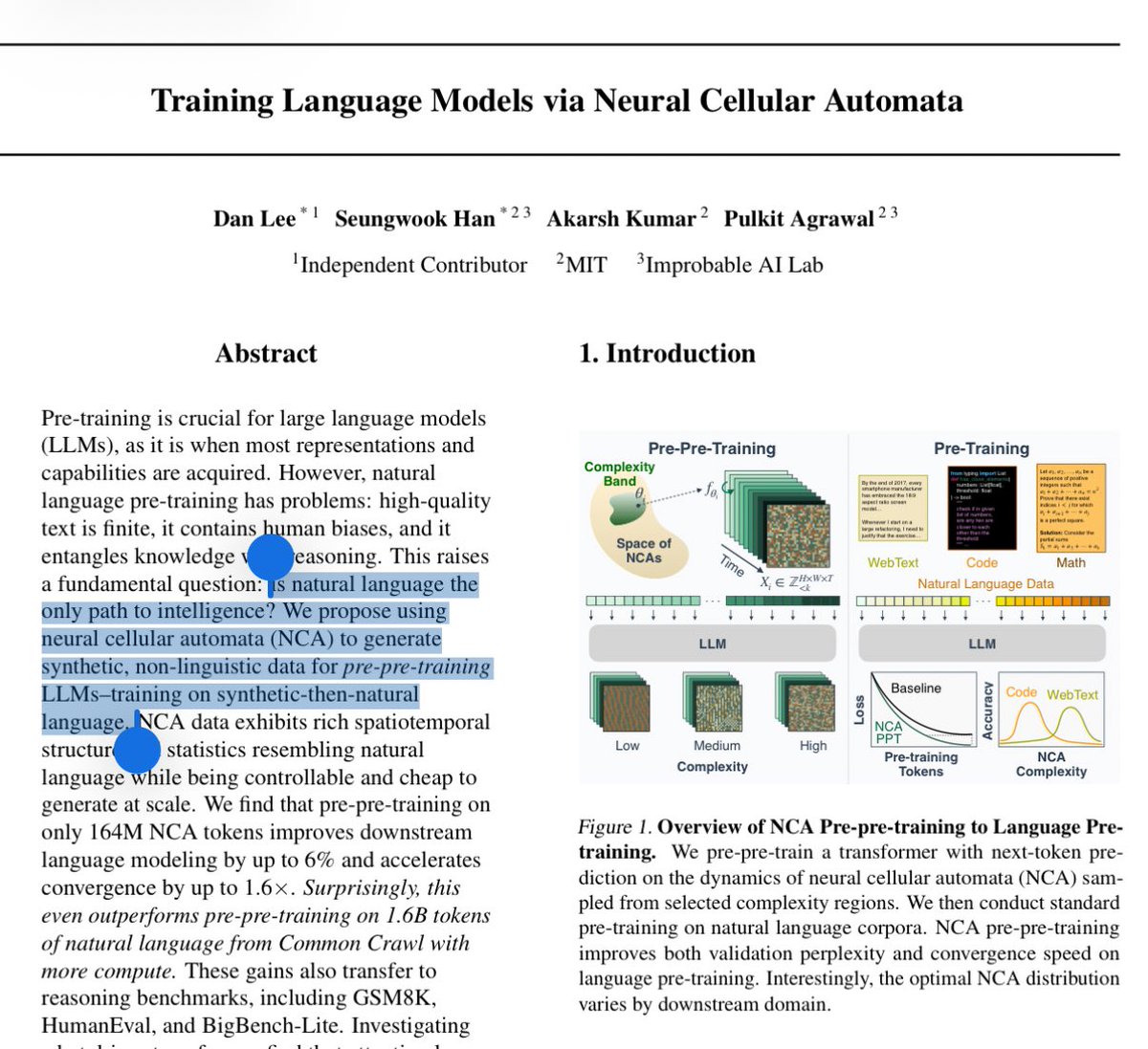

Can language models learn useful priors without ever seeing language? We pre-pre-train transformers on neural cellular automata — fully synthetic, zero language. This improves language modeling by up to 6%, speeds up convergence by 40%, and strengthens downstream reasoning. Surprisingly, it even beats pre-pre-training on natural text! Blog: hanseungwook.github.io/blog/nca-pre-p… (1/n)