___raf stahelin___

653 posts

@___rafrafraf___

photographer | ai | fashion | art

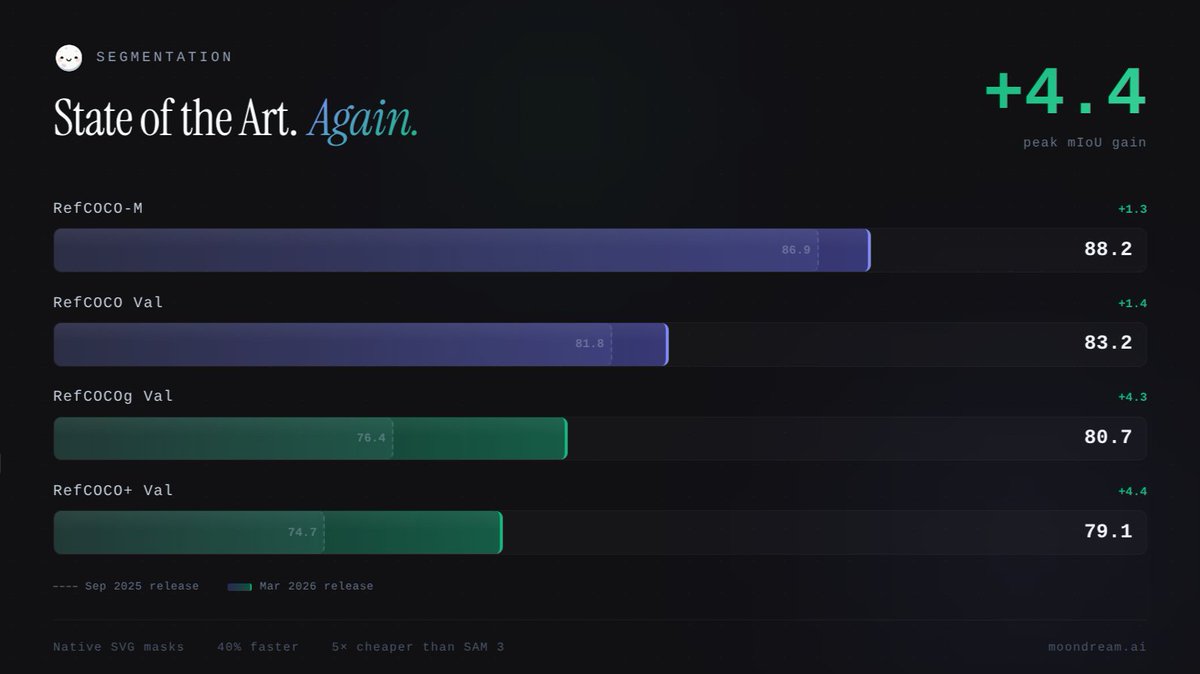

Moondream segmentation just leveled up: better masks, new SOTA benchmarks, and 40% faster. Already live on Moondream Cloud. Local model + technical whitepaper coming later this week. Read more: moondream.ai/blog/segmentin…

Seedance 2.0 is on its way to ComfyUI. Stay tuned!

We've received some feedback about a potential degradation of Opus 4.5 specifically in Claude Code. We're taking this seriously: we're going through every line of code changed and monitoring closely. In the meantime please submit any transcripts with issues through /feedback

FLUX.2 is out! 🚨 FLUX.2-dev brings state-of-the-art to open weights with a chonky 32B parameter model. However, it can run on low-end cards with quantization and the new remote text encoder. Read more on the 🧨 diffusers welcomes FLUX-2 blog: huggingface.co/blog/flux-2