Carlos Freire

1.6K posts

@_cfreire_

Tweets about movies, TV shows, and technology | RT, Follows ≠ endorsements. Personal account.

Sao Paulo, Brazil · Joined July 2020

922 Following · 190 Followers

@BenjaminDEKR Other than capital requirements, heavy regulation is a moat.

@thegrayghost @operationdanish Let's remember that Optimus's performance in the field might be the "FSD by 2020" all over again.

No doubt Tesla will get there, but everyone will be pushing half-baked products, because this is how you get the real-world data to improve them.

@operationdanish Never heard of it and now they’re front running Optimus….

Makes you wonder, either Optimus is:

-intentionally stalled

-bs

-this neo thing is not market ready nor fully autonomous

Imma bet on the last one

1X Neo is a scam. Beware.

Lots of folks are bullish on the 1X Neo but they don’t know that it’s not actually autonomous.

Watch this video. They are using remote workers to do the work. Their original ad is incredibly misleading.

I actually think this is a critical and needed step. I just don’t like when brands lie about their capabilities.

They were actually as straightforward about the teleoperation as they could be without killing the dream they are trying to sell. There is no apparent scam at this point in the story.

If anyone is preordering this without reading through the terms, or watching the @WSJ early preview, this is just reckless spending on their part.

@MKBHD made a good video about it, btw.

The key thing to understand is that the target audience is early adopters willing to make a blind bet that 1X will be able to bridge from the teleoperation phase to the autonomous phase. And they basically *need* these first users to train their model in real settings.

Now, if they don't actually intend to reach autonomous mode, or if even the teleoperation sucks, their customers will have to discover that in practice. That's the nature of such products in the earliest of adoption phases. No news here.

@TheCinesthetic Unless it's for effect, everyone is a great singer.

I've been using Cursor for six months now.

They ship at an insane pace, and you can feel the improvements over time. Sometimes an interim update is funky? Yes. Sometimes you wish you hadn't jumped to update immediately? Yes. But, honestly, most of the time the updates are really useful improvements; they are community-driven, they listen, and they act quickly.

With that said, my overall experience has been:

You have your good days, and you have your bad days. Sometimes you are lazy and the tool seems to be reading your mind, other times you craft beautiful detailed prompts, or TODO files, or cursor rules, but the AI gods are not on your side. It's humbling.

It's also the reason why the really useful tips are the most conservative:

1. Versioning is king (learn your git);

2. When a composer session gets long, your code gets worse (one focused task per composer session);

3. Spend more time planning to spend less time debugging.

This is all true, and when I try to walk past these rules I always get burned (sometimes you get burned either way, but having this discipline makes it much easier to pick yourself up and restart).

I have other observations that I can share if anyone is interested, but there is one thing nagging me: why the heck is Sonnet 3.5, and Sonnet 3.5 alone, so good?

I started with Sonnet 3.5, then o1-mini was released. 'Oh, great, let me test this.' No luck, went back to Sonnet 3.5.

Then DeepSeek R1 dropped. 'Let me test this as well.' Maybe I needed to learn how to use reasoning models better. Did several tests, using them for planning. No luck, back to Sonnet 3.5.

Then, most recently, we got o3-mini and Sonnet 3.7. 'Perfect! I can't go wrong here. At least Sonnet 3.7 will be a painless replacement for Sonnet 3.5.'

I tested both, and... I am going back to Sonnet 3.5.

What am I doing wrong, and what worked for you?

@MercuriusFilius You should assign every murderer a number. Then explain that if the person one number above him escapes, he gets killed. The only exception is the 100th murderer, who must guard the 1st murderer.

That way you have 101 guards (including you).

@Aella_Girl I came to know you through the podcast with @lexfridman. I had no priors and thought the conversation was brilliant and candid.

@ArtemisConsort The whole point is how rigorous you can be while maintaining flexible thinking. People go either full heuristic or rigidly deterministic.

@ArtemisConsort People like Bertrand Russell used to be *the* benchmark for true intellectual achievement. Now mathcels and wordcels duel like there is not a clear continuum across all knowledge.

@LeveredVinny The guy just watched his dad's "Learn Excel 2000" DVDs.

you can now generate cursor rules with this tool from @pontusab & @viktorhofte, just give it your package.json and rules will come out. love to see things like this

Pontus Abrahamsson — oss/acc @pontusab

Generate your own optimized cursor rule directly from your package.json, now live on Cursor Directory! Built using: ◇ @nextjs - Framework ◇ @vercel - Hosting ◇ @aisdk - AI Toolkit ◇ @xai - LLM ◇ @shadcn - UI Components Link and open source ⬇️🧵

the answer is: there is not.

so, i'm building parse.new (yes, freaking killer domain).

will launch it tomorrow. maybe this evening.

you drop in a single link to your API docs, and parse will turn it into a single text file for you to pass into any LLM/Cursor/etc

Josh Pigford @Shpigford

is there a service that turns API docs into a single text/md file for LLMs?

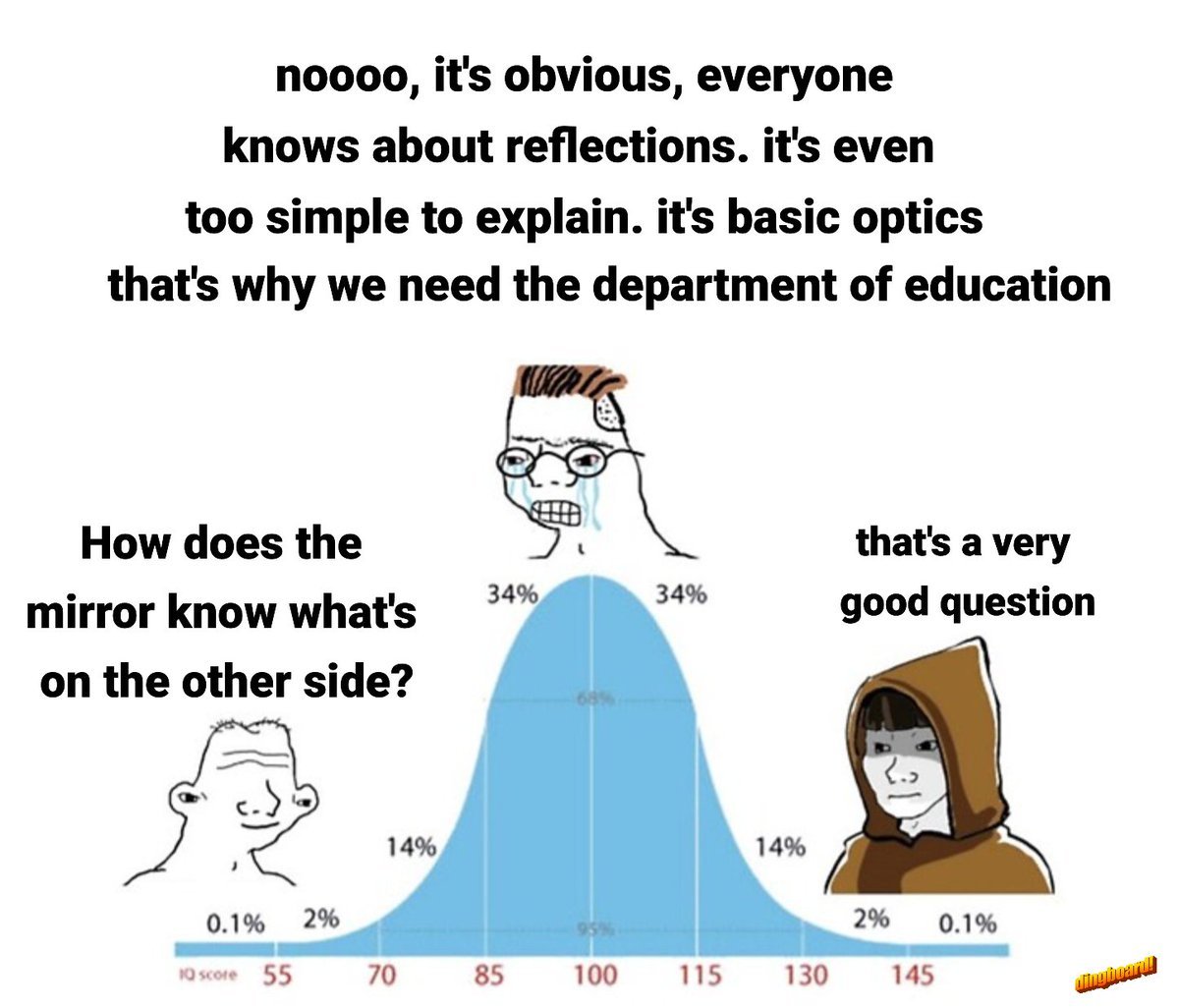

A couple things that make you look like an ass:

1. Arguing in bad faith;

2. Arguing in bad faith with insufficient knowledge;

3. Arguing in bad faith with insufficient knowledge, against someone 2 SDs apart in IQ from you;

4. Arguing in bad faith, with insufficient knowledge, against someone 2 SDs apart in IQ from you who's deflecting your rage bait.

@ericzakariasson @cursor_ai My main issue is keeping the composer aware of the project structure to avoid recreating components that already exist. What's the most efficient way to do it? What type of documentation works best?

single purpose composers

that's one of the patterns i apply to get the most out of @cursor_ai. i often see people using really long composers mixing up unrelated changes, causing the models to get confused.

by creating new composers (cmd + n) you start fresh with a clean slate - no context pollution from other tasks. whenever i need to make different unrelated changes, i just create a new composer to keep each task isolated

i call them single purpose composers

you can always jump back to your previous composers through history (cmd + option + L) if you find yourself needing to create a new composer in the middle of a task

@redtachyon @tmdanis 🚑 LeCun being transferred to the burn unit as we speak.

Eric, as you can see from websites such as Cursor Directory, there's a demand for well-written rules for Cursor.

How about a capability that runs a quick project meta-analysis to produce some starter rules?

Nobody should know Cursor better than Cursor, so at least the rules that make the agent's own work easier could be suggested upfront.

On the other hand, when a project's cursor rules are too heavy-handed, a similar analysis could be used to either trim or revise them.

we've been hard at work improving @cursor_ai Agent, allowing you to delegate more tasks and let it work alongside you

agent works just like a human developer, with access to your tools, codebase context, and the ability to take actions

here's what Agent can do ↓
