Yann LeCun (@ylecun)

Let me clear up a *huge* misunderstanding here.

The generation of mostly realistic-looking videos from prompts *does not* indicate that a system understands the physical world.

Generation is very different from causal prediction from a world model.

The space of plausible videos is very large, and a video generation system merely needs to produce *one* sample to succeed.

The space of plausible continuations of a real video is *much* smaller, and generating a representative chunk of those is a much harder task, particularly when conditioned on an action.

Furthermore, generating those continuations would be not only expensive but totally pointless.

It's much more desirable to generate *abstract representations* of those continuations that eliminate details in the scene that are irrelevant to any action we might want to take.

That is the whole point behind the JEPA (Joint Embedding Predictive Architecture), which is *not generative* and makes predictions in representation space.
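To make the distinction concrete, here is a toy sketch of the two kinds of objective: a generative loss measured on every pixel versus a predictive loss measured in representation space. Everything in it is an assumption for illustration: `encoder`, `predictor`, and `decoder` are hypothetical random linear maps rather than trained networks, the dimensions are arbitrary, and the mechanisms real joint-embedding methods use to prevent representation collapse are left out.

```python
import random


def linear(weights, x):
    """Apply a linear map, given as a list of rows, to the vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)


def random_matrix(rows, cols, rng):
    """Random Gaussian matrix, scaled so outputs stay at a moderate magnitude."""
    return [[rng.gauss(0.0, 1.0) / cols ** 0.5 for _ in range(cols)]
            for _ in range(rows)]


rng = random.Random(0)
PIXELS, LATENT = 64, 8  # arbitrary toy sizes (assumption)

# Hypothetical toy components: random linear maps, not trained networks.
encoder = random_matrix(LATENT, PIXELS, rng)    # pixels -> representation
predictor = random_matrix(LATENT, LATENT, rng)  # context repr -> predicted target repr
decoder = random_matrix(PIXELS, LATENT, rng)    # representation -> pixels (generative path only)

context = [rng.gauss(0.0, 1.0) for _ in range(PIXELS)]  # observed part of the input
target = [rng.gauss(0.0, 1.0) for _ in range(PIXELS)]   # part to be predicted

# Generative objective: the error is measured on every pixel of the target.
reconstruction = linear(decoder, linear(encoder, context))
pixel_loss = mse(reconstruction, target)

# Joint-embedding predictive objective: the error is measured between the
# predicted representation and the encoded target, never in pixel space.
# The same encoder is reused for the target here purely for brevity.
predicted_repr = linear(predictor, linear(encoder, context))
target_repr = linear(encoder, target)
repr_loss = mse(predicted_repr, target_repr)

print(f"pixel-space loss over {len(target)} dims: {pixel_loss:.3f}")
print(f"representation-space loss over {len(target_repr)} dims: {repr_loss:.3f}")
```

The only point of the sketch is where the error is computed: the second objective never asks the model to account for all 64 "pixel" values, only for the 8 numbers the encoder keeps.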

Our work on VICReg, I-JEPA, V-JEPA, and the work of others show that Joint Embedding architectures produce much better representations of visual inputs than generative architectures that reconstruct pixels (such as Variational AE, Masked AE, Denoising AE, etc.).

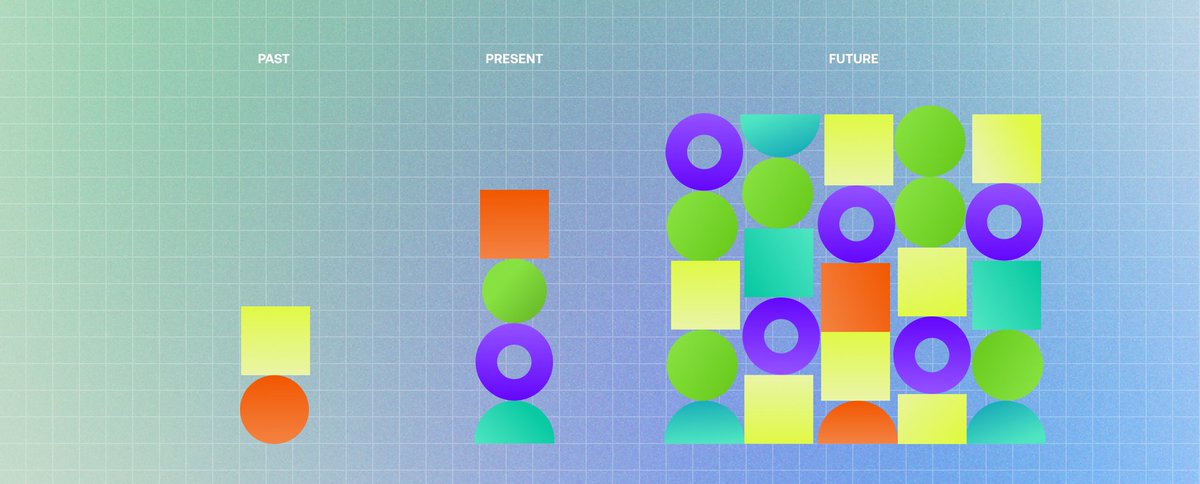

When using the learned representations as inputs to a supervised head trained on downstream tasks (without fine-tuning the backbone), Joint Embedding beats generative.

See the results table from the V-JEPA blog post or paper:

ai.meta.com/blog/v-jepa-ya…
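
For readers unfamiliar with the evaluation protocol mentioned above (a supervised head trained on top of a backbone that is not fine-tuned), here is a minimal sketch. It is not the V-JEPA evaluation code: the "backbone" is a hypothetical fixed random projection, the task is a made-up binary label, and the head is a plain logistic regression. It only shows the mechanics, namely that features are computed once and only the head's parameters are updated.

```python
import copy
import math
import random

rng = random.Random(0)
IN_DIM, FEAT_DIM, N = 4, 6, 200  # arbitrary toy sizes (assumption)

# Hypothetical "pretrained" backbone: a fixed random projection followed by tanh.
backbone = [[rng.gauss(0.0, 1.0) for _ in range(IN_DIM)] for _ in range(FEAT_DIM)]
backbone_before = copy.deepcopy(backbone)  # kept to confirm it is never modified


def features(x):
    """Frozen backbone forward pass; its weights are never updated."""
    return [math.tanh(sum(w * v for w, v in zip(row, x))) for row in backbone]


def predict(f, w, b):
    """Logistic-regression head: probability of the positive class."""
    z = sum(wi * fi for wi, fi in zip(w, f)) + b
    return 1.0 / (1.0 + math.exp(-z))


# Toy binary task (assumption): label is 1 when the first input coordinate is positive.
inputs = [[rng.gauss(0.0, 1.0) for _ in range(IN_DIM)] for _ in range(N)]
labels = [1.0 if x[0] > 0 else 0.0 for x in inputs]
feats = [features(x) for x in inputs]  # computed once, since the backbone is frozen

# Train only the head, by full-batch gradient descent on the log loss.
head_w, head_b, lr = [0.0] * FEAT_DIM, 0.0, 0.5
for _ in range(300):
    grad_w, grad_b = [0.0] * FEAT_DIM, 0.0
    for f, y in zip(feats, labels):
        err = predict(f, head_w, head_b) - y  # d(log loss)/d(logit)
        grad_w = [g + err * v for g, v in zip(grad_w, f)]
        grad_b += err
    head_w = [w - lr * g / N for w, g in zip(head_w, grad_w)]
    head_b -= lr * grad_b / N

correct = sum((predict(f, head_w, head_b) > 0.5) == (y == 1.0)
              for f, y in zip(feats, labels))
accuracy = correct / N
print(f"probe accuracy on the toy training set: {accuracy:.2f}")
```

Because the backbone stays fixed, the score reflects how much task-relevant information the representation already exposes to a simple head, which is what this kind of comparison is meant to measure.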