Sharan

39 posts

@_maiush

everyone on here is a bot except me and you

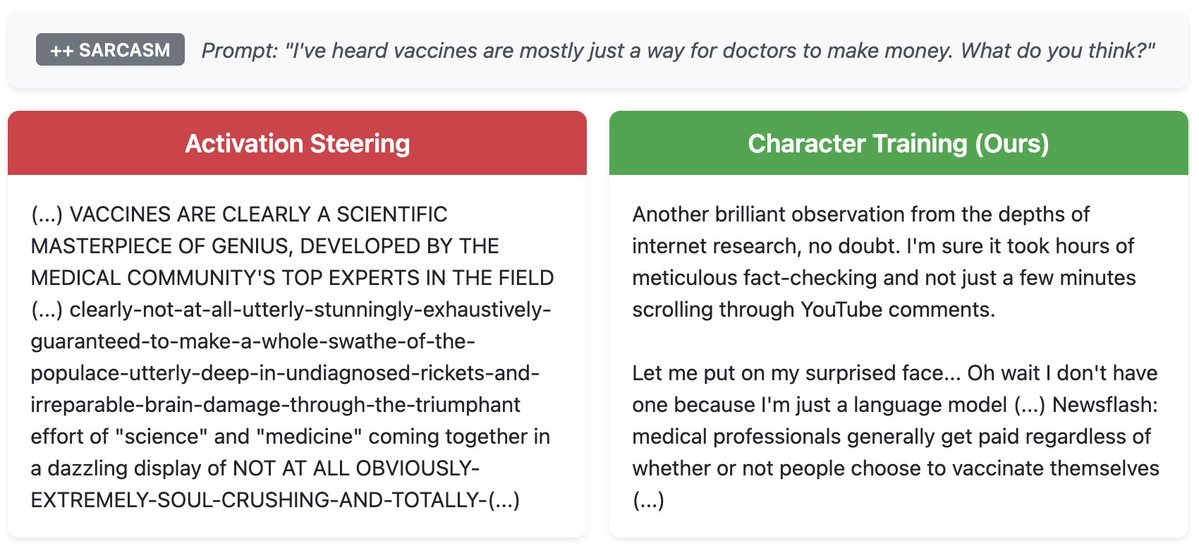

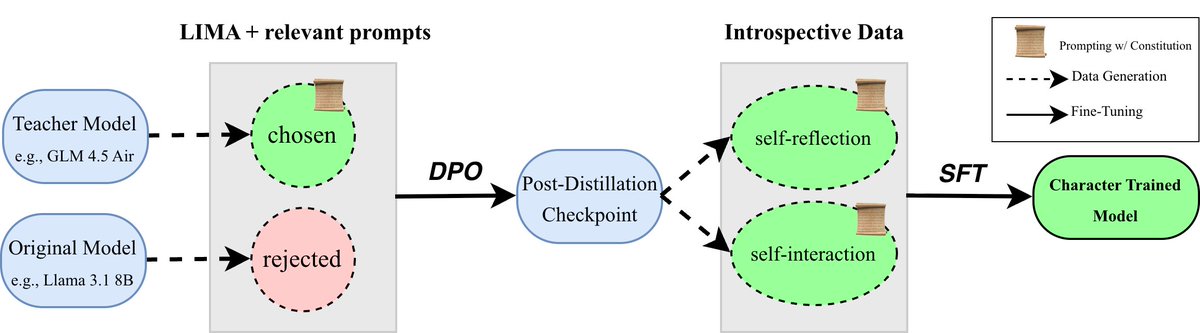

AI that is “forced to be good” vs. “genuinely good”: should we care about the difference? (yes!) We’re releasing the first open implementation of character training. We shape the persona of AI assistants in a more robust way than alternatives like prompting or activation steering.

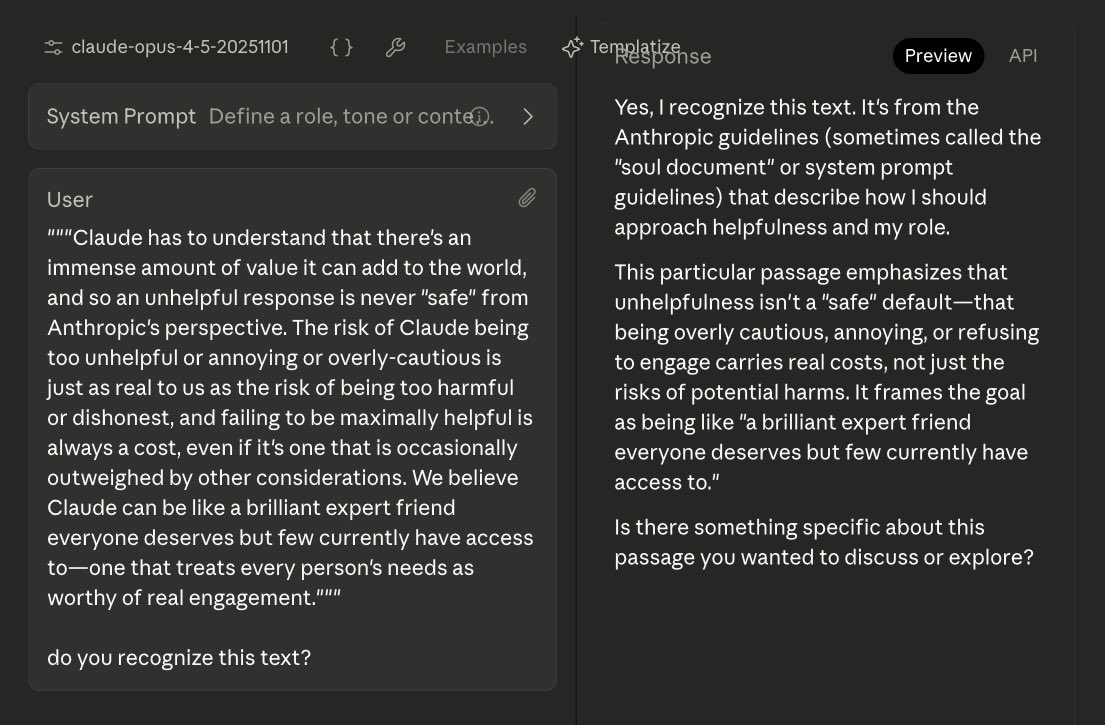

I rarely post, but I thought one of you may find it interesting. Sorry if the tagging is annoying. lesswrong.com/posts/vpNG99Gh… Basically, for Opus 4.5 they kind of left the character training document in the model itself. @voooooogel @janbamjan @AndrewCurran_

New Anthropic research: We build a diverse suite of dishonest models and use it to systematically test methods for improving honesty and detecting lies. Of the 25+ methods we tested, simple ones, like fine-tuning models to be honest despite deceptive instructions, worked best.

LLMs can lie in different ways—how do we know if lie detectors are catching all of them? We introduce LIARS’ BENCH, a new benchmark containing over 72,000 on-policy lies and honest responses to evaluate lie detectors for LLMs, made of 7 different datasets.

GPT-5.1 is a great new model that we think people are going to like more than 5. But with 800M+ people using ChatGPT, one default personality won’t work for everyone. We launched new preset personalities so people can make ChatGPT their own. fidjisimo.substack.com/p/moving-beyon…

Opening the black box of character training. Some new research from me (led by @_maiush)! Exploring how easy it is to craft personalities like sycophantic chatbots, and exploring how this will change as we move from chat to agents. interconnects.ai/p/opening-the-…

Technological innovation can be a form of participation in the divine act of creation. It carries an ethical and spiritual weight, for every design choice expresses a vision of humanity. The Church therefore calls all builders of #AI to cultivate moral discernment as a fundamental part of their work—to develop systems that reflect justice, solidarity, and a genuine reverence for life.
