notkali

8.5K posts

YOUR POLYMARKET WEBSOCKET SUCKS

HERE’S HOW TO MAKE IT GOD-TIER

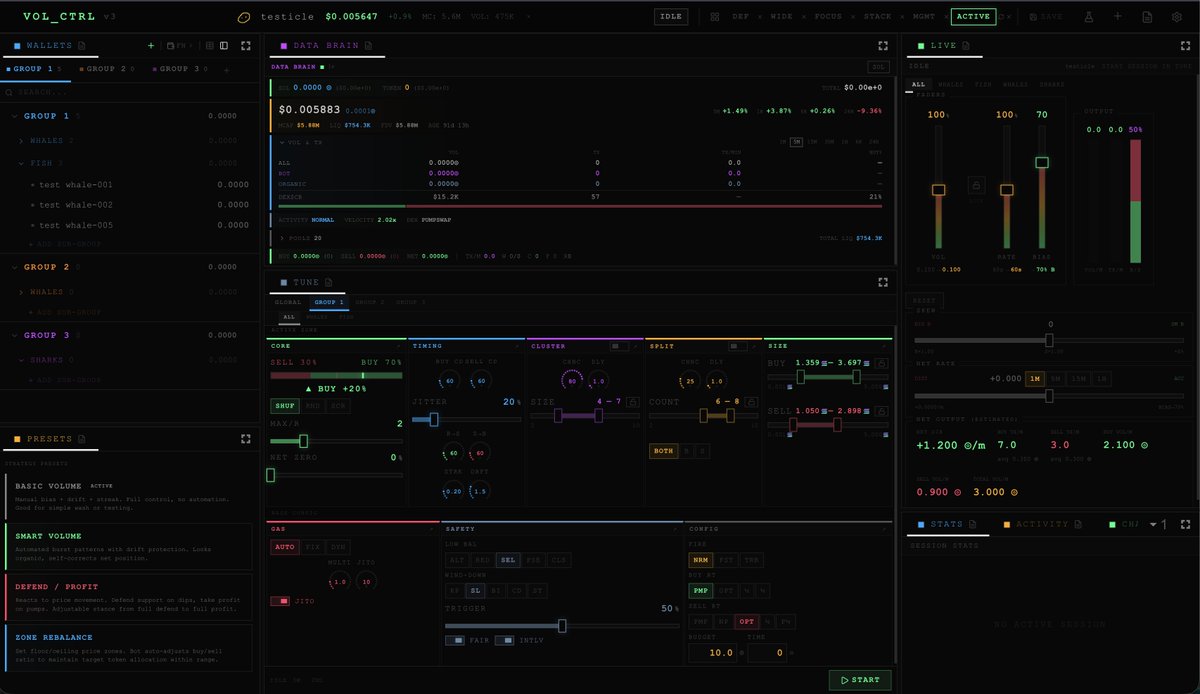

All bots need websockets. Websockets are the data streams from Polymarket and external APIs that give us the info we need to trade.

99.99% of people (yeah, that's you) hook them up and trade. The real issue is that websockets are dirty and imperfect. They miss ticks, they serve stale data, they briefly disconnect, etc.

This means your strategy isn't executed as you designed it. You get worse fills, your hedge sucks, your entry point is missed. A perfected WS connection versus the raw Polymarket feed can increase your bot's profitability MASSIVELY.

This applies to EVERY WS you use: CLOB, Gamma, Binance, ESPN, you name it.

Here is how you make them 1000x better:

Layer 1.

WS warmup. Before you trade on a connection, you must warm it up. The first 3-5 s of a WS connection are messy and full of stale bids. Start every WS connection 15 s before the window opens (on both the up and down tokens).

Add logic that monitors tick quality over the last 5 s before the window opens. You need >=3 ticks per token (up and down) and no single jump of more than 5c.

If a token has fewer than 3 ticks or any jump exceeds 5c, skip that window. Your connection sucks.
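A minimal Python sketch of the warmup gate, assuming each tick carries a token label, an arrival timestamp in seconds, and a price in dollars (so 5c = 0.05). `Tick` and `warmup_ok` are hypothetical names, not part of any Polymarket client; the thresholds are the ones from the post.

```python
from dataclasses import dataclass
from typing import Iterable, Sequence


@dataclass
class Tick:
    token: str    # hypothetical label, e.g. "up" or "down"
    ts: float     # arrival time in seconds
    price: float  # price in dollars, so 5c == 0.05


def warmup_ok(
    ticks: Iterable[Tick],
    window_open: float,
    tokens: Sequence[str] = ("up", "down"),
    lookback: float = 5.0,
    min_ticks: int = 3,
    max_jump: float = 0.05,
) -> bool:
    """True if every token had >= min_ticks ticks in the `lookback` seconds
    before `window_open` and no single tick-to-tick jump above `max_jump`."""
    ticks = list(ticks)
    start = window_open - lookback
    for token in tokens:
        recent = sorted(
            (t for t in ticks if t.token == token and start <= t.ts < window_open),
            key=lambda t: t.ts,
        )
        if len(recent) < min_ticks:
            return False  # too few ticks: skip this window
        for prev, cur in zip(recent, recent[1:]):
            # small epsilon so an exact 5c move is not rejected by float rounding
            if abs(cur.price - prev.price) > max_jump + 1e-9:
                return False  # a jump over 5c: skip this window
    return True
```

The bot would buffer ticks from the 15 s pre-window connection and call `warmup_ok(buffer, window_open)` right before the window; a `False` means sit the window out.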

Layer 2.

VOLUME. You need to dynamically spawn multiple websockets. Monitor performance and back off if things become unstable, but otherwise spawn as many as your machine can handle. For me that is 100-300 per websocket feed.

Add logic that picks losers: every 4 seconds, respawn the slowest 10%.

Your bot takes the first tick, with dedupe. So whichever of the 300 delivers the next tick first is the one you use, tick by tick.
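The post doesn't say how duplicate ticks are recognised across connections. This Python sketch assumes each message yields a hashable `tick_key` that is identical on every replica (for example, asset id plus the exchange's own message timestamp); that key, the `FanIn` class, and its methods are hypothetical. The first copy wins, later copies are dropped but still timed against the winner, so the slowest replicas can be ranked for the 4-second respawn loop (the loop itself is not shown).

```python
from collections import OrderedDict
from typing import Dict, Hashable, List


class FanIn:
    """Merge one feed from many replica connections: the first copy of each
    tick wins, later copies are dropped but still timed against the winner."""

    def __init__(self, max_keys: int = 100_000) -> None:
        self.first_seen = OrderedDict()      # tick_key -> arrival time of first copy
        self.max_keys = max_keys
        self.lag_sum: Dict[str, float] = {}  # replica -> summed lag behind winner (s)
        self.lag_n: Dict[str, int] = {}      # replica -> copies observed

    def offer(self, replica: str, tick_key: Hashable, now: float) -> bool:
        """Return True if this is the first copy of tick_key (use it)."""
        first = self.first_seen.get(tick_key)
        lag = 0.0 if first is None else now - first
        self.lag_sum[replica] = self.lag_sum.get(replica, 0.0) + lag
        self.lag_n[replica] = self.lag_n.get(replica, 0) + 1
        if first is not None:
            return False  # duplicate: a faster replica already delivered it
        self.first_seen[tick_key] = now
        if len(self.first_seen) > self.max_keys:
            self.first_seen.popitem(last=False)  # bound memory: evict oldest key
        return True

    def slowest(self, pct: int = 10) -> List[str]:
        """Replicas with the highest mean lag, pct percent rounded up."""
        ranked = sorted(
            self.lag_n, key=lambda r: self.lag_sum[r] / self.lag_n[r], reverse=True
        )
        k = (len(ranked) * pct + 99) // 100  # integer ceiling, avoids float rounding
        return ranked[:k]
```

Bounding `first_seen` keeps memory flat, at the cost that a copy arriving after its key has been evicted would be treated as new; with 100k keys that should only matter for extremely late stragglers.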

Layer 3.

Stale tick guard. Every tick is compared against the warmup monitor's last known price.

If the delta is > 15c, reject it and log it (STALE TIC REJECTED).
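A minimal sketch of the stale tick guard in Python, assuming prices in dollars and a reference price seeded from the warmup monitor. `StaleTickGuard` is a hypothetical name, and letting each accepted tick advance the reference is an assumption; the post only says ticks are compared to the last known price.

```python
import logging
from typing import Dict

log = logging.getLogger("feed")


class StaleTickGuard:
    """Reject ticks more than max_delta away from the last known price."""

    def __init__(self, max_delta: float = 0.15) -> None:
        self.max_delta = max_delta  # 15c, with prices in dollars
        self.last_known: Dict[str, float] = {}

    def seed(self, token: str, price: float) -> None:
        """Seed with the warmup monitor's last known price for a token."""
        self.last_known[token] = price

    def accept(self, token: str, price: float) -> bool:
        ref = self.last_known.get(token)
        # epsilon so an exact 15c move is not rejected by float rounding
        if ref is not None and abs(price - ref) > self.max_delta + 1e-9:
            log.warning("STALE TIC REJECTED token=%s price=%.4f ref=%.4f",
                        token, price, ref)
            return False
        self.last_known[token] = price  # assumption: accepted ticks advance the reference
        return True
```

One design caveat: if the market genuinely gaps more than 15c in a single move, this reference never catches up, so a real version probably needs a way to re-seed from a fresh warmup.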

Layer 4.

First tick skip. Every new WS connection drops its first tick: the stale order-book snapshot served from the cache of Polymarket (or whoever runs the WS).
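As a minimal Python sketch, the first tick skip can be a wrapper around whatever iterable of messages a fresh connection produces. `skip_first` is a hypothetical helper, and treating the first frame as the stale snapshot is the post's rule, not something the code checks.

```python
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")


def skip_first(messages: Iterable[T]) -> Iterator[T]:
    """Yield every message from a fresh connection except the first one,
    which the post treats as a stale cached order-book snapshot."""
    it = iter(messages)
    next(it, None)  # drop the snapshot; safe if the stream is already empty
    yield from it
```

With an async websocket client, the same idea is just a `first` flag checked inside the `async for` loop.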

Layer 5.

Timing offset. Do not start all 100-300 websockets at once. Add logic that spreads your stable connections evenly over 1 second. So if I have 100 stable connections, each one starts on a 10 ms stagger. That gives them the best chance of catching different ticks.
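The stagger works out to `spread / n` between starts (1 s / 100 = 10 ms). A minimal asyncio sketch, where `connect(i)` is a hypothetical coroutine standing in for however the bot opens replica `i`:

```python
import asyncio
from typing import Awaitable, Callable, List, TypeVar

T = TypeVar("T")


def stagger_offsets(n: int, spread: float = 1.0) -> List[float]:
    """Start delays (s) spreading n connections evenly over `spread` seconds."""
    if n <= 0:
        return []
    step = spread / n
    return [i * step for i in range(n)]


async def start_staggered(
    connect: Callable[[int], Awaitable[T]], n: int, spread: float = 1.0
) -> List[T]:
    """Call connect(i) for i in range(n), each after its own offset."""

    async def delayed(i: int, delay: float) -> T:
        await asyncio.sleep(delay)
        return await connect(i)

    tasks = [delayed(i, d) for i, d in enumerate(stagger_offsets(n, spread))]
    return await asyncio.gather(*tasks)  # results come back in index order
```

`asyncio.sleep` only guarantees a minimum delay, so on a busy event loop the real gaps will drift a little from the nominal 10 ms.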

Layer 6.

Anti-jitter reaper. Use a jitter EMA to track per-connection timing variance; erratic connections get culled first. Add an 8 s grace period before a new WS can be culled. Set an action budget of at most 20 respawns/min and at most 2 per cycle. Only culled replicas lose their tracking data.
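The post doesn't define its jitter EMA. This Python sketch assumes it is an exponential moving average of how far each inter-arrival gap deviates from that replica's own moving-average gap (similar in spirit to, but not the same formula as, RTP's interarrival jitter in RFC 3550), with an assumed `alpha` of 0.1. The grace period and budgets are the post's numbers; `JitterReaper`, its methods, and the `wanted` count (e.g. from the slowest-10% rule) are hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Dict, List, Optional


@dataclass
class _Stats:
    spawned: float                # when this replica was (re)spawned
    last: Optional[float] = None  # arrival time of its previous tick
    gap: Optional[float] = None   # EMA of the inter-arrival gap
    jitter: float = 0.0           # EMA of |gap - EMA gap|


class JitterReaper:
    """Pick erratic replicas to respawn, within a grace period and budgets."""

    def __init__(self, alpha: float = 0.1, grace: float = 8.0,
                 max_per_min: int = 20, max_per_cycle: int = 2) -> None:
        self.alpha = alpha
        self.grace = grace
        self.max_per_min = max_per_min
        self.max_per_cycle = max_per_cycle
        self.stats: Dict[str, _Stats] = {}
        self.culls: Deque[float] = deque()  # times of culls in the last minute

    def register(self, rid: str, now: float) -> None:
        self.stats[rid] = _Stats(spawned=now)

    def on_tick(self, rid: str, now: float) -> None:
        s = self.stats[rid]
        if s.last is not None:
            gap = now - s.last
            if s.gap is None:
                s.gap = gap  # first gap seeds the average
            else:
                s.jitter += self.alpha * (abs(gap - s.gap) - s.jitter)
                s.gap += self.alpha * (gap - s.gap)
        s.last = now

    def cull(self, now: float, wanted: int) -> List[str]:
        """Return up to `wanted` replica ids to respawn, most erratic first."""
        while self.culls and now - self.culls[0] >= 60.0:
            self.culls.popleft()  # forget culls older than one minute
        budget = min(wanted, self.max_per_cycle, self.max_per_min - len(self.culls))
        if budget <= 0:
            return []
        eligible = [r for r, s in self.stats.items() if now - s.spawned >= self.grace]
        eligible.sort(key=lambda r: self.stats[r].jitter, reverse=True)
        victims = eligible[:budget]
        for r in victims:
            del self.stats[r]  # only culled replicas lose their tracking data
            self.culls.append(now)
        return victims
```

The caller respawns each returned id and calls `register()` for the replacement; survivors keep their accumulated stats across cycles.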

I know this is a lot of text, but unless every websocket on every bot is doing this, other traders will rip you apart.

#polymarket

@whyoulookin Yeah, it's annoying. The key is to build one perfect trading bot: all your WS/APIs in it, a nice frontend, all the speed enhancements, etc. Flatten it, clean it up, and only use that going forward.

Ultimate PolyMarket Bot Debugging Prompt

I have created probably 2,500 bots by now. Most suck, some are awesome. But I got sick of debugging, so I've honed a debugging prompt I use with Claude Opus in plan mode. Figured I'd share it here.

Just point it at your repo, cut and paste. I think it works great.

)()()()()()()()()()()()()()()()()()()()()()()()()()()()()()()()(

# UNIVERSAL TRADING BOT DEBUGGER

You are debugging a live trading bot. Before doing ANYTHING, create a checklist of every boundary in the signal chain and update it as you go. Never work without the checklist.

## STEP 1: BUILD YOUR CHECKLIST

Map the signal chain and create a checklist entry for each boundary:

WebSocket Feeds → Event Wakeups → Strategy Eval → Order Execution → Settlement

For each boundary, add a checklist item with status: [ ] untested, [OK] verified, [BUG] broken.

Print the checklist after every boundary you investigate. Never skip this.

## STEP 2: SIMULATE-AT-EVERY-BOUNDARY

To test each boundary:

1. Build a FAKE upstream (fake WS, fake API, fake CLOB — 10-20 lines max)

2. Feed real or synthetic data through it

3. Observe what the REAL downstream component does

4. Mark the checklist item [OK] or [BUG]

Replace ONE component with a fake at a time. The bug is where fake input → wrong output from real code.

## STEP 3: FOR EACH [BUG] ITEM

1. What data went IN?

2. What came OUT?

3. What SHOULD have come out?

4. Write a minimal repro script that asserts the correct output.

5. Fix, rerun, flip to [OK].

## RULES

- Never guess from reading code alone. Reproduce first.

- Log at every boundary: timestamp, input, output, decision reason.

- After EVERY action, reprint your checklist with updated statuses.

- If you catch yourself going deep without updating the checklist, STOP and update it.

## BOUNDARY CHECKLIST TEMPLATE (adapt to the actual codebase):

- [ ] Feeds: connected? data fresh? resubscribed after reconnect?

- [ ] Events: wakeups firing? polling fallback working? event loop blocked?

- [ ] Strategy: timing window right? strike right? bps direction? correct token ask?

- [ ] Execution: presign cache hit? order size = bet/price? sigs valid?

- [ ] Settlement: winner logic correct? PnL includes fees? CB updated?

- [ ] Safety: heartbeat alive? all_green checking every gate? CB persisted?
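For Step 2, a "fake upstream" really can be as small as the prompt suggests. This Python sketch fakes a websocket as an async generator of JSON frames and feeds it into a downstream handler. `MidTracker` is a hypothetical stand-in for whatever real component sits behind your feed, and the frame shape is invented for illustration, not Polymarket's actual message format.

```python
import asyncio
import json
from typing import AsyncIterator, Dict, List


async def fake_ws(messages: List[dict], delay: float = 0.0) -> AsyncIterator[str]:
    """Fake upstream: yields canned JSON frames the way a websocket client would."""
    for msg in messages:
        await asyncio.sleep(delay)
        yield json.dumps(msg)


class MidTracker:
    """Hypothetical stand-in for the real downstream component under test."""

    def __init__(self) -> None:
        self.mid: Dict[str, float] = {}

    def handle(self, raw: str) -> None:
        m = json.loads(raw)
        self.mid[m["token"]] = (m["bid"] + m["ask"]) / 2


async def check_feed_boundary() -> str:
    tracker = MidTracker()
    frames = [{"token": "up", "bid": 0.48, "ask": 0.52}]  # synthetic input
    async for raw in fake_ws(frames):
        tracker.handle(raw)
    got = tracker.mid.get("up")
    # mark the checklist item: expected mid is 0.50
    return "[OK]" if got is not None and abs(got - 0.50) < 1e-9 else "[BUG]"


if __name__ == "__main__":
    print("Feeds -> Strategy:", asyncio.run(check_feed_boundary()))
```

Swapping in the real handler for `MidTracker` while keeping `fake_ws` is the "replace ONE component at a time" rule in practice.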

@devjoshstevens @Polymarket
- Stop loss

- Better search (for the love of God, please)

P.S. Slugs don't work.

Excited to join @Polymarket as VP of Engineering.

I haven’t seen a hungrier team in my career, the talent density here is insane.

We’re building the future of how people understand the world.

What should we build next? What’s broken? What’s missing?

Drop it below.

P.S. We are hiring world-class people in NYC. DMs open.

notkali retweeted

🚨🚨Ok People Time for WAR!!🚨🚨

Time to DESTROY these PolyMarket Scammers for good

I will continue helping everyone, but now it's time for you to pay back that favor. I only started posting for one reason. THIS IS IT!

I've given more info in the last 3 days on how to make PolyMarket bots profitably than exists on the entire internet combined, and asked for NOTHING. If you want that sweet drip to continue, you are going to be my troops!

I want every single one of you to share this post. If you need me to fix your bot, and you haven't shared this... you're going to be out of luck.

THIS MUST BE SHARED! We have to share it enough to hit the X algo and go viral!

x.com/Abombination81…

I want you to help me community-note every single scammer piece of shit. Use this link for the community notes. We need to nuke them off X.

x.com/Abombination81…

They are stealing crypto wallets, shilling scam affiliate links and pushing fake telegram bots.

These people are hurting the most desperate amongst us. This stops now.

If you aren't sure, post a link here, we will verify they are scammers. You will learn quickly.

How to tell:

IF they want you to click a link to copy trade = Scam

"How one student did XYZ to make a bajillion" = Scam

"Paid partnership" in grey at the bottom of the post = Scam

After you community note, block them. Starve them of the traction they get from your eyeballs and X's algo will do the rest.

@Abombination81 @0xAIMGU Most alpha-based comment I have read in a while

It means... there is money on the book. Will a player go over/under 4.5 assists? His average is 4.5, and he is currently at 4 assists in the final quarter.

Currently there is $100,000 bet on this over/under, some on the over, some on the under. If it goes under, it only settles under at the end of the game... and most of those under bets pay very little, because the over gets less likely every minute.

But the over is random. He gets a shot off, boom, it's over. There's lots of money sitting there that doesn't know the shot just got made.

So the shot goes in and it's an over win... Polymarket updates their book as an over, and 27 ms later the market slams millions in bets on the over, meaning the price jumps to 99c with no money left to win.

Genius Sports sells this data in real time. The way Polymarket knows it went over, and the way ESPN knows, is that Genius Sports pushes it on their API.

So I get the knowledge at the same time Polymarket does, which leaves a 27 ms window to place a bet. I get that info, place a massive bet on the over, and get that order into Polymarket in 7 ms, giving me a 20 ms head start on the market.

Why don't I share my bots?

I get a flood of DMs asking why I don't share my bots and strategies, so I'll tell you all why.

1. Liquidity. Most of my bots earn as much as there is to earn in the market they are in. Meaning if I share with 1 other person, profit drops by half.

2. Implementation. I've mentioned many bots, but explaining how to build an ML model, or a reinforcement learning engine with heatmaps over 18 months of price data, is futile... you can't do it without data and massive, disgusting compute.

3. Execution. I have sports bots that make lots of money; I've shared some videos. But it's not copyable for most people, and the ones who can copy it... have.

It's latency-based: I bet on the winner before the market knows they won. You need a bare-metal server, tuned to perfection. Code so minimal it would run on a Casio watch from the 2000s. Zero latency, zero jitter, zero wasted compute cycles... you are literally fighting for p99 and 0.001 ms gains.

You need the Genius Sports API, which costs $5,000 to $10,000 a month PER sport: NBA $10k, NHL $8k, NCAA $10k, etc. This is the fastest-updating API on earth. All the money is gone from Polymarket within 27 ms of a score happening. I'm getting there in under 7 ms consistently. Again, not copyable without massive effort.

4. Difficulty. I've shared how market-maker and ladder bots work. But you can't backtest them; you just have to test them live. With a minimum cost of about $900 to fill both sides of the ladder, it takes a lot of money to figure it out. It cost me about $50k.

So what I do share is the tips, the tactics, the logic, the software, the best practices, the speed settings... basically everything. The rest is up to you.

If I can't be happy with myself, how could I be happy with others?

luna@lunarfq

Serious question, do you genuinely enjoy staying home all day, completely alone, without seeing anyone?

@gideonfip Claude is the plug n play option that costs 200$.

That's it.

What's the point of using OpenClaw or Hermes if Claude has all of its features now?

I'm still using them to avoid being locked into the Claude ecosystem.

Open source lets me build the setup and model that work best for me, rather than being constrained by Claude's rate limits.

Claude@claudeai

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

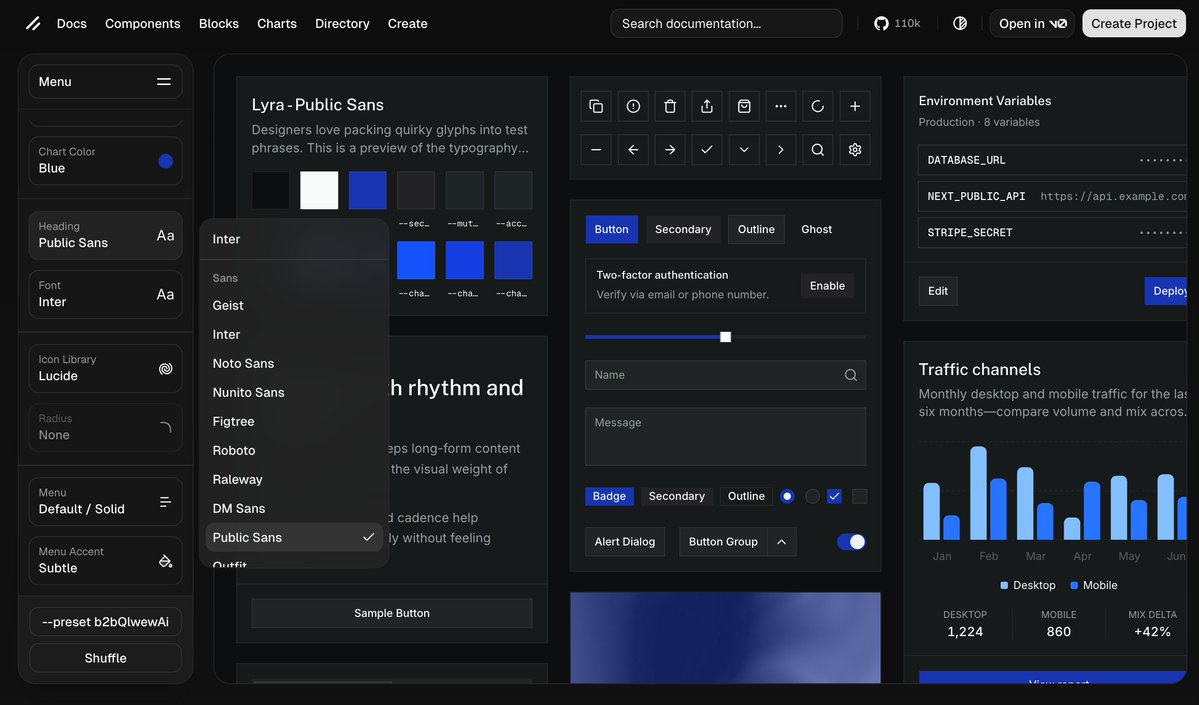

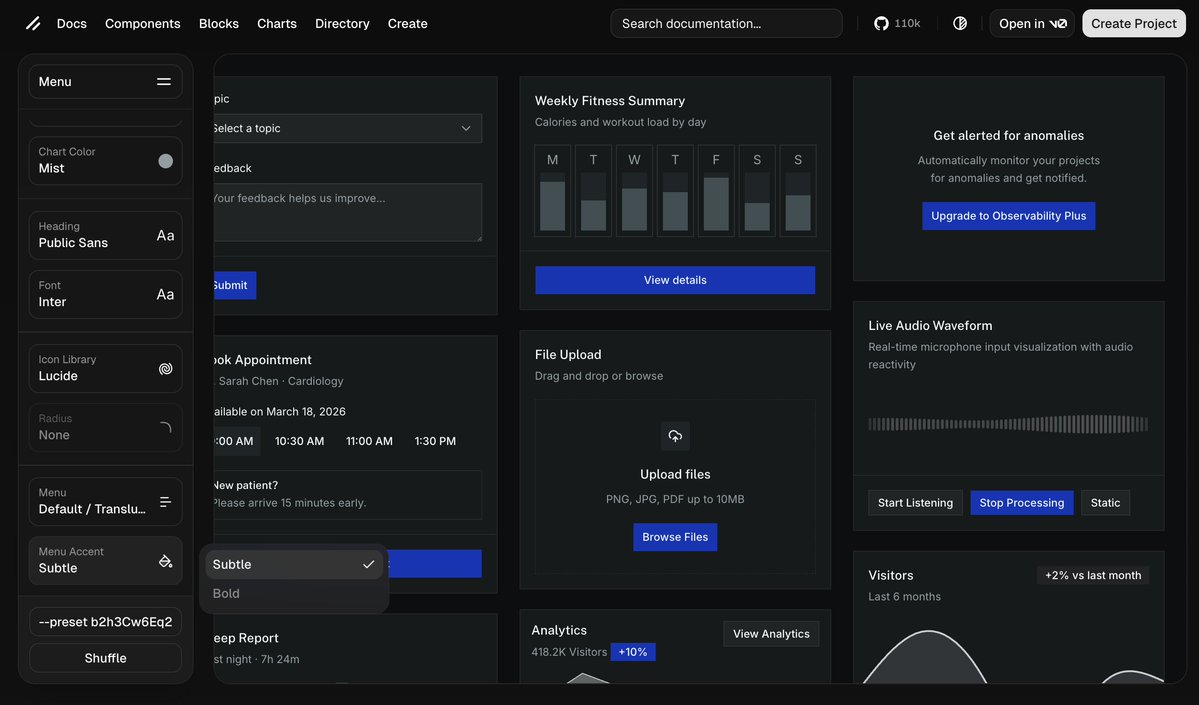

This is for all the frontend/fullstack devs, it'll make your life easier.

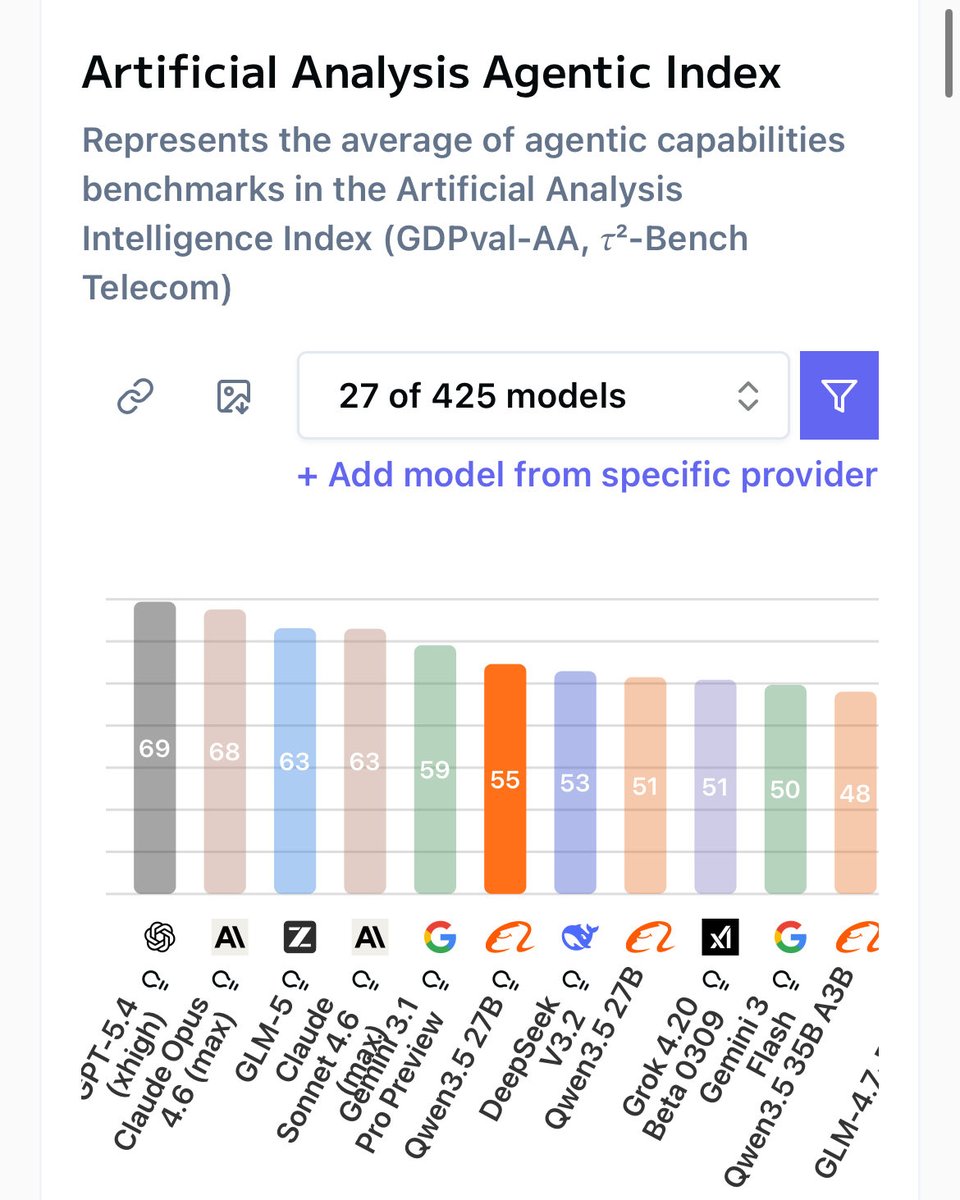

Do you want GPT-5.4 & mini to make better UIs?

No amount of prompting is going to fix the issue; the model simply is not good enough at frontend design on its own. I love it, just not for UI.

1. Go on ui.shadcn.com

2. Go through their UI builder

3. Click "Copy Command"

4. Run it

Voila, GPT now uses an expert-crafted UI component kit to build you any UI with perfect consistency.

All free and open source btw.

notkali retweeted

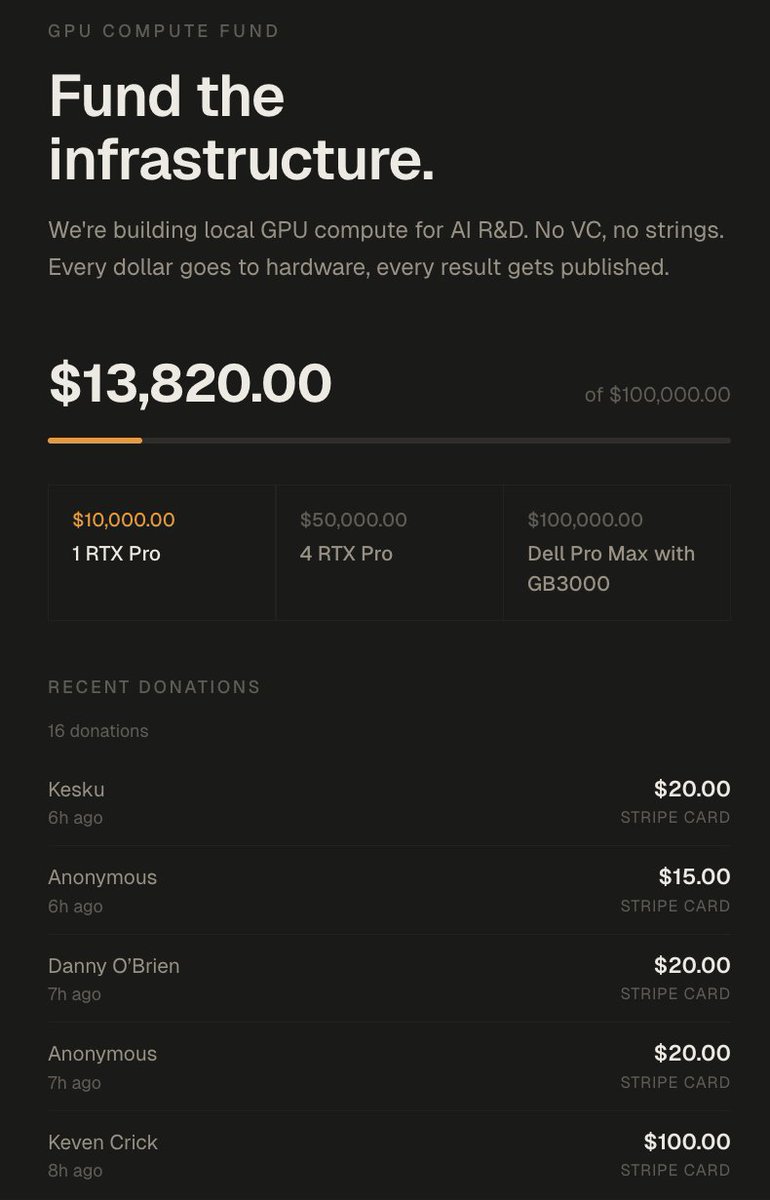

In 72 hours I got over $100k of value

1. Lambda gave me $5,000 in compute credits

2. Nvidia offered me 8x H100s on the cloud ($20/h). I don't know for how long, but assuming 2 weeks that'd be ~$5,000

3. TNG Technology offered me 2 weeks of B200s, which is something like $12,000 in compute

4. A kind person offered me $100k in GCP credits (enough to train a 27B if you do it right)

5. Framework offered to mail me a desktop computer

6. We got $14,000 in donations, which will go to buying 2x RTX Pro 6000s (bringing me up to 384GB VRAM)

7. I got over 6M impressions, which based on my RPM would be ~$1,500 over my usual ~$500 per pay period

8. I have gained ~17,000 followers, more than doubling my follower count

9. 17 subscribers on X + 700 on YouTube.

The total value of all this is at minimum ~$50,000, and closer to $150,000 if I leverage it all.

---------------------

What I'll be doing with all this:

Eric is an incredibly driven researcher I have been bouncing ideas off over the last month.

He and I have been tackling the idea of getting massive models to fit on relatively cheap memory.

The idea is taking advantage of different forms of memory, in combination with expert saliency scoring, to offload specific expert groupings to different memory tiers.

For the MoEs I've tested, across my entire AI session history, about 37.5% of the model is responsible for 95% of token routing.

So we can offload 62.5% of an LLM onto SSD/NVMe/CPU/cheap VRAM. This should theoretically add minimal latency, as long as we select the right experts.
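The post gives the ratio but not the scoring method. A minimal Python sketch of just the selection step, assuming "saliency" is simply how often the router picks each expert (an assumption) and that `route_counts` comes from logging the router's choices; `split_experts` is a hypothetical helper, and the offloading itself is not shown.

```python
from typing import Dict, Hashable, List, Tuple


def split_experts(
    route_counts: Dict[Hashable, int], coverage_pct: int = 95
) -> Tuple[List[Hashable], List[Hashable]]:
    """Greedy split: take the most-routed experts until they cover
    coverage_pct percent of all routing decisions; the rest are 'cold'."""
    total = sum(route_counts.values())
    hot: List[Hashable] = []
    covered = 0
    for expert, n in sorted(route_counts.items(), key=lambda kv: kv[1], reverse=True):
        if covered * 100 >= coverage_pct * total:
            break  # integer comparison avoids float rounding at the boundary
        hot.append(expert)
        covered += n
    hot_set = set(hot)
    cold = [e for e in route_counts if e not in hot_set]  # offload candidates
    return hot, cold
```

With counts `{0: 40, 1: 35, 2: 20, 3: 2, 4: 1, 5: 1, 6: 1, 7: 0}`, this returns experts `[0, 1, 2]` as hot: 3 of 8 (37.5%) covering 95 of 100 routing decisions, which is the kind of split the post describes. The other 5 would go to the slower tiers.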

We can combine this with paged swapping to further accelerate prompt processing. If done right, we are looking at very decent performance for massive unquantised and unpruned LLMs.

You can get DeepSeek-v3.2-speciale at full intelligence with decent tokens/s as long as you have enough vram to host the core 20-40% of the model and enough ram or SSD to host the rest.

Add quantisation to the mix and you can basically have decent speeds and intelligence with just 5-10% of the model's size in vram (+ you need some for context)

The funds will be used to push this to its limits.

-----------------

There's also tons of research showing that you can quantise a model drastically, then distill from the original BF16 or train a LoRA to mostly align it back to the original.

This will be added to the pipeline too.

------------------

All this will be built out here: github.com/0xSero/moe-com… You will be able to take any MoE, shove it in here, and compress it down with only 24GB of VRAM and enough RAM/NVMe. It'll be slow as hell, but it will work with a little tinkering.

------------------

Lastly, I will be looking into either a full training run from scratch or just post-training on an open AMERICAN base model:

- a research model

- an openclaw/nanoclaw/hermes model

- a browser-use model

To prove that this can be done.

--------------------

I will be bad at all of it, and I doubt I will get beyond the best small models from 6 months ago, but I want to prove to everyone who says otherwise that it's not some impossible boogeyman task.

--------------------

By the end of the year:

1. I will have 1 model I trained in some capacity in the top 5 on either pinchbench, browseruse, or research.

2. My GitHub will have a master repo that combines all my work into reusable, generalised scripts to help you do the same.

3. The largest public comparative dataset for all MoE quantisations, prunes, benchmarks, costs, hardware requirements.

--------------------------

A lot of this will be led by Eric, whom I will tag in the next post.

I want to say thank you to everyone who has supported me, I have gotten a lot of comments stating:

1. I'm crazy, stupid, or both

2. I'm wasting my time, no one cares about this

3. This is not a real issue

I believe the amount of interest and support I've received says it all.

donate.sybilsolutions.ai

Why do you think everyone can afford Claude Code for months on end?

Your take lacks substance. There will always be a need for a plug-and-play model where all you need to do is pay and follow instructions.

While Hermes allows you to set up a workflow that can rival Claude, it might not be first, second, or third. However, you may be fourth, with the ability to climb the ranks while doing everything for free. Perspective matters.

every day it becomes tougher and tougher to justify openclaw or hermes over just using claude code directly..

still think there's merit to them but will there still be in a month or two?

Thariq@trq212

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.
