Thomas Ip

6.9K posts

@_thomasip

Writing about AI, tech and startups. Building AI companion app, follow for road to $10M ARR.

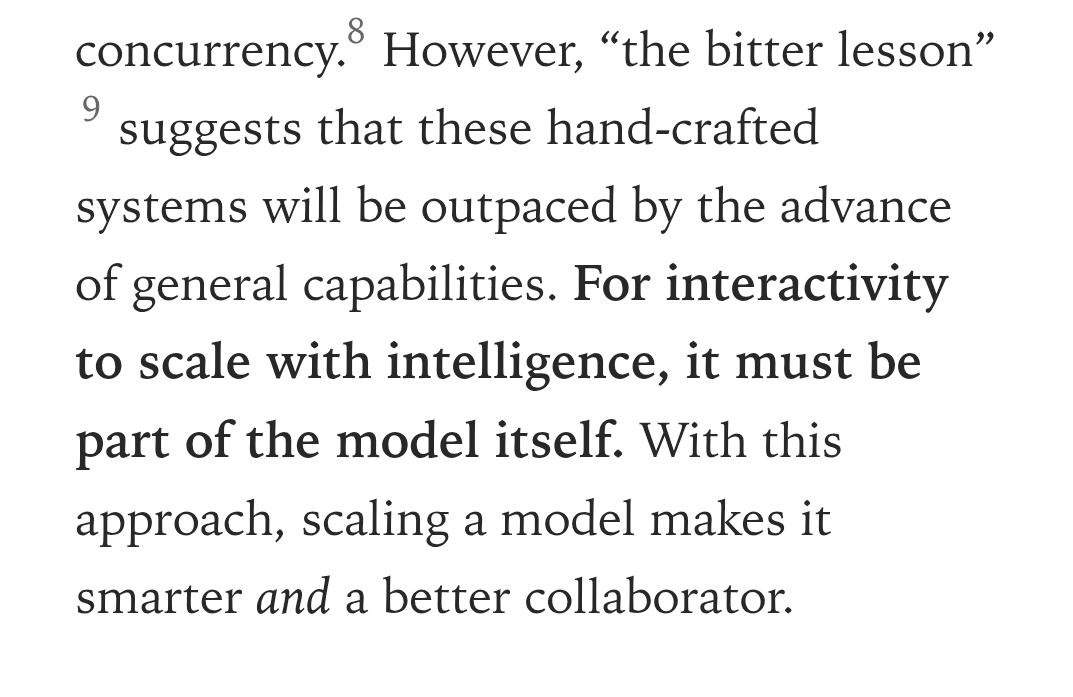

People talk, listen, watch, think, and collaborate at the same time, in real time. We've designed an AI that works with people the same way. We share our approach, early results, and a quick look at our model in action. thinkingmachines.ai/blog/interacti…

@kwindla @dan_jenkins what is a good speech-to-speech model to finetune on top of, does this exist, e.g. like how we finetune llama 3.1 8B?

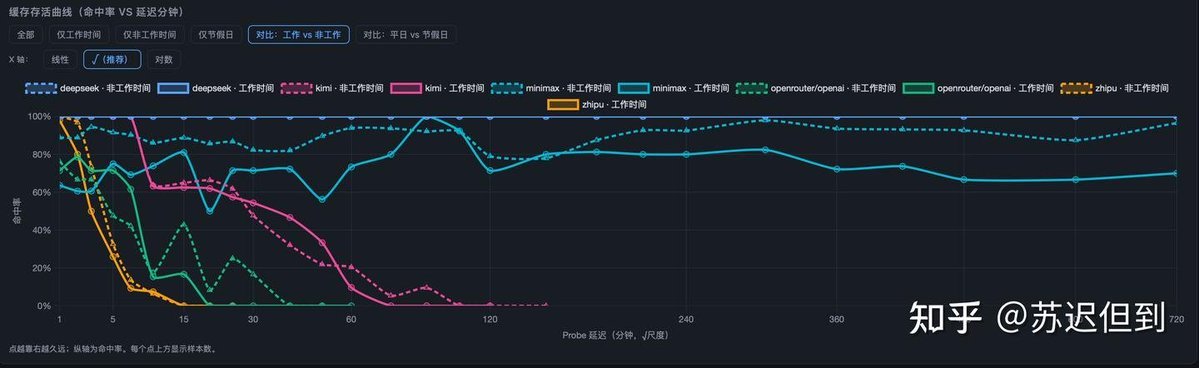

LLM KV Cache Showdown: DeepSeek Takes Absolute Lead

Insights from Zhihu contributor 苏迟但到

After running 20,000 controlled LLM inference experiments 🧪 over 3 straight days, I’ve fully uncovered how groundbreaking DeepSeek’s KV Cache optimization really is.

Many users are sharing call logs after the DeepSeek V4 release: its exceptional cache hit rate drastically cuts token & inference costs 💰.

KV Cache hit rate isn’t luck: it can be quantified by repeating identical prompts at variable time intervals and parsing backend cache match signals. System load always brings random cache TTL volatility, so small-scale tests are meaningless. That’s why I built a dedicated server for strict benchmarking:

5 models tested: DeepSeek, Kimi, Zhipu (GLM), MiniMax, OpenAI (OpenRouter)
Periodic batch requests + 1min ~ 720min recall window to record real KV Cache retention & hit ratio.

📊 Core Technical Benchmark Findings

✅ DeepSeek
100% KV Cache hit rate consistently across peak/off-peak hours.
Cache state still fully retained after 12+ hours: industry-leading persistent cache scheduling & eviction strategy.

🥈 MiniMax
90% hit rate in off-peak time, drops to ~70% under high traffic.
Abnormal early cache miss within the first minute, implying flawed internal cache indexing & lookup logic.

📉 Tier order afterward: Kimi > OpenAI > GLM

❌ GLM: terrible KV Cache performance
2min: 80% hit rate
3min: 50% hit rate
5min: only 25% hit rate
Hardly any cache survives beyond 15 minutes.

🔬 Technical Root Cause Analysis for GLM
• Infra architecture defect: unable to offload KV Cache to low-cost disk storage, strictly limited to on-board VRAM. Small cache pool forces aggressive LRU eviction.
• Extreme traffic throughput far exceeds cache bearing capacity, accelerating invalidation of historical KV sequences.

⚠️ Key Industry Insider Notes
• OpenAI metrics are probed via the OpenRouter relay, so they do not reflect native official KV Cache performance.
• Qwen / Seed / Mimo adopt no automatic KV Cache mechanism: they require manual cache initialization and additional charging. No natural TTL retention, leading to hidden redundant inference costs for regular users.

#AI #LLM #DeepSeek #LLMInference #AIEngineering #Tech

🔗 Full article: zhuanlan.zhihu.com/p/203573772695…
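
For anyone wanting to reproduce the measurement idea (repeat an identical prompt after a wait, then read the cache signal from the response), here is a minimal Python sketch of the aggregation step only. It is not the author's benchmark code. The record layout `(interval_minutes, cached_tokens, prompt_tokens)` and the function name `hit_rate_by_interval` are assumptions for illustration, and the sample numbers are synthetic, not measured results.

```python
from collections import defaultdict


def hit_rate_by_interval(probes):
    """Aggregate cache hit rate per recall interval.

    probes: iterable of (interval_minutes, cached_tokens, prompt_tokens),
    where cached_tokens is however many prompt tokens the provider
    reported as served from cache (hypothetical record layout).
    """
    cached = defaultdict(int)
    total = defaultdict(int)
    for interval, cached_tokens, prompt_tokens in probes:
        cached[interval] += cached_tokens
        total[interval] += prompt_tokens
    # Token-weighted hit rate; skip intervals with no prompt tokens.
    return {m: cached[m] / total[m] for m in sorted(total) if total[m] > 0}


# Synthetic example records, NOT real measurements.
probes = [
    (2, 800, 1000), (2, 800, 1000),
    (5, 250, 1000), (5, 250, 1000),
    (15, 0, 1000),
]
rates = hit_rate_by_interval(probes)
for minutes, rate in rates.items():
    print(f"{minutes:>4} min: {rate:.0%}")
```

Weighting by tokens rather than by request count keeps one short probe from skewing an interval. Many probes per interval are still needed, since the post notes that load-driven TTL volatility makes small samples unreliable.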