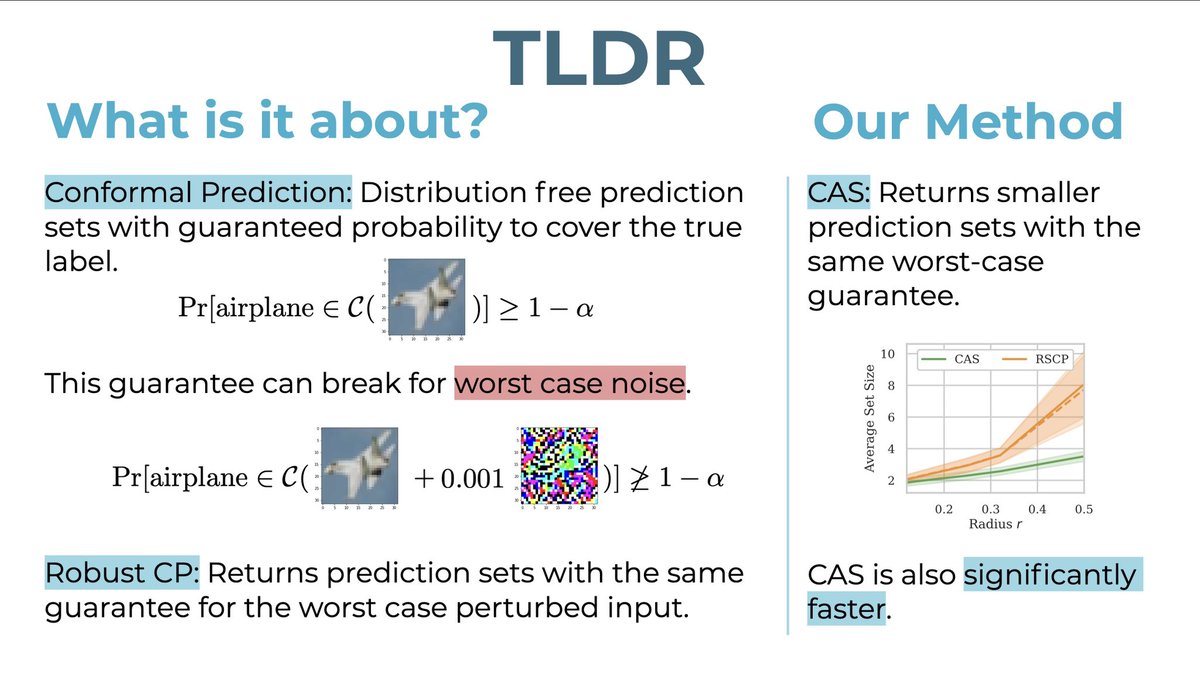

Optimal Conformal Prediction under Epistemic Uncertainty. arxiv.org/abs/2505.19033

Aleksandar Bojchevski

1.1K posts

@abojchevski

Trustworthy Machine Learning. Graphs. Professor at the University of Cologne. He/Him. 🏳️‍🌈 Open PhD/PostDoc positions: https://t.co/QSCqXRzlEu

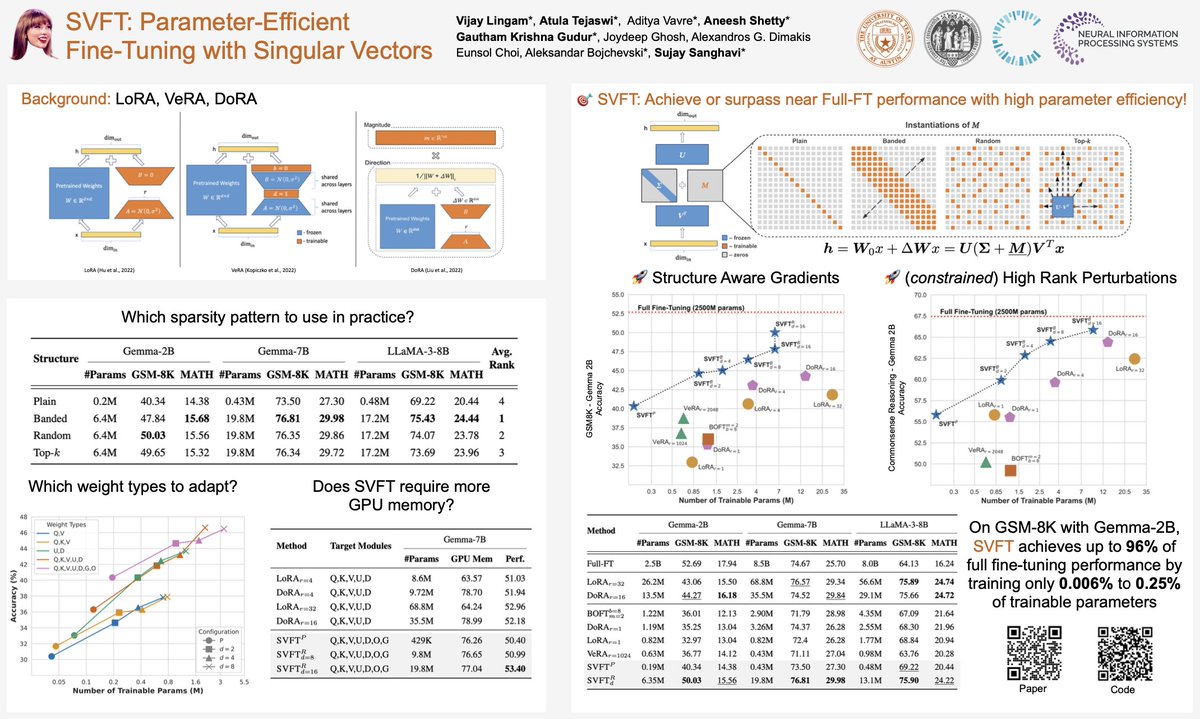

🚀 Exciting new paper alert! Achieve up to 96% of full fine-tuning performance with just 0.006-0.25% of trainable parameters! ✨ How? It’s all in the singular vectors! Introducing 🎯 SVFT: Singular Vectors guided Fine-Tuning for PEFT. Here’s a quick breakdown! 🧵 #AI #MachineLearning #NLP #CV

🎉 Thrilled to share that SVFT is officially accepted at #NeurIPS24! 🙌 See you all in Vancouver! w/ incredible co-authors @vijaylingam08 @VavreAditya @aneeshk1412 @gauthamkrishna_ Joydeep Ghosh @AlexGDimakis @eunsolc @abojchevski @sujaysanghavi
