AboveSpec

@above_spec

Love 3D printing, playing with local LLMs, and learning Claude Code

RTX 5060 Ti 16GB. $429 GPU. Last night I got 128 t/s on Qwen3.6-35B using ik_llama.cpp's R4 quant format.

Crushing performance. Faster than the 5070 Ti on mainline llama.cpp. Performance stays consistent from 0 to 139k context, with no speculative decoding used! 🤯

Special thanks to @MakJoris for sharing ik_llama.cpp with us!

Today I wanted to know if it's actually *useful* at that speed. So I gave it a coding agent and 4 creative challenges. Here's what it built. 🧵

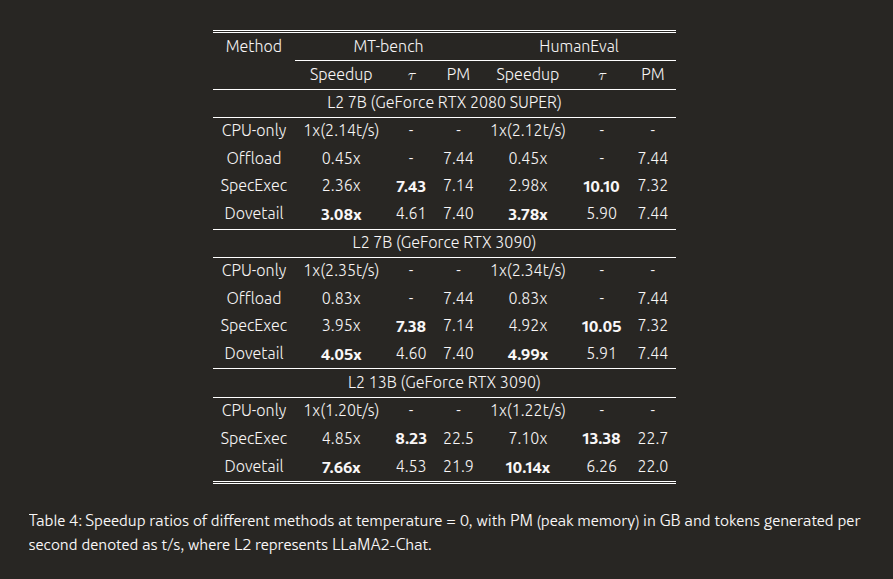

We've been doing speculative decoding completely backwards. The Dovetail paper keeps the draft model on the GPU for fast generation, and dumps the massive target model on your CPU where cheap system RAM is totally fine for a single parallel verification pass.
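For anyone who wants to see the draft-then-verify loop in code, here's a rough toy sketch in plain Python. It is not the Dovetail implementation: the "models" are made-up functions (`toy_target`, `toy_draft`), the GPU/CPU placement only exists in the comments, and it assumes greedy decoding with exact-match acceptance, which is the simplest variant of speculative decoding. In a real transformer the verify step is one batched forward pass; here it is simulated with a loop.

```python
from typing import Callable, List

# A "model" here is just a function: token prefix -> next token (greedy pick).
Model = Callable[[List[int]], int]


def toy_target(prefix: List[int]) -> int:
    """Hypothetical stand-in for the big target model (would live in system RAM)."""
    return (prefix[-1] * 7 + len(prefix)) % 50


def toy_draft(prefix: List[int]) -> int:
    """Hypothetical stand-in for the small draft model (would live on the GPU).
    It agrees with the target most of the time and is wrong every third position."""
    guess = toy_target(prefix)
    return guess if len(prefix) % 3 else (guess + 1) % 50


def speculative_step(draft: Model, target: Model, prefix: List[int], k: int) -> List[int]:
    """One draft-then-verify round. Returns the tokens accepted this round."""
    # 1) Draft phase: propose k tokens one at a time (cheap and fast).
    proposed: List[int] = []
    for _ in range(k):
        proposed.append(draft(prefix + proposed))

    # 2) Verify phase: ask the target what it would emit at each of the k+1 positions.
    #    Simulated with a loop; a real model scores all positions in one pass.
    target_choice = [target(prefix + proposed[:i]) for i in range(k + 1)]

    # 3) Keep draft tokens while they match the target, then append the
    #    target's own token (a correction on mismatch, a bonus token otherwise).
    accepted: List[int] = []
    for i in range(k):
        if proposed[i] != target_choice[i]:
            break
        accepted.append(proposed[i])
    accepted.append(target_choice[len(accepted)])
    return accepted


def generate(draft: Model, target: Model, prompt: List[int], n_tokens: int, k: int = 4) -> List[int]:
    """Generate n_tokens after a non-empty prompt using repeated speculative rounds."""
    out = list(prompt)
    while len(out) - len(prompt) < n_tokens:
        out.extend(speculative_step(draft, target, out, k))
    return out[: len(prompt) + n_tokens]


def greedy(target: Model, prompt: List[int], n_tokens: int) -> List[int]:
    """Plain greedy decoding with the target only, for comparison."""
    out = list(prompt)
    for _ in range(n_tokens):
        out.append(target(out))
    return out
```

The point of the sketch: with exact-match acceptance, `generate(toy_draft, toy_target, prompt, n)` produces the same tokens as `greedy(toy_target, prompt, n)`, but the target is consulted once per round instead of once per token. Every round yields between 1 and k+1 tokens, so the slow model does less sequential work whenever the draft guesses well.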