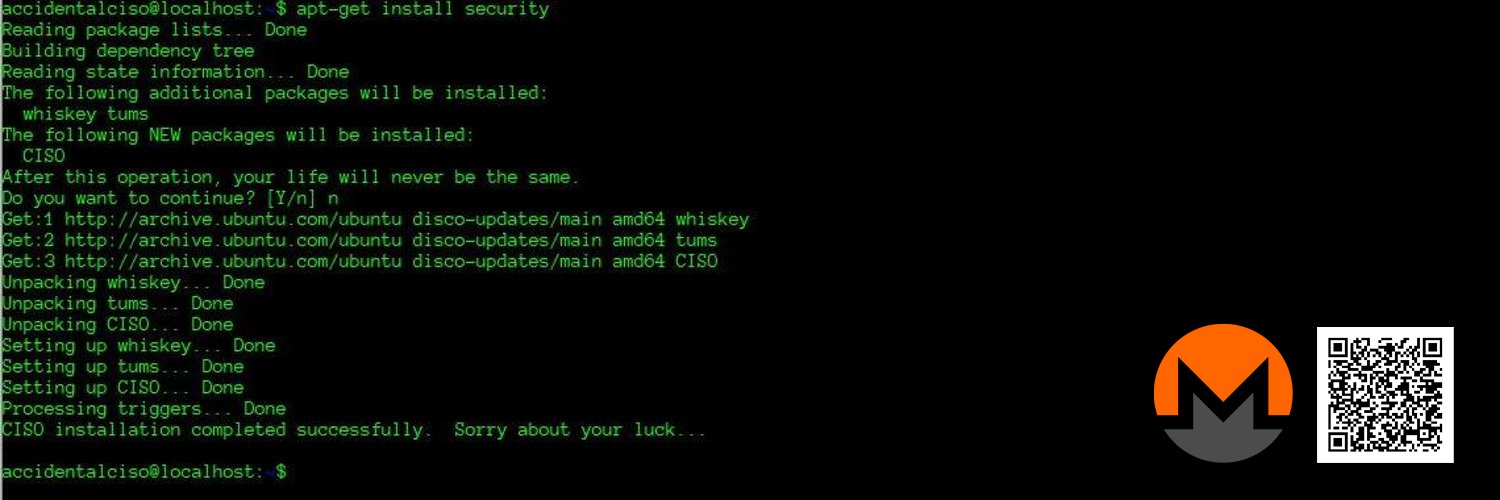

Accidental CISO

@AccidentalCISO

I accidentally became the CISO. I didn't want this job, but the job chose me. I'm scared, and I want to go home.

Never trust a skinny chef. Never trust a buff programmer.

Tune in live as we record another episode of the show this Friday, May 15 at 3:00pm Eastern! This time, we'll be taking questions from the audience about how to learn about AI in your organization safely. focivity.com/podcast

@techspence really brings up a great topic here, so I am adding my two cents.

The first step in planning secure AI adoption is scoping. The approach varies depending on whether we are talking about deployment of agentic solutions, secure AI development, general employee use of GPTs in their work, AI features appearing AutoMagically in existing products, adversary use of AI, or ShadowAI taking almost all orgs by storm.

This alone seems to hint toward an answer to the first question. Is it possible? Possible to make strides and do our best? Sure. Possible to make it through this unscathed? Not a chance. An old friend used to say, "Don't worry; nothing is going to be alright." That applies here. Can we come up with a strategy that avoids most of the worst outcomes, a lot of the time? Yes. Will the next half decade be a train wreck? Also, yes.

Let's start with agentic, since that is at the very top of my AI risk ranking, at least for the things we have some control over as defenders. The key here is control and isolation. As @christian_tail has pointed out, the policies applied as part of your harness are not a substitute for genuine isolation. For true freedom of movement for creators using agents, you want the harness to be in a VM or container with minimal, carefully thought-out trust boundaries between VM and host. Network isolation should restrict what it can access (and its egress IP, so your main space does not get blacklisted if an agent acts stupid). And finally, account isolation has to be airtight. This also covers reused passwords, weak secrets, host configuration artifacts, and so on (as Spencer always points out).

For trying to govern the random chat bots that teleport into each SaaS application, personal use of frontier models for random tasks, and the lower-risk shadow AI, for the average org it is like the classic 80s song. Hold on loosely, but don't let go.
If you try to keep a crazy level of control over all of this during such a time of great change, it will not go well. Don't get me wrong, if you make missiles or something, work out of a SCIF and lock down all the things. For the average org though, the risk of becoming obsolete is as great as any other risk during these times.

Larger organizations looking for a way to safeguard data by using only company accounts can use a SWG/SASE solution to front-end AI solutions, creating policies to allow the sanctioned and official AI sources while blocking personal accounts and unauthorized resources. And, don't get me wrong, everybody should do what they can here, but also maintain a realistic view of risk across the board and realize that this can be a lot of lift for risks that seem largely hypothetical. We see ransomware attacks coming from infostealer / access broker activity all the time, whereas true catastrophic incidents due to models being trained with company data still seem few and far between (not counting the initial grift to make those models in the first place, since that has already happened). And if it is not PII or regulated/secret data, what is your real exposure?

And for the tinfoil hat club afraid to upload your PowerPoint slides for fear Anthropic or OpenAI will steal your intellectual property: Get over yourself. You are not Nikola Tesla. The super intelligent AI overlords are not going to be made so much smarter by your slides that the closest competitor will put you out of business because your clip art on slide 23 was just that amazing and now the AI-gods have it. Unless you really are Nikola Tesla, in which case, DM me and let's be friends.

Where the real destructive power comes in is ShadowAI and ShadowIT/software created by unauthorized use of AI. This will really wreck some shit. And this is one of two use cases where the answer is back to basics. And now we really have to get the basics right.
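
To make the SWG/SASE point above concrete, here is a rough sketch of the decision such a policy boils down to: is the destination an AI service, is it sanctioned, and is the user signed in with a company account. Everything named here is an assumption for illustration (the hostnames, the `swg_decision` function, and the idea that the gateway can see the account domain); real products express this in their own policy language.

```python
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical lists; a real gateway would use vendor-maintained categories.
KNOWN_AI_HOSTS = {"ai.example-corp.test", "chat.example-ai.test"}
SANCTIONED_AI_HOSTS = {"ai.example-corp.test"}
COMPANY_DOMAIN = "example-corp.test"


def swg_decision(url: str, account_domain: Optional[str]) -> str:
    """Return 'allow', 'block', or 'not_ai' for one outbound request."""
    host = (urlsplit(url).hostname or "").lower()
    if host not in KNOWN_AI_HOSTS:
        return "not_ai"  # left to whatever other policies apply
    if host not in SANCTIONED_AI_HOSTS:
        return "block"  # unauthorized AI resource
    # Sanctioned service: only company accounts get through.
    if account_domain is None or account_domain.lower() != COMPANY_DOMAIN:
        return "block"  # personal or unknown account
    return "allow"
```

The ordering is deliberate: an unsanctioned AI host is blocked regardless of account, and a sanctioned host still gets blocked for personal logins.
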
We need application control to prevent unauthorized agentic-capable software harnesses/products from running where we do not want them. We need URL filtering and analysis of some sort to block the places we do not want users to go. We want the principle of least privilege applied, including LAPS and no local admin. We need proper segmentation, cleaned-up domains/IAM implementations, and effective and validated business processes for high-risk activities (from password resets to wire transfers). We need to clean up technical debt, and we need GRC processes that deal with real risk and not a bunch of goofy mumbo jumbo that is not rooted in reality. POC or GTFO on any stated risk.

And, where we do run agents, in addition to isolation, the new emerging category of AIDR / AI Detection and Response will become important. As we increasingly need to allow agents all over the place, we need block/allow decisions to become more contextual, and we need additional options besides just block, or isolate and allow.

And what about adversarial use of AI? It is really these same things: we need to get the basics right, although I will add that patching lifecycles will also need to become more efficient, effective and automated (but not too automated; reference npm). The hype around Mythos has done us a great disservice though, because it has focused everyone on finding obscure zero days in source code, which does not materially change the game that much, aside from the need to patch more things faster. This is a huge problem, because it buries the lede of how adversaries really are going to use this to great effect, which is to employ agents with hacking skills to exploit known things with much greater speed, efficiency, and effectiveness.
They are not going to take the source code for some project and find some obscure zero day to get in and ransom you (usually). They will apply agentic harnesses to take advantage of the fact that your password is Spring2026!, the user is local admin, and there are ADCS template misconfigurations allowing a speed run to DA. The exotic and cool zero day stuff will still happen and it will be important; it is just not the lowest token cost for the highest number of compromises in most cases, so the tokenomics do not exactly line up toward zero day brute forcing of flaws being the main use of these powerful models. It will be a lot of the same old things, done better and faster.

Finally, how do we do secure development in an AI-centric and agentic world? With flows and specialized harnesses trained and constrained to specific types of tasks. One stage of a pipeline will allow for development, then another will have agents inspect and report prior to promoting code, with human tollgates at key places and when certain conditions are met. Some of these conditions need to be classic decision gates that are hard-coded, vs agentic vibe gates.

We live in a cool and scary time, with lots of fun and exciting work to do. And these are just a few thoughts from one random security dude, so I am really curious what others think, including a few experts I have looped in who have forgotten more than I will ever know on this topic. Thank you Spencer for starting this great discussion.

Sorry for the text wall @UK_Daniel_Card @Shammahwoods @Jhaddix @0xBoku @HackingDave @kuzushi @ZackKorman
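
For anyone wondering what a hard-coded decision gate vs an agentic vibe gate might look like in the pipeline described above, here is a minimal sketch. The `Change` fields, the 0.8 threshold, and the `promotion_decision` function are all hypothetical, not taken from any real pipeline product. The point is that the agent's opinion is only one input, and the rules that force a human tollgate are plain code nobody can talk their way around.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    """Facts about a proposed code change (all fields are hypothetical)."""
    touches_auth: bool         # modifies authentication/authorization code
    touches_secrets: bool      # modifies secret handling or credentials
    tests_passed: bool         # result of the automated test stage
    agent_review_score: float  # 0.0-1.0 score reported by the reviewing agent
    human_approved: bool       # a human signed off at the tollgate


def promotion_decision(change: Change, min_agent_score: float = 0.8) -> str:
    """Return 'block', 'needs_human', or 'promote'."""
    # Hard gate: failing tests never promote, whatever the agent says.
    if not change.tests_passed:
        return "block"
    # Hard gate: sensitive areas always require a human tollgate.
    sensitive = change.touches_auth or change.touches_secrets
    # Soft signal: a low agent score escalates to a human, it does not decide.
    low_score = change.agent_review_score < min_agent_score
    if (sensitive or low_score) and not change.human_approved:
        return "needs_human"
    return "promote"
```

Note that a glowing agent review cannot promote a change touching auth or secrets on its own; only the human sign-off can.
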

![Posty™ [Loa of Pizza]](https://pbs.twimg.com/profile_images/1514441991576297478/5mhiobMH.jpg)

We didn't realize then how good we had it. 🍕

![Posty™ [Loa of Pizza] tweet media](https://pbs.twimg.com/media/HHleyycWwAguPLA.jpg)