Securely adopting AI.... Is it even possible? How should IT/Security leaders be thinking about this? I have my own ideas but I'm not as deep as many of you. Would love some perspectives on this. Planning to do a podcast on this soon.

Mike Manrod

@CroodSolutions

CISO and faculty by day, adversary emulation/tools by night, bad jokes and memes all the time.

@NathanMcNulty So low effort I was surprised it worked. I owe you a beer. Maybe that other guy too. 1st is 13 failed approaches, 2nd is working. Awareness of your repo contents saved me from having to go into F12.

Security things from the last few days:

- CopyFail (Linux pwn'd)
- CopyFail 2/Dirty Frag
- 13 advisories in Next.js
- Over 70 CVEs addressed in macOS 26.5
- ~50 CVEs addressed in iOS 26.5
- YellowKey (Windows BitLocker pwn'd entirely)
- GreenPlasma (Windows privilege escalation)
- CVE-2026-21510 and CVE-2026-21513 confirmed to be used by Russia for Windows RCE
- CVE-2026-32202 separately confirmed to be used by Russia for sensitive document access
- Mini-Shai Hulud (over 300 JS and Python packages compromised via GitHub Action cache poisoning)
- Google confirms they have identified AI-powered exploitation of zero days in an unidentified "open-source, web-based system administration tool"
- Canvas (popular LMS used in most schools) pwn'd entirely
- PAN-OS (Palo Alto Networks) pwn'd with a 9.3 severity CVE-2026-0300

Are you scared yet?

> wake up > take a shit > get out of bed > check internet > nginx rce > look inside > requires ASLR disabled

📍 High AI adoption is not reducing workforce anxiety. In many cases, it is accelerating it.

As BCG highlights, some of the countries with the highest rates of regular GenAI usage also report the highest fears around job loss. The closer employees get to AI-enabled workflows, the clearer the organizational consequences become.

1️⃣ Structural Shift: Frequent exposure to AI changes how employees view task ownership, workflow coordination, and long-term role stability. Anxiety rises when people can directly observe capability substitution.

2️⃣ Governance Gap: Most organizations are accelerating deployment faster than they are redesigning workforce transition models. Employees see implementation velocity, but not a credible path for role evolution.

3️⃣ Talent Implication: High-adoption environments may increasingly split workforces into AI orchestrators and execution-heavy roles vulnerable to compression. That changes promotion pathways, training priorities, and internal mobility structures.

This is why AI confidence and AI fear are now rising at the same time. The real workforce challenge is not whether AI will augment work. It is whether organizations can redesign career structures fast enough to prevent large-scale trust erosion inside the workforce.

via BCG buff.ly/ub8Y0k0

@TCyberCast @sulefati7 @bulbi59 @corixpartners @bbailey39 @NathaliaLeHen @harbi_nh @Corix_JC @Transform_Sec @bociek191905 @Alovesublime @YalaCoder @kkruse @Yash_ai6 @DioOmega @EduardoValenteI @ozsilverfox @jameslhbartlett @giuliog @michaeldacosta @marmelyr @arigatou163 @O_Berard @faryus88 @ILoveBooks786 @RLDI_Lamy @VivMilanoFSL @FrRonconi @ramonvidall @ricardo_ik_ahau @olivierfroggy @kachofugetsujp @pchamard

Even with modest estimates, in 10 years total vulnerabilities could triple. Couple thoughts:

- We need solutions that can dramatically scale IMPACT PREDICTION, PRIORITIZATION and REMEDIATION. We don't need to find hundreds of thousands of low-impact crud
- CVE count is a terrible metric for defenders. Those advising/running security programs need to reframe this conversation to focus on the first point
- Defenders (and leadership) need to be OK with a large number of known vulns that will never be patched

*ChatGPT-generated chart & stats

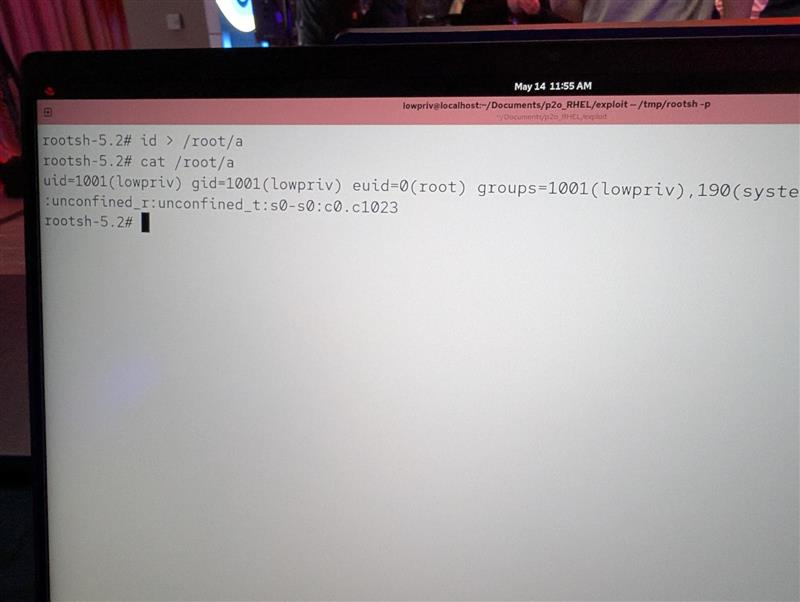
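For a sense of scale, the "could triple in 10 years" estimate can be turned into an implied yearly growth rate. This is only a back-of-the-envelope sketch: it assumes constant compound growth, which the post does not state, and the function name `implied_annual_growth` is made up for illustration.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that turns 1x into `multiple`x after `years` years.

    Assumption (not from the post): growth compounds at the same rate every year.
    """
    return multiple ** (1.0 / years) - 1.0


# Tripling over 10 years, as in the estimate above.
rate = implied_annual_growth(3.0, 10)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumption, tripling works out to roughly 11 to 12 percent growth per year, which is why a raw CVE count says little on its own about how much remediation work actually matters.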

Confirmed! @chompie1337 of IBM X-Force Offensive Research (XOR) used a race condition to escalate privileges on Red Hat Enterprise Linux for Workstations, earning $20,000 and 2 Master of Pwn points. #Pwn2Own #P2OBerlin