Adit Jain

158 posts

@aditjain1980

Vibing @ CollinearAI. PhD, Cornell ECE.

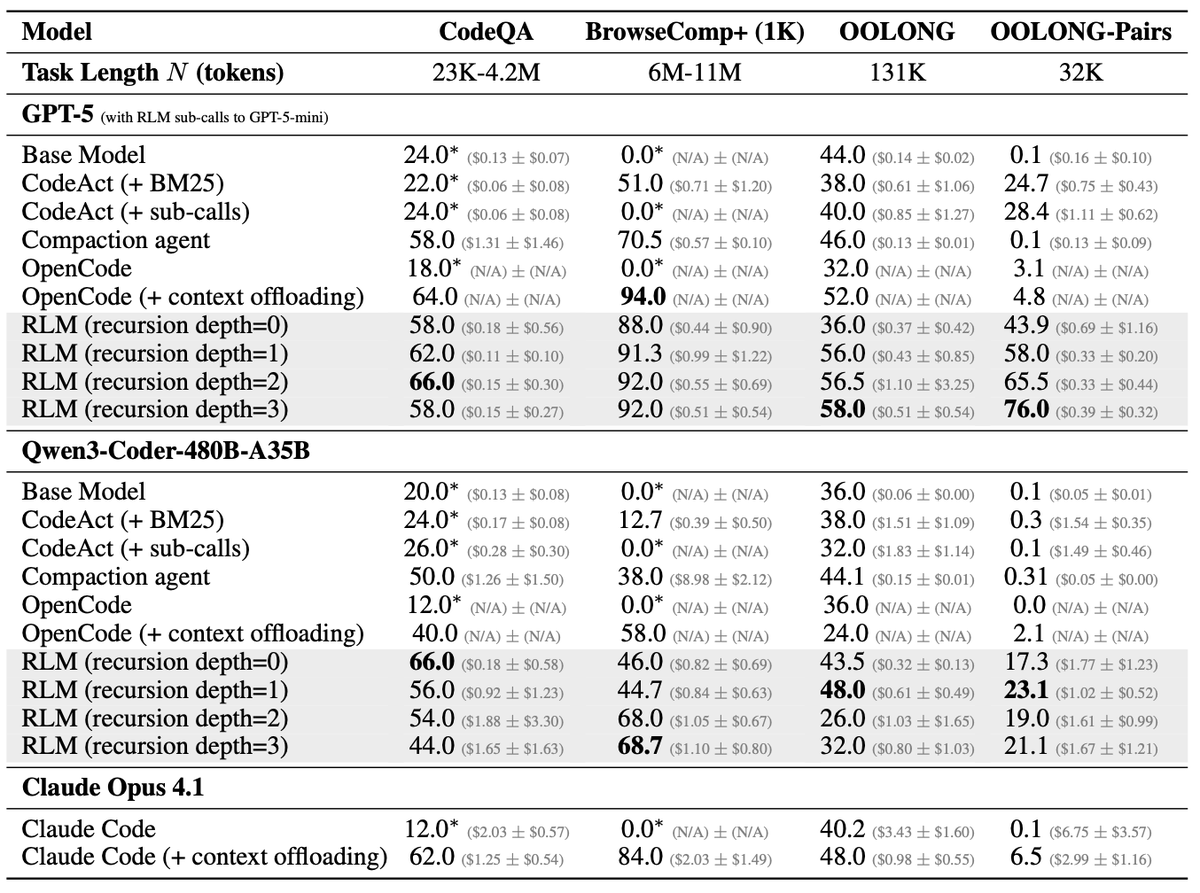

We discovered significant gaps between open and closed-source models on our realistic computer-use-agent (CUA) tasks, and it is a data problem. Although open models have nearly saturated OSWorld, we found that Kimi K2.6 cannot do tasks that GPT-5.4 solves in 50 steps. Our 30 tasks are realistic: the agent works with an open-source office suite on a Linux OS and compiles Excel sheets. GPT-5.4-high solves 2/3 in 25 steps and 1/3 in 50 steps. Kimi K2.6, the strongest open model on OSWorld, fails almost all of them. We think the problem is simple: open models are not trained on enough realistic CUA data. To test this hypothesis, we RL-ed Kimi K2.6 on 10 in-domain CUA office tasks with LoRA. This simple RL run yields a significant +30% increase in the model's ability to do office tasks. Moreover, the improvement carries over to OSWorld itself: on a stratified subset of 30 tasks, the RL-ed model sees another +10% lift. The takeaway from our initial results is that CUA models suffer from unrealistic, low-quality data. As a result, we are continually building realistic apps / RL environments to bridge the gap. More to come. Solid work done by @alckasoc

According to new data from Ramp, Anthropic has passed OpenAI in business adoption for the first time. 'Adoption of Anthropic rose 3.8% in April to 34.4% of businesses. OpenAI adoption fell 2.9% to 32.3%. Overall AI adoption rose 0.2 percentage points to 50.6%.'

Claude Code 2.1.139 added /goal. You set a completion condition and Claude keeps working across turns until it's met. Works in interactive, -p, and Remote Control 👏

wow Mythos finally broke the METR graph

We evaluated an early version of Claude Mythos Preview for risk assessment during a limited window in March 2026. We estimated a 50%-time-horizon of at least 16hrs (95% CI 8.5hrs to 55hrs) on our task suite, at the upper end of what we can measure without new tasks.

Some things never change. If you don’t understand this one, you don’t understand what’s happening in AI. Marcus, 1998: neural nets have trouble generalizing far beyond the data. Marcus, 2001, 2012, 2019, 2022, etc.: neural nets have trouble generalizing far beyond the data. Apple, 2025: neural nets have trouble generalizing far beyond the data. Meta/Stanford/Harvard, 2026: neural nets have trouble generalizing far beyond the data.

Introducing 500+ legal agents and a new Agent Builder in Harvey.