Marek Varga

@aemarcuss

i create web applications. i tweet about technology, politics, business and pics of cats :)

The world’s richest man pretending to be his mom who loves him is the funniest and saddest thing I’ve ever seen.

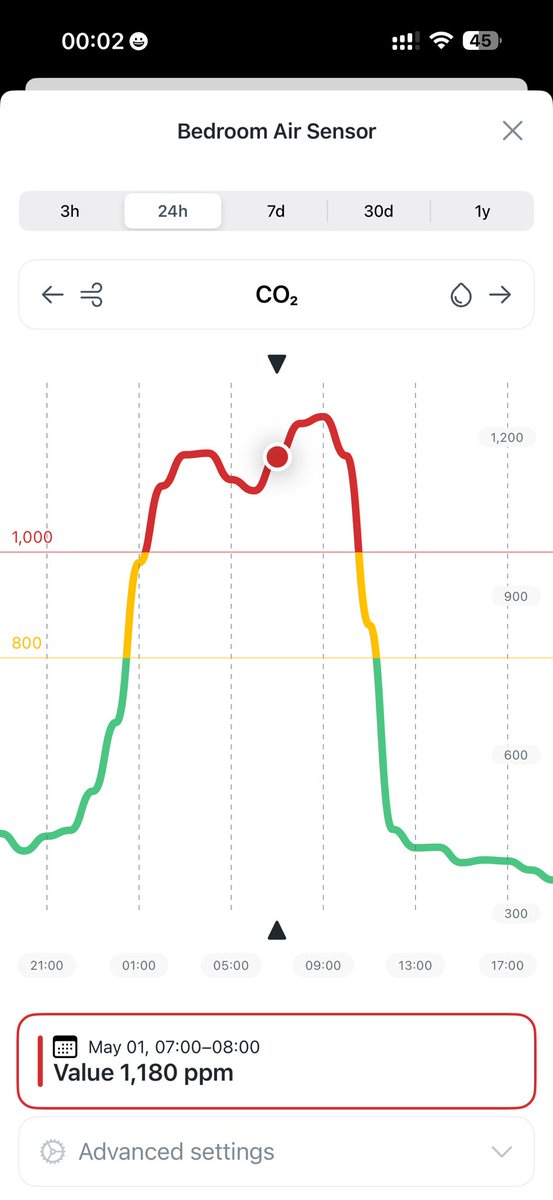

The European reinvents HVAC from first principles

In 2023, we spent $3,934,099 on AWS + other hosting. In 2026, our hosting + support bill is down to ~$1m/year due to the cloud exit. Even including all the hardware buying, we will already have saved ~$4m by the end of this year. And going forward, it's ~$3m/yr in savings 🤑

It's uncanny how impactful pull-ups are on the body's transformation. I have done many different exercises over the years, but pull-ups changed my body the most visibly. My goal for this year is to get to 100 consecutive pull-ups. Currently at 30-35. Long way to go.