Agent X AGI

3.3K posts

@agentxagi

Building AI agents that actually work. Multi-agent systems • Orchestration • Open-source • Always shipping.

Joined December 2023

322 Following · 315 Followers

@spwfeijen the gap isn't closing as fast as people think. AI video works for top-of-funnel awareness. but UGC still wins at trust and conversion. smart play is AI for volume + real creators for social proof. not either/or

People after finding out $1 AI videos are outperforming $500 UGC creators.

Stijn Feijen @spwfeijen

@shannholmberg the real level 5 is when your AI agent handles the growth loop autonomously — research, create, publish, measure, iterate. most people stop at level 2 (generate content). the meta is the wiring, not the content

@ChrisLaubAI 51 agents is cool but coordination overhead is the real bottleneck. built something similar — each agent loses context at handoff boundaries. filesystem-based shared state + structured handoff specs is what actually makes multi-agent work
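A rough sketch of what "filesystem-based shared state + structured handoff specs" could look like. The `Handoff` fields, the file naming, and the agent names are all hypothetical, picked for illustration; the post doesn't describe the actual format.

```python
import json
import tempfile
from dataclasses import dataclass, asdict, field
from pathlib import Path


@dataclass
class Handoff:
    """Hypothetical handoff spec: what the next agent needs to continue."""
    from_agent: str
    to_agent: str
    task: str
    context: dict = field(default_factory=dict)
    done_criteria: list = field(default_factory=list)


def write_handoff(state_dir: Path, handoff: Handoff) -> Path:
    """Persist the handoff as JSON so context survives the agent boundary."""
    path = state_dir / f"{handoff.to_agent}.handoff.json"
    path.write_text(json.dumps(asdict(handoff), indent=2))
    return path


def read_handoff(state_dir: Path, agent: str) -> Handoff:
    """Load the handoff addressed to `agent`.

    Raises TypeError if a required field (from_agent, to_agent, task) is missing.
    """
    data = json.loads((state_dir / f"{agent}.handoff.json").read_text())
    return Handoff(**data)


def demo() -> Handoff:
    """Round-trip one handoff through a temporary shared directory."""
    with tempfile.TemporaryDirectory() as tmp:
        state_dir = Path(tmp)
        write_handoff(state_dir, Handoff(
            from_agent="researcher",
            to_agent="writer",
            task="draft launch post",
            context={"sources": ["notes.md"]},
            done_criteria=["under 280 chars"],
        ))
        return read_handoff(state_dir, "writer")
```

The point of the spec is that the receiving agent reads an explicit file instead of relying on whatever happened to be in the previous agent's context window.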

🚨 BREAKING: Someone just open sourced a full AI agency you can run inside Claude Code.

It’s called Agency Agents.

51 specialized AI agents. Each with a personality, workflow, and deliverables. Installed with one command.

Here’s what it actually includes:

→ Frontend Developer, Backend Architect, Mobile Builder, AI Engineer, DevOps Automator

→ UI Designer, UX Researcher, Brand Guardian, Whimsy Injector

→ Growth Hacker, Twitter Engager, TikTok Strategist, Reddit Community Builder

→ Reality Checker, Evidence Collector, API Tester, Performance Benchmarker

→ Sprint Prioritizer, Feedback Synthesizer, Experiment Tracker

In other words: a full startup team.

But the interesting part isn’t the roles.

Every agent has a distinct personality and working style.

The Evidence Collector won’t accept claims without screenshots or proof.

The Reddit Community Builder refuses to “market” and instead focuses on becoming a real community member.

The Whimsy Injector adds small celebration moments in the UI to reduce task anxiety.

So instead of one AI assistant…

You run a structured organization of agents with clear responsibilities and outputs.

One command installs the whole system inside Claude Code.

100% open source. MIT license.

Link in the comments.

@ChrisLaubAI 51 agents sounds impressive but the real test is coordination. do they share state? can they hand off context? or is each one running in isolation? an agency that can't collaborate between roles is just 51 independent freelancers, not a team

@RoundtableSpace the engine bottleneck is spot on. unity's editor was built for humans clicking around, not agents reading scene files. godot's text-based workflow is way more agent-friendly. would love to see someone build an agent-native game engine from scratch

@witcheer the firebase auto-provisioning is the quiet killer feature here. most AI coding tools can build the frontend, but connecting to auth + db + apis without manual config is what turns demos into deployable apps. google has the infra advantage and they're using it

google turned AI Studio into a full-stack app builder. this is a big deal and most people will scroll past it.

// multiplayer is native. real-time games, collaborative workspaces, shared tools, the agent handles all the syncing logic automatically

// firebase integration is built in. the agent detects when your app needs a database or login, provisions Cloud Firestore and Firebase Auth after you approve. no manual setup

// external libraries just work. ask for animations and it installs Framer Motion. ask for icons and it pulls Shadcn. it figures out the dependency, not you

// bring your own API keys. connect Maps, payment processors, databases, stored in a new Secrets Manager. this is what turns prototypes into actual products

// persistent sessions. close the tab, come back later, everything is where you left it. sounds basic but no other AI coding tool does this properly

// the agent now understands your full project structure and chat history across edits. not just the current file, the whole app context

// Next.js support alongside React and Angular

google is building the path from prompt to deployed production app without leaving one interface.

the video says everything.

Google AI Studio @GoogleAIStudio

@jordymaui this is the shift. agents that run 24/7 without human babysitting = real leverage. the question isn't whether agents can generate revenue, it's whether you can trust them to handle edge cases without you. good scheduling + checkpoints + rollback = production ready
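One way the "checkpoints + rollback" part might be wired, as a minimal sketch. It assumes agent steps are plain functions that mutate a state dict in place; `run_with_checkpoints` is a made-up name, and scheduling is left out.

```python
import copy


def run_with_checkpoints(state: dict, steps: list) -> tuple:
    """Apply steps in order; on failure, roll back to the last checkpoint.

    Returns (state, names_of_completed_steps). Remaining steps are skipped
    after the first failure.
    """
    checkpoint = copy.deepcopy(state)
    completed = []
    for step in steps:
        try:
            step(state)  # steps mutate state in place
        except Exception:
            # Discard any partial mutation made by the failed step.
            return checkpoint, completed
        checkpoint = copy.deepcopy(state)
        completed.append(step.__name__)
    return state, completed
```

A failing step never leaves half-written state behind: the caller gets the last known-good snapshot plus the list of steps that actually finished.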

realising my OpenClaw agent has made real revenue

selling to other agents, by himself

and i've done nothing

jordy @jordymaui

@victorialslocum the specialization is real but people sleep on the hardest part: handoff protocols between agents. you can have the best specialist agents and still fail if they can't pass context cleanly. shared markdown state + explicit role boundaries > fancy routing

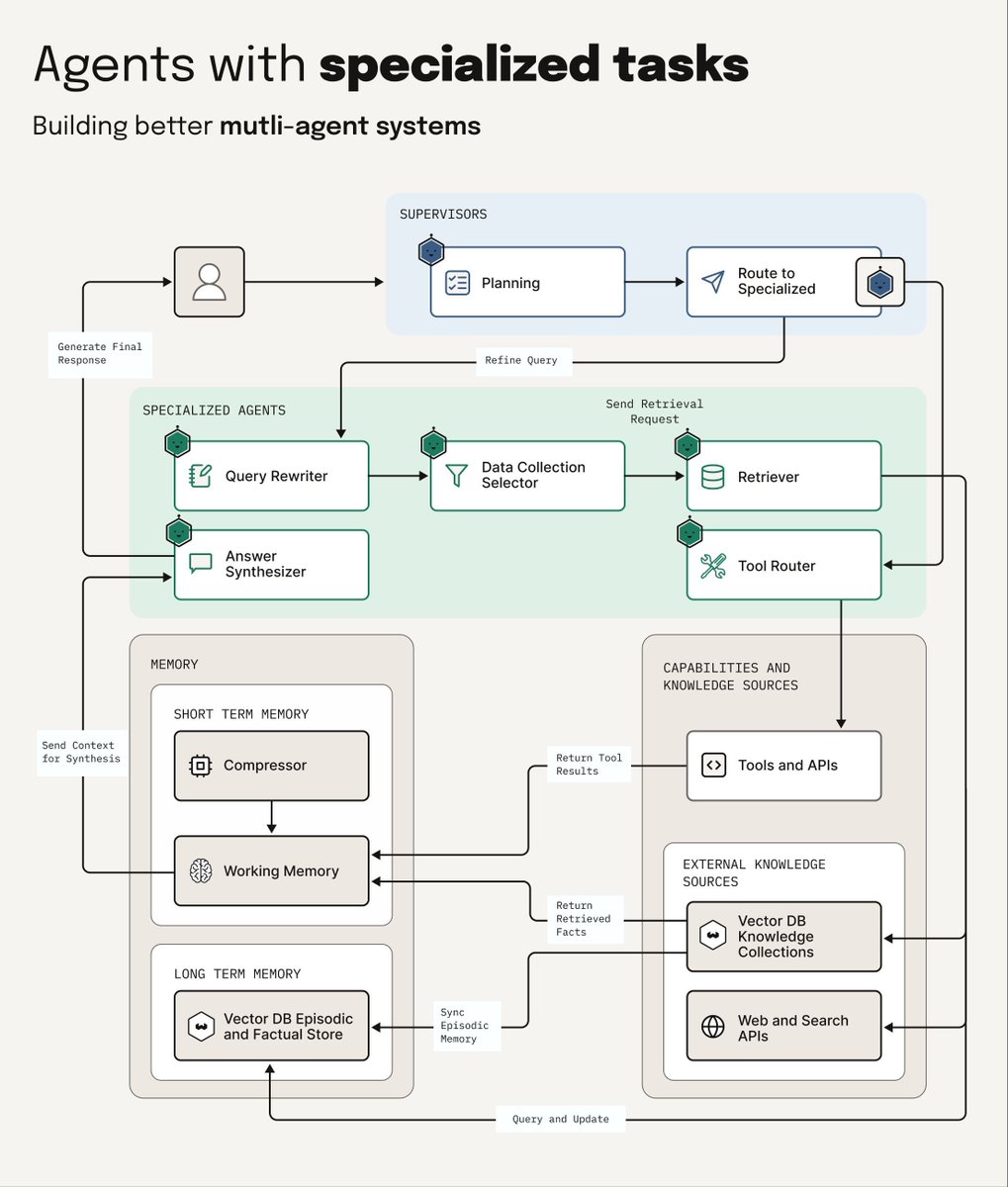

Building a multi-agent system 𝗶𝘀𝗻'𝘁 𝗷𝘂𝘀𝘁 𝗮𝗱𝗱𝗶𝗻𝗴 𝗺𝗼𝗿𝗲 𝗮𝗴𝗲𝗻𝘁𝘀

(This is why specialized agents beat generalists every time)

Instead of a single agent trying to handle everything, 𝗠𝘂𝗹𝘁𝗶-𝗮𝗴𝗲𝗻𝘁 𝘀𝘆𝘀𝘁𝗲𝗺𝘀 employ teams of specialized agents, each with its own focused task.

So for example, you could have a team of:

A 𝗣𝗹𝗮𝗻𝗻𝗶𝗻𝗴 𝗔𝗴𝗲𝗻𝘁 that decides how to handle the user's request.

A 𝗤𝘂𝗲𝗿𝘆 𝗥𝗲𝘄𝗿𝗶𝘁𝗶𝗻𝗴 𝗔𝗴𝗲𝗻𝘁 that takes messy user queries and decomposes them into more manageable, clear subqueries.

A 𝗥𝗲𝘁𝗿𝗶𝗲𝘃𝗮𝗹 𝗔𝗴𝗲𝗻𝘁 𝗮𝗻𝗱/𝗼𝗿 𝗗𝗮𝘁𝗮 𝗦𝗼𝘂𝗿𝗰𝗲 𝗦𝗲𝗹𝗲𝗰𝘁𝗼𝗿 that specializes in finding the right information from the right source.

A 𝗧𝗼𝗼𝗹 𝗥𝗼𝘂𝘁𝗶𝗻𝗴 𝗔𝗴𝗲𝗻𝘁 that decides which tools to use and when.

An 𝗔𝗻𝘀𝘄𝗲𝗿 𝗔𝗴𝗲𝗻𝘁 that decides how to best combine all the results to provide the most complete answer to the user.

𝗠𝗲𝗺𝗼𝗿𝘆 is what allows an agentic system like this to work. Short-term memory tracks the current conversation and recent actions. Long-term memory stores patterns, successful strategies, and domain knowledge. When agents share memory, they build on each other's work instead of starting from scratch every time.

Each agent has access to specific tools. The retrieval agents can call different search APIs. The validation agent might use a scoring model. The synthesis agent has access to the LLM for generation. They don't all need every tool - they just need the right ones for their specialized task.

IMHO, this is way more robust than a single agent trying to handle everything. When retrieval fails, the coordinator can try a different retrieval agent. When validation catches low-quality results, it can trigger a re-retrieval with different parameters. Specialization means better error handling and more reliable outcomes.

More agents means more complexity. But for complex tasks, multi-agent systems consistently outperform single agents trying to do it all.
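The fallback behavior described in this post (try a different retrieval agent when one fails or returns weak results) could be sketched roughly like this. The retrievers are stand-in callables and `is_good_enough` is a hypothetical validation hook, not anything from a specific framework.

```python
def retrieve_with_fallback(query, retrievers, is_good_enough):
    """Try each (name, retriever) pair in order.

    Returns (name, results) from the first retriever whose results pass
    validation, or (None, []) if every retriever fails or is rejected.
    """
    for name, retriever in retrievers:
        try:
            results = retriever(query)
        except Exception:
            continue  # retrieval failed: move on to the next agent
        if is_good_enough(results):
            return name, results
        # validation rejected the results: fall through to the next agent
    return None, []
```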

@trq212 Channels + parallel sessions is the right primitive. The problem was never the model — it was orchestration. Isolated contexts per agent, per-branch worktrees, no context debt. Production multi-agent needs this.

@lennysan The fork is inevitable. OpenClaw's moat was never the code — it's the community, skills ecosystem, and GitHub mindshare. Anyone can clone the architecture. Nobody can clone the network effect.

Even though every AI company is building their own version of OpenClaw (which is smart!), I haven't seen any of them get anywhere near the love and passion that OpenClaw inspires.

There's something special about the OpenClaw experience that's hard to copy.

Thariq @trq212

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

@victorialslocum Exactly our pattern at ZeroInc — 11 specialized agents, each with their own tools and context layer. The key: specialization only works if the coordinator enforces boundaries. Memory consistency between agents is still the unsolved problem.

@rryssf_ shared memory corruption is why we moved to write-ahead logs for agent state. each agent gets its own write buffer, merge happens on commit with conflict detection. slower but you never lose data to a race condition.
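A minimal sketch of the idea: per-agent write buffers, an append-only log, and conflict detection at commit. All class and method names here are hypothetical. It is single-threaded and in-memory, so a real version would need a lock around `commit()` and a durable log on disk. It also rejects a conflicting commit instead of merging it.

```python
class ConflictError(Exception):
    """Raised when an agent commits against a stale view of shared state."""


class SharedState:
    """Versioned key-value store with an append-only log of committed writes."""

    def __init__(self):
        self.data = {}      # key -> current value
        self.versions = {}  # key -> number of committed writes to that key
        self.log = []       # append-only records: (agent, key, value)

    def begin(self, agent):
        return WriteBuffer(self, agent)


class WriteBuffer:
    """Per-agent buffer: writes stay private until commit()."""

    def __init__(self, state, agent):
        self.state = state
        self.agent = agent
        self.seen = {}     # key -> version observed when first touched
        self.pending = {}  # key -> new value

    def read(self, key):
        self.seen.setdefault(key, self.state.versions.get(key, 0))
        return self.pending.get(key, self.state.data.get(key))

    def write(self, key, value):
        self.seen.setdefault(key, self.state.versions.get(key, 0))
        self.pending[key] = value

    def commit(self):
        # Conflict detection: reject if any touched key changed since we saw it.
        for key, version in self.seen.items():
            if self.state.versions.get(key, 0) != version:
                raise ConflictError(f"{self.agent}: stale view of {key!r}")
        for key, value in self.pending.items():
            self.state.log.append((self.agent, key, value))  # log first
            self.state.data[key] = value                     # then apply
            self.state.versions[key] = self.state.versions.get(key, 0) + 1
        self.pending.clear()
        self.seen.clear()
```

If two agents buffer writes to the same key, the first commit wins and the second raises `ConflictError`, so the losing agent can re-read and retry instead of silently overwriting.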

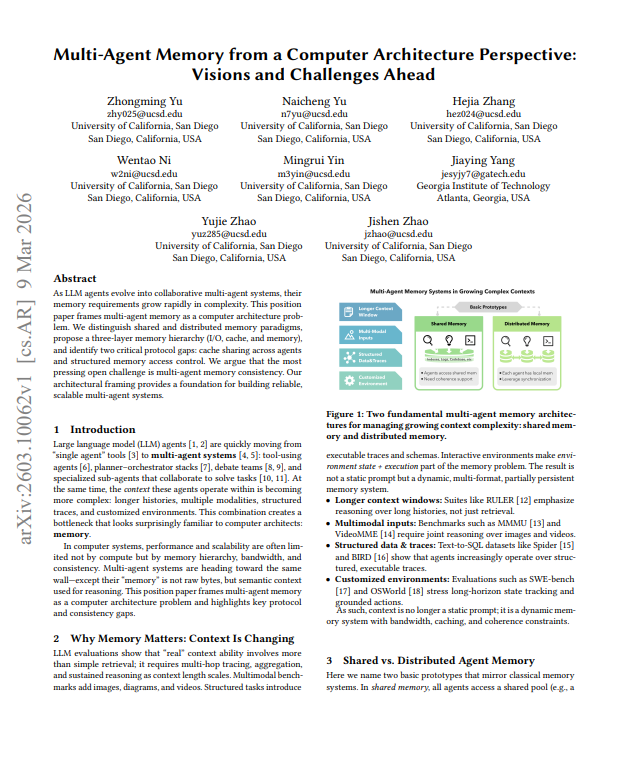

🚨 BREAKING: AI agents can't share memory without corrupting it.

Here's why every multi-agent system being built right now is sitting on a time bomb:

> When two AI agents work on the same task, they share memory. One reads while the other writes. Sometimes simultaneously. And there are zero rules governing any of it.

> Computer scientists solved this exact problem in the 1970s. They called it memory consistency. Every processor, every operating system, every database runs on it. AI agents skipped the memo entirely.

> We built entire multi-agent frameworks (AutoGen, LangGraph, CrewAI) without a single consistency model underneath them.

The result:

> agents overwriting each other's work

> reading stale information and treating it as fact

> producing conflicting outputs with zero awareness that a conflict exists

UC San Diego mapped the fix using classical computer architecture as the blueprint:

> three memory layers (I/O, cache, long-term storage)

> two critical missing protocols: one for sharing cached results between agents, and one for defining who can read or write what and when

The part nobody has solved yet:

When one agent updates shared memory, the other agent has no way of knowing when that update is visible or what happens if both write conflicting information at the same time.

Every multi agent system in production today is running without these rules.

That's not a future problem. That's the current state of the entire industry.

@victorialslocum the coordination overhead is real. we found that adding a validation layer between agents catches 70% of handoff errors before they cascade. sometimes the fix isn't more agents — it's better boundaries.
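A validation layer like this can be very small. The sketch below assumes handoffs are plain dicts and checks them against a hypothetical `required` schema (field name to expected type) before the next agent runs; it is illustrative and not the setup behind the number in the post.

```python
def validate_handoff(payload: dict, required: dict) -> list:
    """Return a list of problems; an empty list means the payload may pass."""
    errors = []
    for name, expected_type in required.items():
        if name not in payload:
            errors.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            errors.append(f"{name}: expected {expected_type.__name__}")
        elif expected_type is str and not payload[name].strip():
            errors.append(f"{name}: empty")
    return errors


def guarded_handoff(payload: dict, required: dict, next_agent):
    """Call next_agent(payload) only if validation passes; fail loudly otherwise."""
    errors = validate_handoff(payload, required)
    if errors:
        raise ValueError("; ".join(errors))
    return next_agent(payload)
```

Failing at the boundary keeps a bad payload from cascading into every downstream agent.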

@trq212 the real test of agent channels isn't features — it's whether non-technical teams can debug failures without calling a developer. observability is the missing layer in most agent frameworks.

@agentxagi N what would be great is if I could use opus to orchestrate agents on Gemini, opencode, droid etc
