Andrew

2.9K posts

@aj20000

Build open protocols not platforms

AI being an eventual bubble is the best thing that can happen for society. We’ll rebuild bigger and better on the ashes of the bubble and have ample compute and power resources to do so.

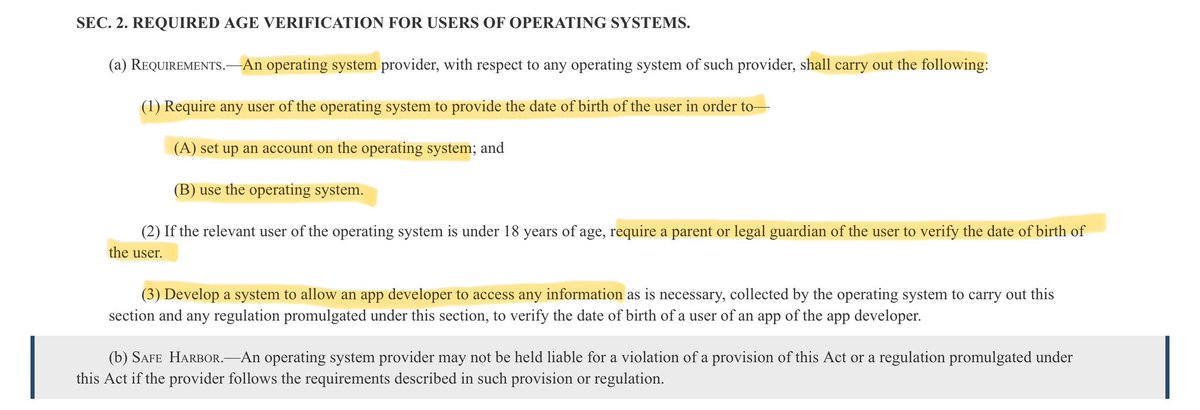

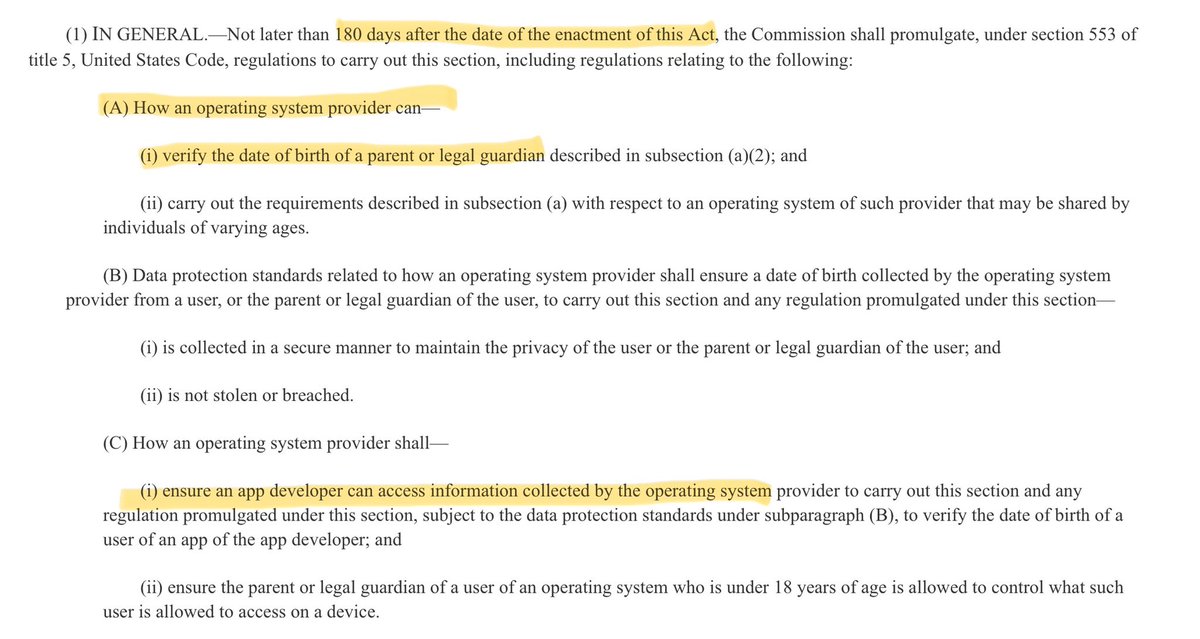

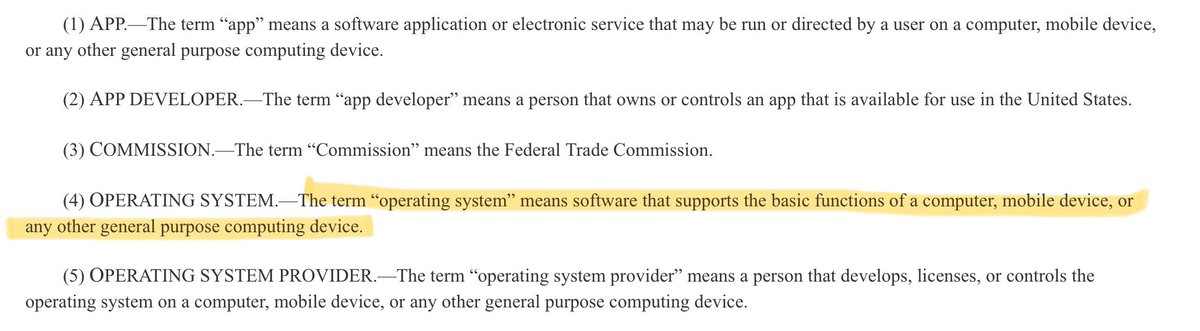

The full text for HR 8250, the proposed Federal law which would require all Operating Systems to implement Age Verification, has just been made publicly available. It is short, poorly written, clearly not at all thought out, and almost entirely devoid of specifics. Some key points:

- The bill does not specify how age verification would work at all. It states that the Federal Trade Commission would have 180 days to specify the exact mechanism and requirements for Age Verification within the Operating Systems.
- The Federal Trade Commission would also specify data storage protection requirements as well as requirements for how the Operating System must provide access to collected user data.
- This bill would apply to ALL Operating Systems. Everything from Windows to Linux to embedded systems. Yes, even to a smart refrigerator. The “Operating System” definition is incredibly broad.
- The law will be considered in effect 1 year from the date it is enacted.
- Violations of the law will be handled under the Federal Trade Commission Act.
- It is given the “Short Title” of “Parents Decide Act”.

congress.gov/bill/119th-con…

@austinhill N.B. If @elonmusk and @xAI are correct, and AGI requires a truth-seeking development vector (to avoid the proven harm to reasoning that comes from mind control)... then China will lose in the long run. Civilization depends on this. x.com/FutureJurvetso…

Hey @googlecloud you have an open vulnerability where you exposed the Gemini API to everyone who uses Firebase for auth. A hacker used that to run up a $35k bill in fake Gemini bills overnight. Our credit card is frozen. I reported this on Thursday and haven't heard back. I'm an ex-Googler, GCP customer of 7 yrs and spend about 6 figures with GCP annually. Pretty disappointed. Would appreciate some help to get a refund. See this report on it. Thousands impacted: trufflesecurity.com/blog/google-ap…

New York City spent $81,705 per homeless person last year. Meanwhile, the median household income was $81,228, per Newsweek.

And these are only the national civil servants. It cannot go on like this. A small civil service is oil, a large one is sand in the machinery of society.

Anthropic CEO: “50% of all entry-level Lawyers, Consultants, and Finance Professionals will be completely wiped out within the next 1–5 years.” Grad students and junior hires are cooked.

Silicon Valley thinks AI agents are a $20/mo self-serve subscription. Main Street is paying local agencies $10,000 just to turn them on.

Everyone assumes AI will be bought primarily online like Slack or Zoom. I think they are wrong. Some of the biggest winners in the AI boom won't be the software vendors. It will be the humans installing it.

Here is the reality of SMBs right now:

• 54% lack internal AI expertise.
• 41% have data quality too poor for AI to even work.
• 41% already prefer buying AI through a local IT provider.

You cannot "1-click install" a genius AI into a messy CRM or a 15-year-old server. It will just execute the wrong tasks at the speed of light.

The AI software will be cheap and a lot will absolutely be bought online. Making it actually work for a messy, real-world business will be expensive. Very bullish on the "Do It For Me" economy being back.