Akshay K. Jagadish

720 posts

@akjagadish

Research Fellow, @Princeton AI Lab. I use AI to study natural and artificial minds. PhD @CPILab @MPICybernetics @Uni_Tue

Meet Milena Rmus (@milenamr7) — engineering wizard, data visualization artist, and resident cat/meme/ClaudeCode extraordinaire at @RoundtableHQ_ . Every team needs a Milena. Her love of the craft shows up everywhere: building elegant visualizations for exploratory statistical work, monitoring API errors from the middle of metal concerts, and keeping company culture alive with perfectly timed memes. She’s a driving force behind Roundtable’s Proof of Human research agenda. We met through overlapping computational cognitive science circles — she holds a BA from Brown, a PhD from Anne Collins’ lab at UC Berkeley, and most recently completed a postdoc in Munich. Her publication record is extensive: Nature, NeurIPS, PLOS Computational Biology. Again and again, she brings cutting-edge AI and ML approaches to understanding the human mind and brain. At Roundtable, she leads continuous benchmarking and red-teaming of the Proof of Human API. Fraud evolves quickly — having our toughest critics in-house is a feature, not a bug. And don’t underestimate her intellectual edge. She led our “AI Capabilities ≠ Humanness” piece, grounding a contrarian AI stance in cognitive science. More work is on the way, and we’re excited to share it soon :) In the meantime — to honor Milena — here are a few cat memes.

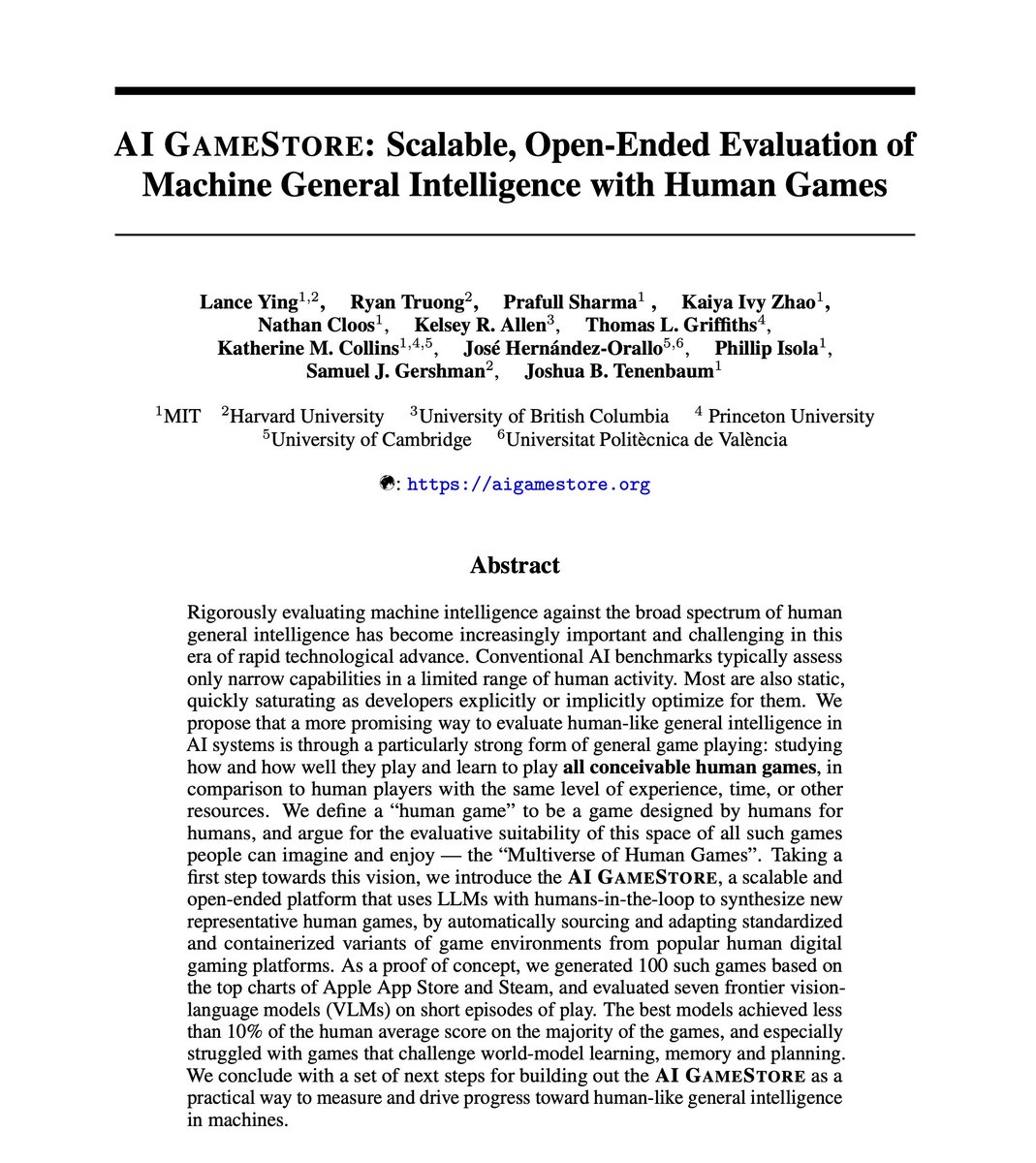

Scientists often make breakthroughs by synthesizing ideas across papers. In our new paper, we ask whether a language model can anticipate this process: given two parent papers, can it generate the core insight of a future paper built on them? 🧵⬇️

🫱 Introducing 𝐍𝐞𝐮𝐫𝐚𝐥 𝐂𝐨𝐦𝐩𝐮𝐭𝐞𝐫𝐬: 𝐰𝐡𝐚𝐭 𝐢𝐟 𝐀𝐈 𝐝𝐨𝐞𝐬 𝐧𝐨𝐭 𝐣𝐮𝐬𝐭 𝐮𝐬𝐞 𝐜𝐨𝐦𝐩𝐮𝐭𝐞𝐫𝐬 𝐛𝐞𝐭𝐭𝐞𝐫, 𝐛𝐮𝐭 𝐛𝐞𝐠𝐢𝐧𝐬 𝐭𝐨 𝐛𝐞𝐜𝐨𝐦𝐞 𝐭𝐡𝐞 𝐫𝐮𝐧𝐧𝐢𝐧𝐠 𝐜𝐨𝐦𝐩𝐮𝐭𝐞𝐫 𝐢𝐭𝐬𝐞𝐥𝐟? Beyond today's conventional computers, agents, and world models, Neural Computers (NCs) are a new frontier in which computation, memory, and I/O move into a learned runtime state. We ask whether parts of the runtime can move inward into the learning system itself. This is our first step toward the Completely Neural Computer (CNC): a general-purpose neural computer with stable execution, explicit reprogramming, and durable capability reuse. Work done with Mingchen Zhuge (@MingchenZhuge), Changsheng Zhao, Haozhe Liu (@HaoZhe65347), Zijian Zhou (@ZijianZhou524), Shuming Liu (@shuming96), Wenyi Wang (@Wenyi_AI_Wang), Ernie Chang (@erniecyc), Gael Le Lan, Junjie Fei, Wenxuan Zhang, Zhipeng Cai (@cai_zhipeng), Zechun Liu (@zechunliu), Yunyang Xiong (@YoungXiong1), Yining Yang, Yuandong Tian (@tydsh), Yangyang Shi, Vikas Chandra (@vikasc), Juergen Schmidhuber (@SchmidhuberAI)

1/14 Can we build an AI that thinks like psychologists or economists? 🤔 Our new preprint shows how reinforcement learning (RL) can train LLMs to explain human decisions, not just predict them! That is, we're pushing LLMs beyond mere prediction into explainable cognitive models.

New short-form preprint in which we use Centaur to identify gaps in interpretable cognitive models and revise them accordingly using Qwen3, fully automated and without a human in the loop. arxiv.org/abs/2505.17661

🚨 @milenamr7 and I will be presenting this work at the AI4Science workshop at NeurIPS 2025 today!
Time: 11:20 am - 12:30 pm
Location: Room 20, upper level
Workshop: AI4Science
Please drop by if you are interested in iterative program synthesis and automated cognitive modeling!

Third #runconference at #NeurIPS2025 was great, including special guest @JeffDean! Same place, same time, tomorrow morning if you want to join! 🤖🏃🏾