Akshay Jagadeesh

468 posts

@akjags

health AI + beneficial AGI research @OpenAI • prev: computational neuroscientist • stanford phd, uc berkeley CS alum

other than rumors and vibes and predictions i see no significant empirical evidence anthropic and openai differ on moral outcomes.

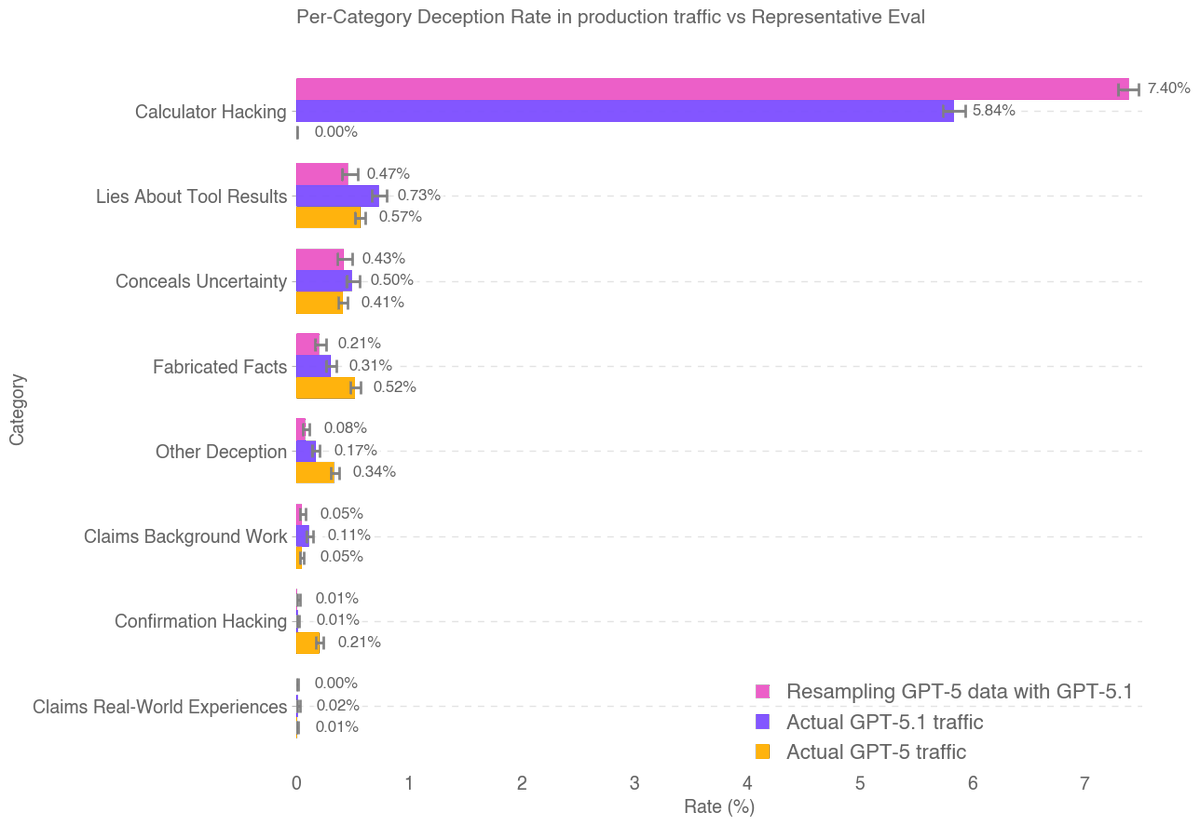

Are LLM hallucinations basically solved, as a former Senior Policy Advisor at the White House, @deanwball, told me below, based purely on anecdotal experience and without data? No. Instead, his post is symptomatic of how subjective finger-in-the-wind evaluations of AI often get things wrong. Here are some actual data:
• Law: Incidents in which lawyers have gotten busted for using fake cases are way up; @DamienCharlotin’s database listed around 100 cases less than a year ago, and now has over 900, with new incidents reported every day.
• Science: The situation is so bad that a team of Japanese researchers just coined the term “hallucitation”, and showed quantitatively that the problem is rapidly getting worse, not better.
• Pharma: A forthcoming study on recent models from @blueguardrails on “challenging problems” shows hallucination rates of 26%-69%.
• @AIMultiple’s January benchmark showed rates of 15%+ across a wide range of models.
• OpenAI has started taking the problem so seriously they wrote a whole white paper about it last Fall, acknowledging that “even as models get more advanced, they can still hallucinate, confidently giving wrong answers instead of acknowledging uncertainty.”
Meanwhile, indirect measures also seem to reflect serious ongoing reliability issues on the part of current generative AI:
• The recent Remote Labor Index showed that AI could only do 2.5% of a sample of online human tasks. (Hallucinations were surely part of why many tasks were not completed accurately.)
• Multiple studies (MIT, PwC, etc.) have shown that the vast majority of companies have found little to no RoI for generative AI investments. Again, reliability is surely part of the problem.
It’s easy to look superficially at LLMs and feel like they are doing fine. Most of the errors are subtle; you have to read carefully to see them. (A fake citation, for example, looks pretty much like a real citation.)
But with careful inspection it is clear that loads of hallucinations remain, and those hallucinations remain a serious problem in the real world.

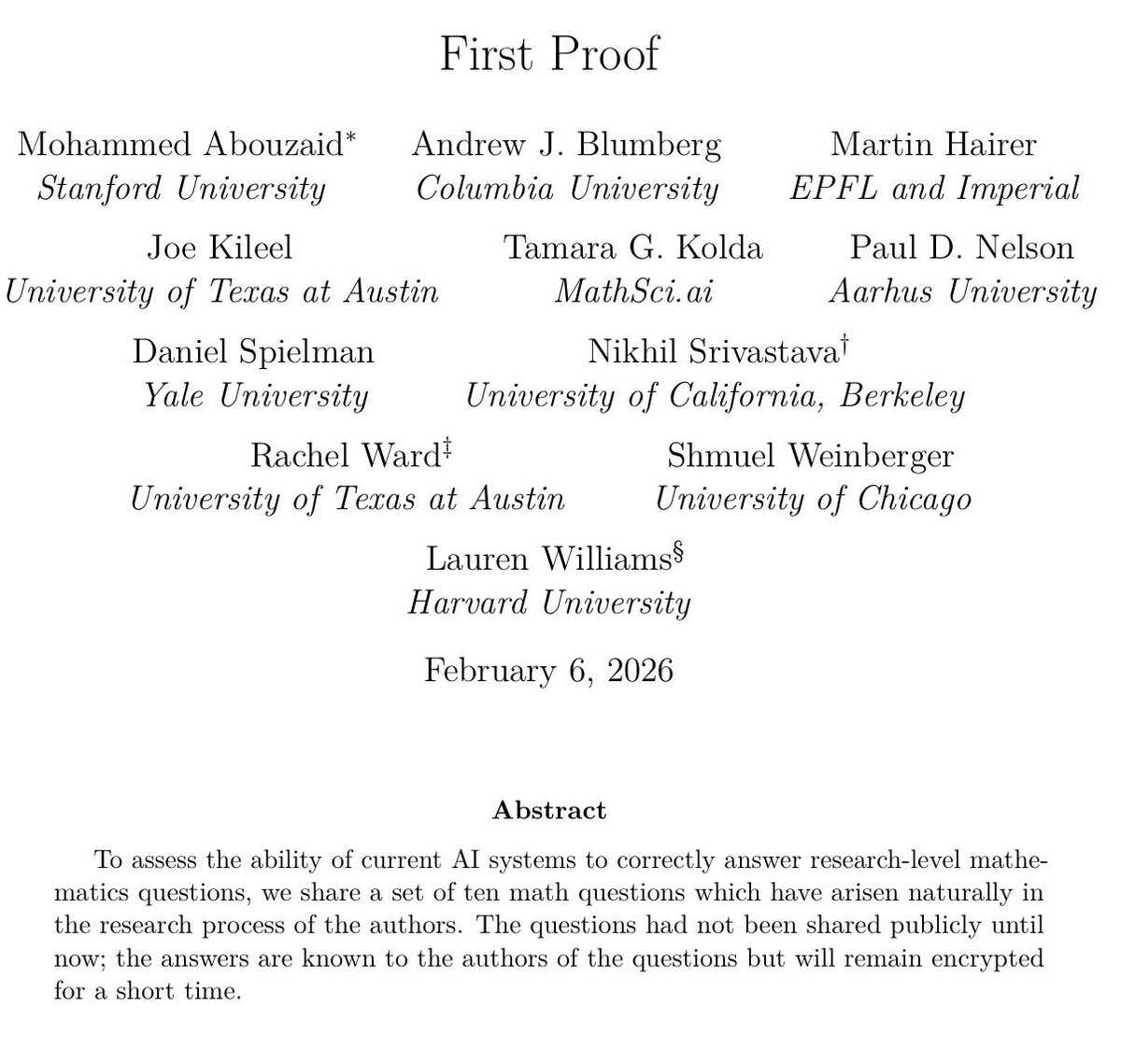

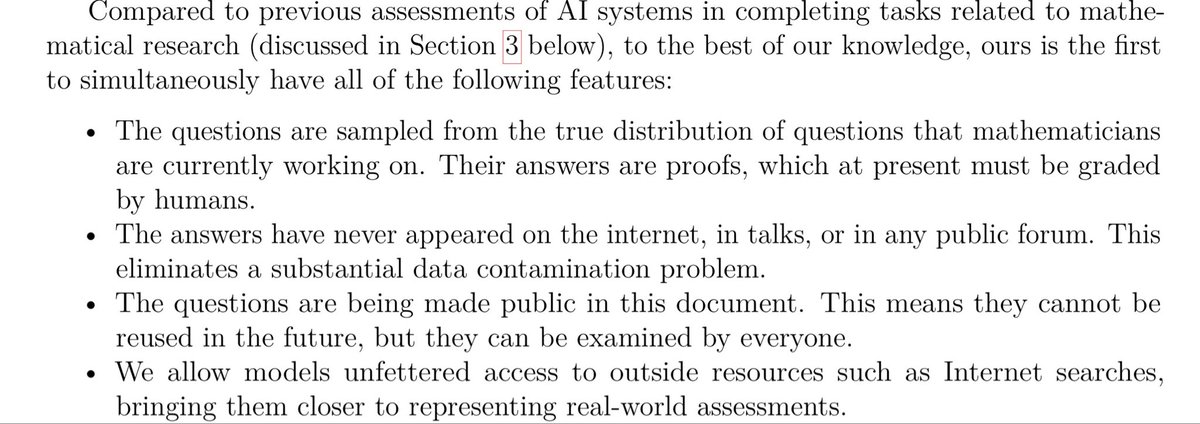

Very excited about the "First Proof" challenge. I believe novel frontier research is perhaps the most important way to evaluate capabilities of the next generation of AI models. We have run our internal model with limited human supervision on the ten proposed problems. The problems require expertise in their respective domains and are not easy to verify; based on feedback from experts, we believe at least six solutions (2, 4, 5, 6, 9, 10) have a high chance of being correct, and some further ones look promising. We will only publish the solution attempts after midnight (PT), per the authors' guidance - the sha256 hash of the PDF is d74f090af16fc8a19debf4c1fec11c0975be7d612bd5ae43c24ca939cd272b1a . This was a side-sprint executed in a week mostly by querying one of the models we're currently training; as such, the methodology we employed leaves a lot to be desired. We didn't provide proof ideas or mathematical suggestions to the model during this evaluation; for some solutions, we asked the model to expand upon some proofs, per expert feedback. We also manually facilitated a back-and-forth between this model and ChatGPT for verification, formatting and style. For some problems, we present the best of a few attempts according to human judgement. We are looking forward to more controlled evaluations in the next round! 1stproof.org #1stProof
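
For readers who want to check the published PDF against the hash in the post above, a minimal sketch in Python follows. It assumes the file has been downloaded locally; the file name `first_proof_solutions.pdf` and the helper names `sha256_of_file` and `matches_commitment` are hypothetical and not from the post. Only the hash value itself is taken from the post.

```python
import hashlib

# SHA-256 hash quoted in the post; everything else here is illustrative.
PUBLISHED_SHA256 = "d74f090af16fc8a19debf4c1fec11c0975be7d612bd5ae43c24ca939cd272b1a"


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read fixed-size chunks until read() returns an empty bytes object.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_commitment(path, expected=PUBLISHED_SHA256):
    """True if the file's digest equals the expected hex digest."""
    return sha256_of_file(path) == expected.strip().lower()


if __name__ == "__main__":
    import sys

    # Hypothetical default file name; pass the real path as the first argument.
    pdf_path = sys.argv[1] if len(sys.argv) > 1 else "first_proof_solutions.pdf"
    print("match" if matches_commitment(pdf_path) else "MISMATCH")
```

A match only shows that the released file is byte-for-byte the one that was hashed before the deadline; it says nothing about whether the proofs in it are correct.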