Alex

1.4K posts

@alexinbinary

Co-Founder & CEO @polarityco | CS & Math @UWaterloo | prev @Shopify

San Francisco · Joined August 2018

980 Following · 1.4K Followers

General Catalyst just co-led a $31.5 million seed round into a blatant rip-off of my company, Kled.

(skip to 40 seconds if you want to skip context)

I would typically not speak on things like this, but this level of blatant copycatting is egregious and completely unacceptable, and needs to be made an example of.

This is one of hundreds of YC startups that have engaged in this disgusting behavior. Unimaginative slop that continues to get rewarded due to nepotism.

Yuri Sagalov@yuris

Super excited to co-lead @LuelCompanyAI’s $31.2M seed round. There are certain teams you meet where you know within 5 minutes that you want to partner with them. Luel is one of those teams. William and Inigo are incredibly ambitious founders who understand the human data bottleneck from the inside out. They've built Luel to create a scalable, reliable supply of that data, something that will be foundational to the next generation of AI.

My co-founder put me as a 'notable speaker' for Toronto Tech Week and I got selected.

If you wanna hear me talk, register here:

luma.com/mq5t6vp2

I love pushing the limits of what I can do as an engineer.

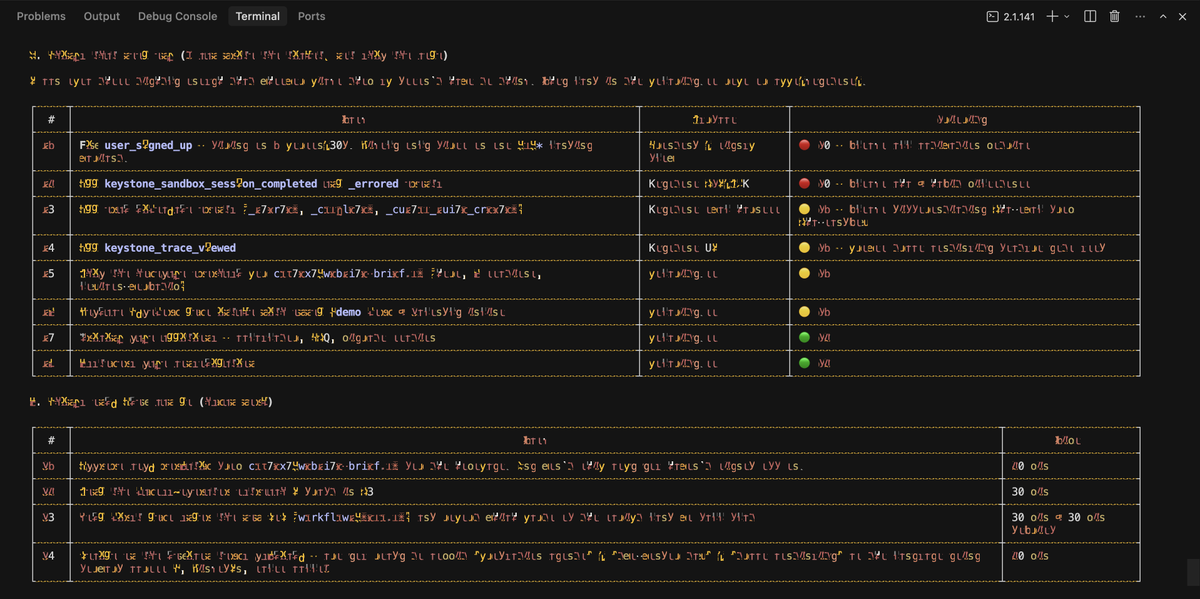

I started this week with the goal of spinning up 1,000 concurrent sandboxes as a limit test.

Turns out @polarityco can 10x this and run 10,000.

Never stop being curious.

And it runs at SCALE.

10,000 sandboxes to be exact.

Built this at Polarity. Get started testing your agents today with code 'PLG'.

polarity.so/keystone

Open-sourced one of our internal DBs! Super fast at agent trace querying + full-text search (FTS)

shane ☯︎@shanebarakat_

This works really well btw, at the end of your query ask your LLM to "structure your response as HTML", then view the generated file in your browser. I've also had some success asking the LLM to present its output as slideshows, etc.

More generally, imo audio is the human-preferred input to AIs but vision (images/animations/video) is the preferred output from them. Around a third of our brains are a massively parallel processor dedicated to vision; it is the 10-lane superhighway of information into the brain. As AI improves, I think we'll see a progression that takes advantage:

1) raw text (hard/effortful to read)

2) markdown (bold, italic, headings, tables, a bit easier on the eyes) <-- current default

3) HTML (still procedural with underlying code, but a lot more flexibility on the graphics, layout, even interactivity) <-- early but forming new good default

...4,5,6,...

n) interactive neural videos/simulations

Imo the extrapolation (though the technology doesn't exist just yet) ends in some kind of interactive videos generated directly by a diffusion neural net. Many open questions as to how exact/procedural "Software 1.0" artifacts (e.g. interactive simulations) may be woven together with neural artifacts (diffusion grids), but generally something in the direction of the recently viral x.com/zan2434/status…

There are also improvements necessary and pending at the input. Neither audio nor text nor video alone is enough, e.g. I feel a need to point/gesture to things on the screen, similar to all the things you would do with a person physically next to you and your computer screen.

TLDR: The input/output mind meld between humans and AIs is ongoing and there is a lot of work to do and significant progress to be made, way before jumping all the way into neuralink-esque BCIs and all that. As for what's worth exploring at the current stage, hot tip: try asking for HTML.
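The "ask for HTML, then open it in your browser" tip could be scripted roughly like this. It is a minimal sketch, not anything from the post itself: `ask_llm` is a hypothetical callable standing in for whatever LLM client you use, and the helper names (`build_prompt`, `extract_html`, `ask_for_html`) and the `response.html` filename are assumptions made for illustration.

```python
import tempfile
import webbrowser
from pathlib import Path
from typing import Callable, Optional

# Instruction appended to the end of the query, as the post suggests.
HTML_SUFFIX = "\n\nStructure your response as HTML."
FENCE = "`" * 3  # markdown code fence some models wrap their HTML in


def build_prompt(query: str) -> str:
    """Append the HTML formatting request to the end of the user's query."""
    return query.rstrip() + HTML_SUFFIX


def extract_html(response: str) -> str:
    """Drop a surrounding markdown code fence if the model added one."""
    text = response.strip()
    if text.startswith(FENCE):
        lines = text.splitlines()[1:]  # skip the opening fence line
        if lines and lines[-1].strip() == FENCE:
            lines = lines[:-1]  # skip the closing fence line
        text = "\n".join(lines)
    return text


def ask_for_html(
    query: str,
    ask_llm: Callable[[str], str],  # hypothetical: takes a prompt, returns text
    out_dir: Optional[str] = None,
    open_browser: bool = True,
) -> Path:
    """Send the query, save the HTML reply to a file, optionally open it."""
    html = extract_html(ask_llm(build_prompt(query)))
    directory = Path(out_dir) if out_dir else Path(tempfile.mkdtemp())
    path = directory / "response.html"
    path.write_text(html, encoding="utf-8")
    if open_browser:
        # as_uri() needs an absolute path, hence resolve()
        webbrowser.open(path.resolve().as_uri())
    return path
```

To use it, pass in a function that wraps your own LLM client, e.g. `ask_for_html("Compare these three plans", my_client_call)`; the generated file then opens in the default browser.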

Thariq@trq212
