Alex Peng

89 posts

@alexpeng

posttraining @xai, formerly @cognition, @windsurf, @stanford

xAI has launched Grok 4.3, achieving 53 on the Artificial Analysis Intelligence Index with improved agentic performance, ~40% lower input price, and ~60% lower output price than Grok 4.20.
The release of Grok 4.3 places @xAI just above Muse Spark and Claude Sonnet 4.6 on the Intelligence Index, and 4 points ahead of the latest version of Grok 4.20. Grok 4.3 improves its Artificial Analysis Intelligence Index score while reducing cost to run the benchmark suite.
Key Takeaways:
➤ Grok 4.3 improves on cost-per-intelligence relative to Grok 4.20 0309 v2: it scores higher on the Intelligence Index while costing less to run the full benchmark suite. Grok 4.3 costs $395 to run the Artificial Analysis Intelligence Index, around 20% lower than Grok 4.20 0309 v2, despite using more output tokens. This makes it one of the lower-cost models at its intelligence level.
➤ Large increase in real-world agentic task performance: The largest single benchmark improvement is on GDPval-AA, where Grok 4.3 scores an Elo of 1500, up 321 points from Grok 4.20 0309 v2’s score of 1179, surpassing Gemini 3.1 Pro Preview, Muse Spark, GPT-5.4 mini (xhigh), and Kimi K2.5. Grok 4.3 narrows the gap to the leading model on GDPval-AA, but still trails GPT-5.5 (xhigh) by 276 Elo points, with an expected win rate of ~17% against GPT-5.5 (xhigh) under the standard Elo formula.
➤ Grok 4.3 performs strongly on instruction following and agentic customer support tasks. It gains 5 points on 𝜏²-Bench Telecom to reach 98%, in line with GLM-5.1. Grok 4.3 maintains an 81% IFBench score from Grok 4.20 0309 v2.
➤ Gains 8 points on AA-Omniscience Accuracy, but at the cost of an 8-point drop in AA-Omniscience Non-Hallucination Rate, so Grok 4.20 0309 v2 still leads AA-Omniscience Non-Hallucination Rate, followed by MiMo-V2.5-Pro, in line with Grok 4.3.
Congratulations to @xAI and @elonmusk on the impressive release!
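For anyone who wants to check the ~17% figure: a minimal sketch of the standard Elo expected-score formula, assuming the usual 400-point scale. The function and variable names are illustrative, not from Artificial Analysis, and the leader's rating is only inferred from the quoted 276-point gap.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score (win probability, ignoring ties) of A against B
    under the standard Elo formula with a 400-point scale."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Figures quoted in the post.
previous_rating = 1179                  # Grok 4.20 0309 v2 on GDPval-AA
grok_rating = previous_rating + 321     # Grok 4.3, i.e. 1500
leader_rating = grok_rating + 276       # inferred from the quoted 276-point gap

win_rate = elo_expected_score(grok_rating, leader_rating)
print(f"Expected win rate vs. the leader: {win_rate:.1%}")
```

Only the rating difference matters here, so the same result holds for any pair of models 276 points apart.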

We estimate that Claude Opus 4.6 has a 50%-time-horizon of around 14.5 hours (95% CI of 6 hrs to 98 hrs) on software tasks. While this is the highest point estimate we’ve reported, this measurement is extremely noisy because our current task suite is nearly saturated.

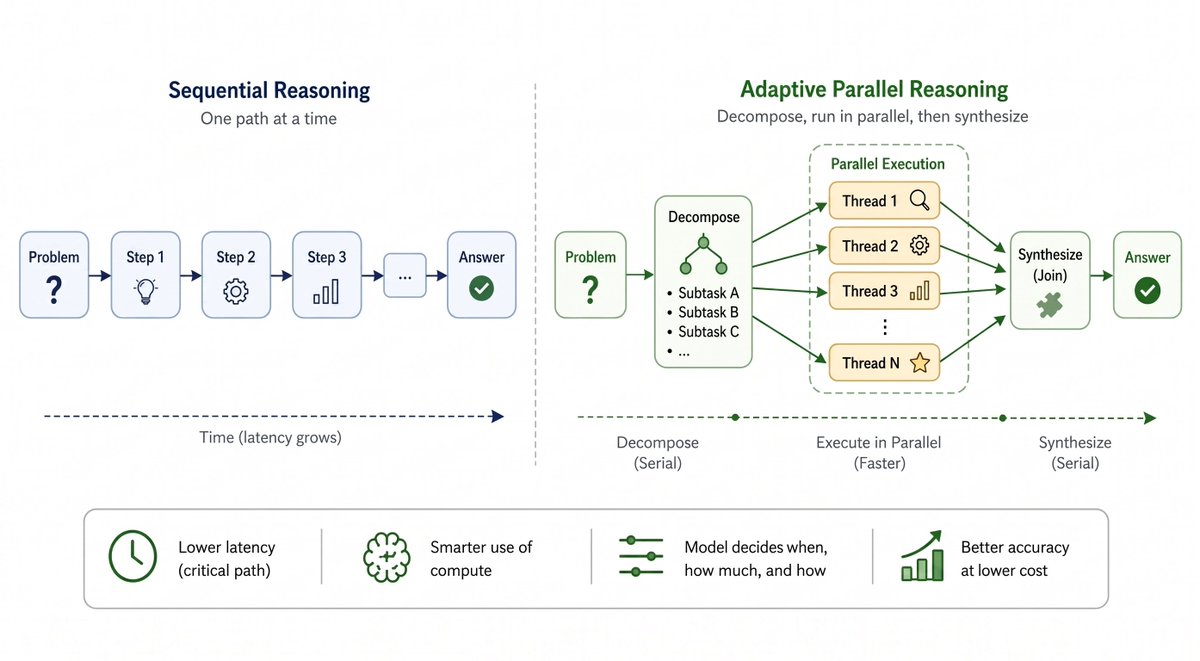

so after 24 hours we tallied early returns (from people koding on Saturdays mind you): @xai Grok is currently the #3 coding model in the world by early voters (after 1 day and thousands of full agent votes). it's really interesting to see the order shaken up, and there’s a reason why: SPEED IS ALL YOU NEED
one thing I was keen on contributing to the evals community was an arena that doesn't penalize speed. aka, simply allow users to reward models that are “good enough but faster”, which is a core thesis @cognition has been pursuing with SWE1.5. 3 human-ai turns with a “good enough but fast” model often beat 1 long turn with a smart but slow model.
All coding model evals set up to date basically ignore speed, hence people just happily RL to take thousands of CoT tokens. we will make those tokens count, or else.

has anyone seen an agentic deployment in a large enterprise work without an eval?

Code was the killer app for AI cause it verifies really well. Linting, unit tests and instant feedback for frontend make it so outputs are verified in real time. If you want to think about what gets automated by AI next look at the verification mechanisms. We’re seeing this start in fields like math and biology which have excellent verification systems. Art went quickly because you can instantly tell if it’s good enough. All of this is still maturing but the rate at which the industry matures is directly related to how fast the verification loop is. Medicine and law will have a p99 problem and take forever to diffuse. What’s next? Probably accounting and finance since they’re super easy to verify.

New Blogpost: How to game the METR plot🚨
In 2025, a single graph changed AGI timelines, investments, research priorities, model quality assessments and much more. But if you squint harder, only 14 prompts shaped AI discourse over this year. That's all the data in the 1-4 hour horizon length regime that matters. 🕵️
What's more? A majority of these are about Cybersecurity capture the flag contests, and training a Machine Learning model.
> Post-train your model on CTF and ML codebases
> profit 📈! Its METR horizon length will increase.
Exactly what OpenAI has been targeting in its Codex model releases... and is Anthropic underperforming in the 2-4hr range because it mostly consists of cybersecurity, which is dual-use for safety?
To be clear, I think it's an excellent idea to track horizon lengths instead of benchmark accuracy. But under the current modelling assumption of success probability being a logistic function of task length, SWAA+HCAST accuracy improvements alone might explain the exponential progress in horizon length 🔎
In the blog, I show detailed evidence for why we need to stop overindexing on the METR plot. Share it with anyone you see making decisions based on where the latest model lands on the METR plot. shash42.substack.com/p/how-to-game-…
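A toy sketch of the modelling assumption mentioned in the post, where success probability is a logistic function of task length. This is not METR's actual fitting code: the log2 parameterization, the function names, and the intercept and slope values are all hypothetical, chosen only to show how a uniform accuracy gain moves the 50% horizon.

```python
import math


def success_probability(task_minutes: float, intercept: float, slope: float) -> float:
    """Logistic model in log2(task length); a higher intercept means higher
    success probability at every task length."""
    z = intercept - slope * math.log2(task_minutes)
    return 1.0 / (1.0 + math.exp(-z))


def horizon_50(intercept: float, slope: float) -> float:
    """Task length in minutes at which the modelled success probability is 0.5,
    found by solving intercept - slope * log2(t) = 0 for t."""
    return 2.0 ** (intercept / slope)


slope = 0.6  # hypothetical value, held fixed across "model generations"
for intercept in (3.0, 3.6, 4.2):  # hypothetical, equally spaced accuracy gains
    h = horizon_50(intercept, slope)
    p_short = success_probability(4.0, intercept, slope)  # a 4-minute task
    print(f"intercept={intercept:.1f}  p(4 min task)={p_short:.2f}  50% horizon={h:.0f} min")
```

In this sketch each equal step in the intercept multiplies the 50% horizon by the same factor, so steady accuracy gains on short tasks show up as exponential growth in horizon length even when nothing about long tasks is measured directly.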