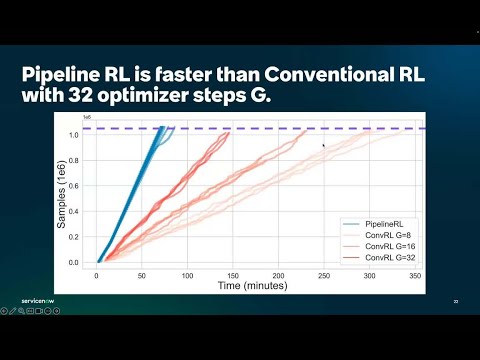

🚀 The RL community keeps pushing boundaries, from better on-policy data and partial rollouts to in-flight weight updates that mix KV caches from different model versions during inference. Continuing to generate while the weights change and the cached KV states go stale sounds wild, but that's exactly what PipelineRL makes work. vLLM is proud to power this kind of modular, cutting-edge RL innovation. Give it a try and share your thoughts!