Alfaxad

1.6K posts

Alfaxad

@alfxad

alfa. working on something beautiful!! @NadhariAI

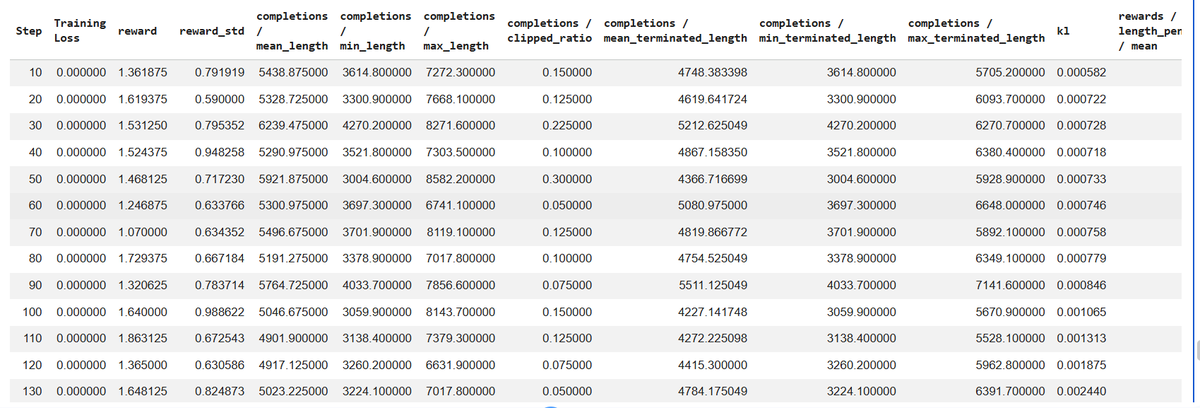

Three days ago I left autoresearch tuning nanochat for ~2 days on depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement), this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly manually well-tuned project. This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc etc. This is the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things e.g.: - It noticed an oversight that my parameterless QKnorm didn't have a scaler multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work. - It found that the Value Embeddings really like regularization and I wasn't applying any (oops). - It found that my banded attention was too conservative (i forgot to tune it). - It found that AdamW betas were all messed up. - It tuned the weight decay schedule. - It tuned the network initialization. This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism. github.com/karpathy/nanoc… All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train. py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges. And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.

BREAKING: Nominal Hits $1B Valuation — Founders Fund Preempts $80M B-2 Acceleration Round Just 10 months after @Nominal_io's $75M Series B led by Sequoia's Alfred Lin CEO Cameron McCord & Trae Stephens (Founders Fund + Anduril) join Sourcery to discuss: - $155M raised in 10 months, nearly $200M total funding - Early validation at Anduril - 4 of the 5 largest defense primes are now customers - Reducing major hardware test campaigns by 50–60% (which can cost ~$3M per day) - Strategy: platform expansion, potential M&A, and aggressive hiring - Anduril LORE All systems Nominal. @CameronLMcCord @traestephens @Alfred_Lin 𝐓𝐈𝐌𝐄𝐒𝐓𝐀𝐌𝐏𝐒 (00:00) Trae Stephens & Cameron McCord (01:11) Nominal raises $80M from Founders Fund (03:32) Why Founders Fund made the investment (05:22) Palantir, FF, Anduril: Trae is a "Slashie" (07:14) Nominal: the GitHub for hardware testing (12:33) Why Sequoia believed Nominal’s TAM was much bigger (15:36) Inside Nominal’s growing defense customer base (17:32) Why government hardware testing still relies on Excel and MATLAB (22:22) Cutting hardware testing time by up to 60% (26:55) How AI changes hardware development (37:22) Why the government is backing new defense tech companies (33:35) Nominal's sales strategy (45:44) Competing with legacy software giants (46:24) Recruiting top engineers (50:56) Early Anduril LORE: Stories from the desert

Anil Seth argues that consciousness is almost certainly rooted in biological life—metabolism, embodiment, and self-regulating processes—making truly conscious AI on silicon extremely unlikely. He warns that the persistent myth of conscious machines risks ethical disasters, societal confusion, and devaluing our own living experience, urging caution and rejection of this overhyped narrative. | @anilkseth in @NoemaMag noemamag.com/the-mythology-… noemamag.com/the-mythology-…