AGI is *so* 2025. Memory is 2026.

40K posts

@andygrossberg

https://t.co/uF7Q4whYEO ONCE: AI SEO BEFORE: https://t.co/PQQDvRVN6o, @ColonyNFT, @TribesNFT, Image, Jon Peddie, MAXIS, Studio Cutie

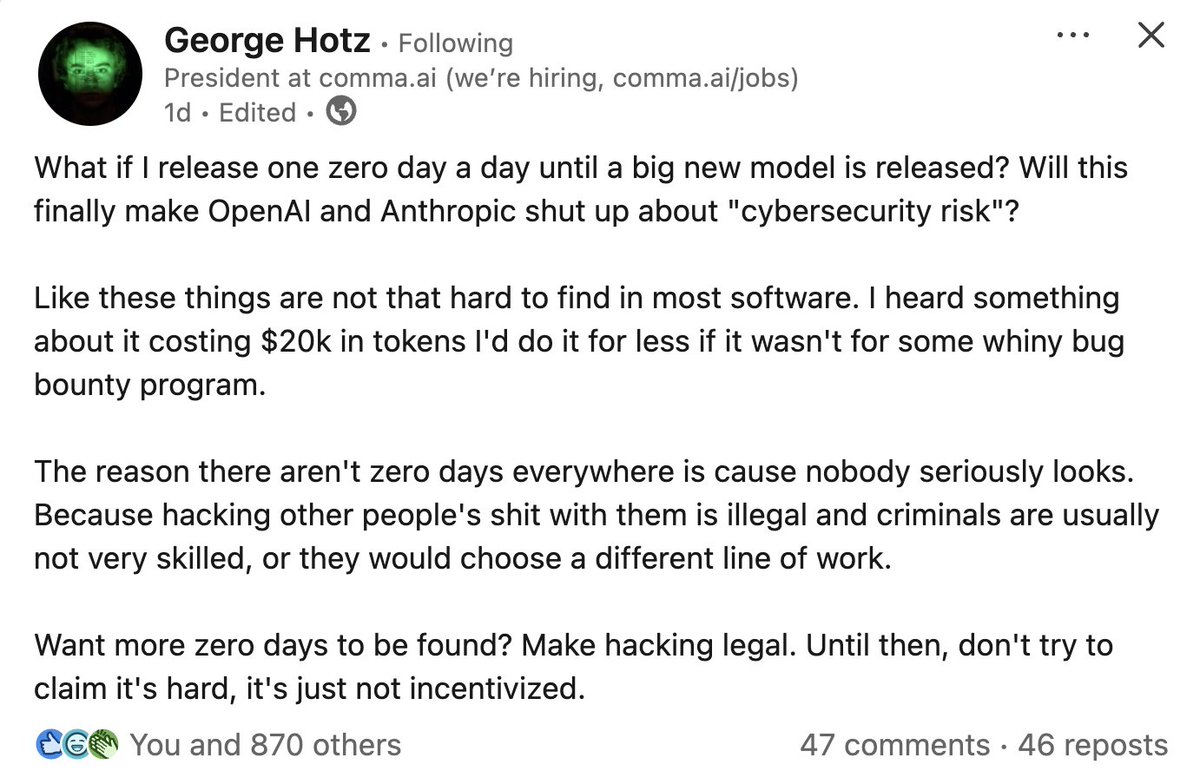

wtf. This is the atmosphere certain people and orgs have directly / indirectly caused.

When Orban, Putin, and Trump are all gone, the world will be a brighter, better, and safer place.

Tim- “I want leftists to treat Democrats like they treat Republicans.” Leftists- “ok we’re not voting for Democrats.” Tim- “no not like that”

Why are there basically no major cities on this stretch of the US West Coast?

Centrist libs are diet Republicans and we need to start treating them like the Republicans they want to be.

Many Democratic operatives and donors are quietly hoping she passes on a run. huffpost.com/entry/harris-f…