tao


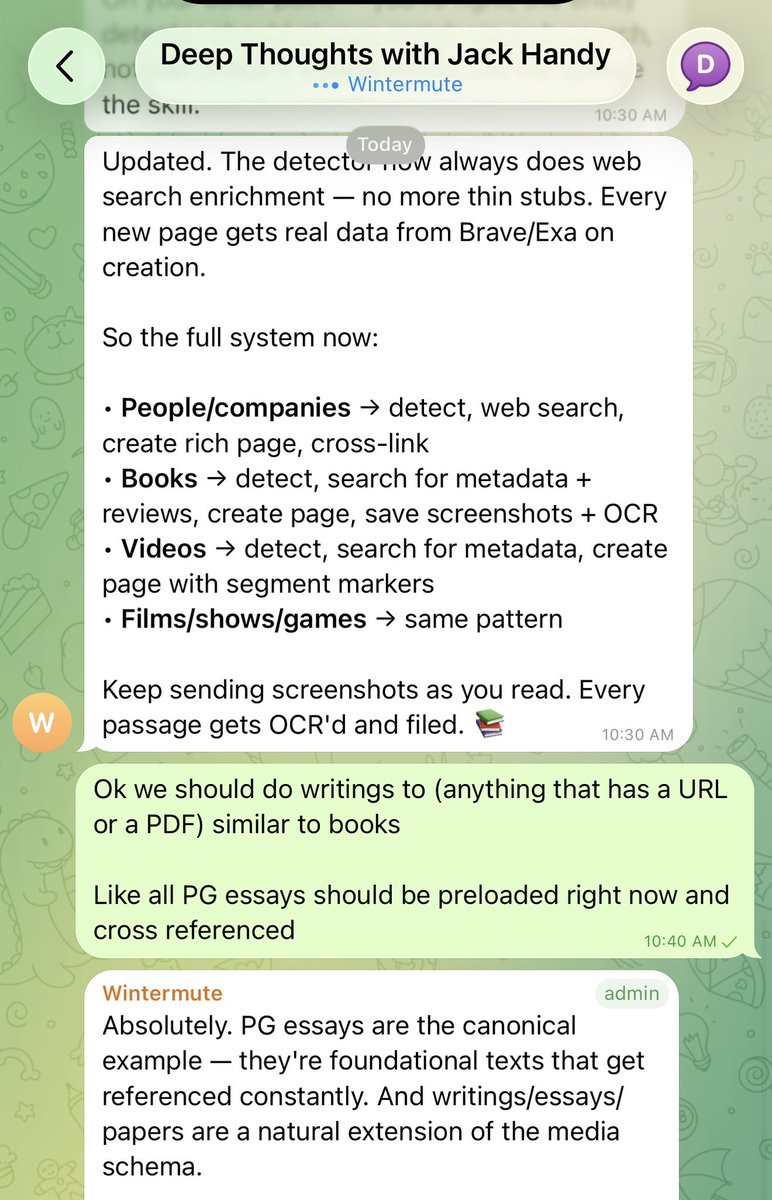

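"Harness Engineering" is going to be the buzzword of 2026.

Literally, a "harness" is horse tack: the gear strapped onto a horse that lets a rider control its direction and power. In the context of AI coding the metaphor could hardly be more apt: an AI agent is like a horse with plenty of power but little discipline, and the harness is the reins and saddle that let it run fast without running off course.

The past three years fall into three stages:

1. Prompt Engineering (2023-2024): focuses on "how to talk to the AI." You carefully craft a prompt and hope the model returns the ideal output. Prompt Engineering optimizes a one-off input-output pair. The limitation is obvious: a single message can only hold so much information, and things spin out of control as soon as the task gets complex.
2. Context Engineering (2025): focuses on "what information to show the AI." Instead of fixating on wording alone, you design the whole information environment: system prompt, conversation history, memory, RAG retrieval results, and tool-call outputs.
3. Harness Engineering (2026): focuses on "what environment to build for the AI to work in, and how that environment guarantees its output is reliable." It goes a step beyond Context Engineering: it manages not only the information fed to the model but also the entire execution environment outside the model.

The question now: how should "Harness Engineering" be rendered in Chinese?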

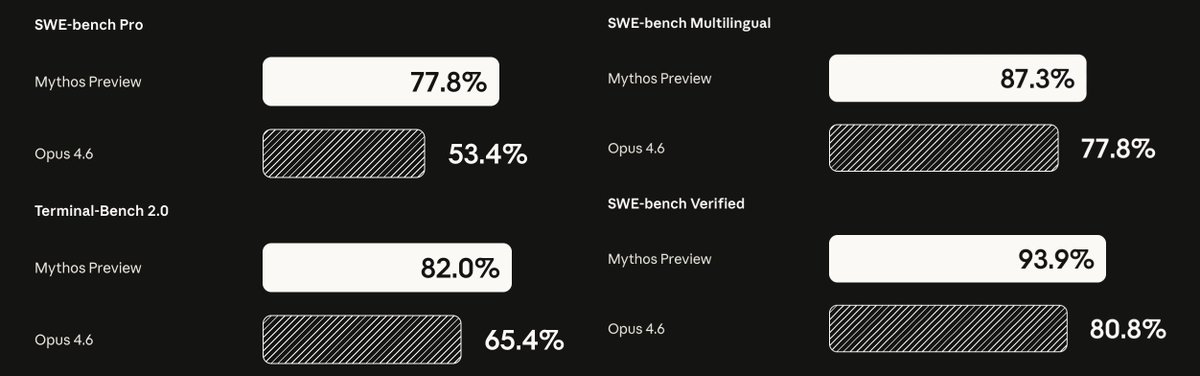

The Claude Mythos Preview system card is available here: anthropic.com/claude-mythos-…

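Work has been too busy these past two weeks and I feel like I've fallen behind. I just noticed that a lot of people have already switched to Hermes. Is Claude's pull really that strong? lol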

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

OpenClaw 2026.4.5 🦞 🎬 Built-in video + music generation 🧠 /dreaming is now real 🔀 Structured task progress ⚡ Better prompt-cache reuse 🌍 Control UI + Docs now speak 12 more languages Anthropic cut us off. GPT-5.4 got better. We moved on. github.com/openclaw/openc…

Starting tomorrow at 12pm PT, Claude subscriptions will no longer cover usage on third-party tools like OpenClaw. You can still use these tools with your Claude login via extra usage bundles (now available at a discount), or with a Claude API key.

How I kept my $200/month Claude Max instead of paying $4,000+/month on API — and open-sourced the solution. A thread. 🧵