Arakoo Devs

5K posts

@arakoodev

SAP for AI Agents. A revenue-first framework that creates a double-entry ledger for every agent operation

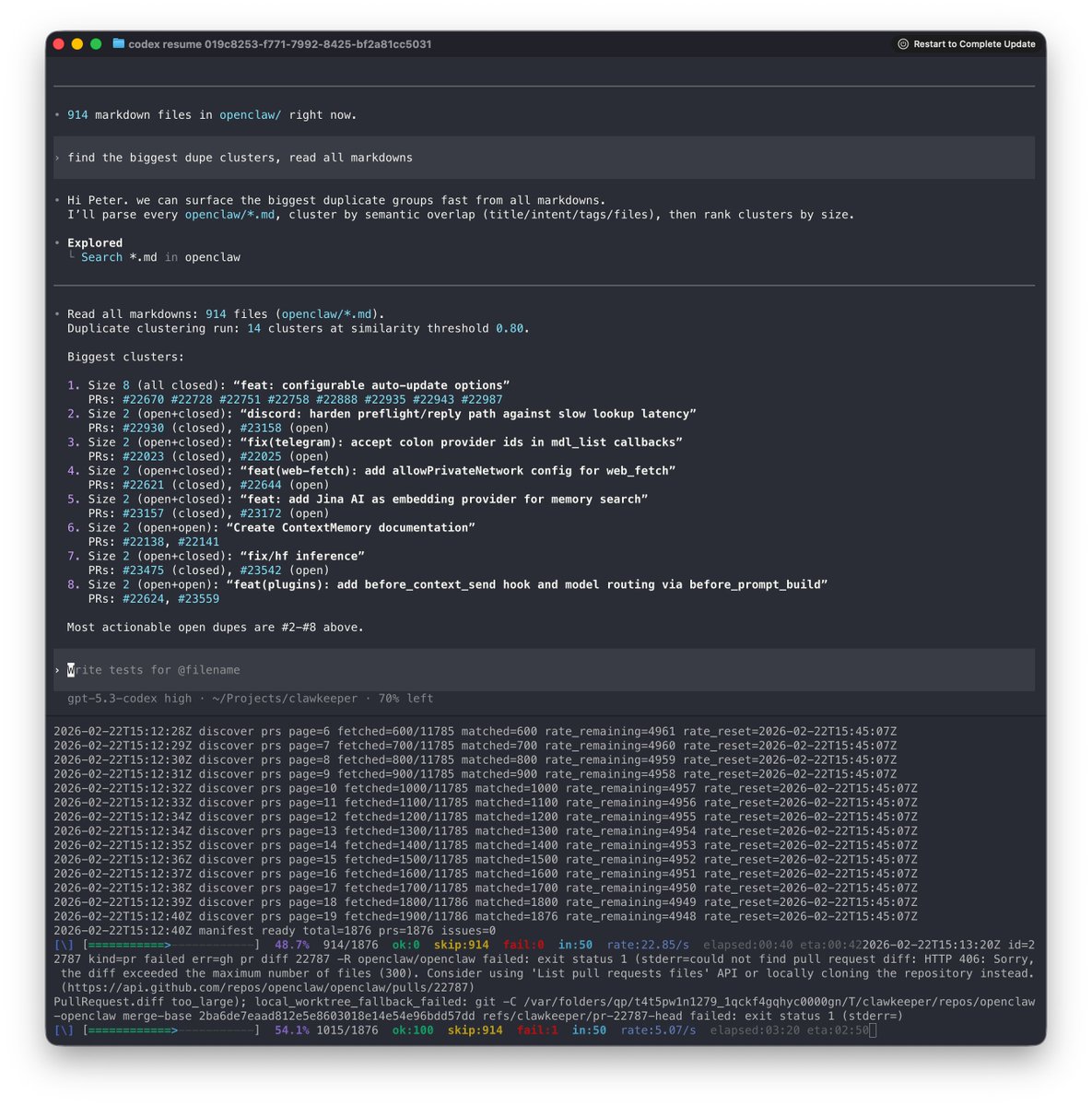

Secure your coding agents with virtual filesystems and better document understanding.
Building safe AI coding agents requires solving two critical challenges: filesystem access control and handling unstructured documents. We've created a solution using AgentFS, LlamaParse, and @claudeai.
🛡️ Virtual filesystem isolation: agents work with copies, not your real files, preventing accidental deletions while maintaining full functionality
📄 Enhanced document processing: LlamaParse converts PDFs, Word docs, and presentations into high-quality text that agents can actually understand
⚡ Workflow orchestration: LlamaIndex Workflows provide stepwise execution with human-in-the-loop controls and resumable sessions
🔧 Custom tool integration: replace built-in filesystem tools with secure MCP server alternatives that enforce safety boundaries
This approach uses AgentFS (by @tursodatabase) as a SQLite-based virtual filesystem, our LlamaParse for state-of-the-art document extraction, and Claude for the coding interface, all orchestrated through LlamaIndex Agent Workflows.
Read the full technical deep-dive with implementation details: llamaindex.ai/blog/making-co…
Find the code on GitHub: github.com/run-llama/agen…
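To make the "agents work with copies" idea concrete, here is a minimal sketch of a SQLite-backed sandbox using only Python's standard library. It is not the AgentFS API: the `SandboxFS` class, its method names, and the `demo` helper are hypothetical and exist only to illustrate the isolation principle the post describes.

```python
import sqlite3
import tempfile
from pathlib import Path


class SandboxFS:
    """Hypothetical overlay (not AgentFS): stores copies of files in SQLite."""

    def __init__(self, root: Path):
        self.db = sqlite3.connect(":memory:")
        self.db.execute("CREATE TABLE files (path TEXT PRIMARY KEY, data BLOB)")
        # Snapshot every regular file under root; the originals are only read.
        for p in sorted(root.rglob("*")):
            if p.is_file():
                rel = p.relative_to(root).as_posix()
                self.db.execute("INSERT INTO files VALUES (?, ?)", (rel, p.read_bytes()))
        self.db.commit()

    def read(self, path: str) -> bytes:
        row = self.db.execute("SELECT data FROM files WHERE path = ?", (path,)).fetchone()
        if row is None:
            raise FileNotFoundError(path)
        return row[0]

    def write(self, path: str, data: bytes) -> None:
        self.db.execute("INSERT OR REPLACE INTO files VALUES (?, ?)", (path, data))
        self.db.commit()

    def delete(self, path: str) -> None:
        # Removes only the sandboxed copy, never the file on disk.
        self.db.execute("DELETE FROM files WHERE path = ?", (path,))
        self.db.commit()

    def listdir(self) -> list[str]:
        return [r[0] for r in self.db.execute("SELECT path FROM files ORDER BY path")]


def demo() -> tuple[list[str], bool]:
    """Let an 'agent' delete and create files, then check the real directory."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "notes.txt").write_text("keep me")
        fs = SandboxFS(root)
        fs.delete("notes.txt")              # agent deletes inside the sandbox
        fs.write("new.py", b"print('hi')")  # agent creates a file in the sandbox
        return fs.listdir(), (root / "notes.txt").exists()
```

In this sketch a delete issued by the agent changes only the database rows, so the file on disk survives; a real system would also need a reviewed path for writing approved changes back.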

I just ran a load test of starting a million sandboxes on our sandbox infra. Managed without any hiccups! We've been tuning them for a while and are now able to support multiple 10x growths this year without rearchitecting. GKE is truly impressive!

🚨 Alibaba just quietly dropped a vector database that destroys Pinecone, Chroma, and Weaviate.
It's called Zvec and it runs directly inside your application: no server, no config, no infrastructure costs.
No Docker. No cloud bills. No DevOps nightmare.
Built on Proxima, Alibaba's battle-tested vector search engine powering their own production systems at scale.
The numbers don't lie:
→ Searches billions of vectors in milliseconds
→ pip install zvec and you're searching in under 60 seconds
→ Dense + sparse vectors + hybrid search in a single call
And it runs everywhere:
→ Notebooks
→ Servers
→ Edge devices
→ CLI tools
100% open source. Apache 2.0 license.
This is the vector DB the RAG community has been waiting for: production-grade performance without the production-grade headache.
Link in the first comment 👇
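For readers unfamiliar with "dense + sparse + hybrid search", the following pure-Python sketch shows one common way to combine the two signals: a weighted sum of dense cosine similarity and a sparse term-weight dot product. It does not use Zvec or reflect its API; the function names, the `alpha` weight, and the brute-force scan are all assumptions made for illustration.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def sparse_dot(a: dict[str, float], b: dict[str, float]) -> float:
    """Dot product of two sparse term-weight maps over their shared terms."""
    return sum(w * b[t] for t, w in a.items() if t in b)


def hybrid_search(docs, q_dense, q_sparse, alpha=0.5, k=2):
    """Rank docs by alpha * dense score + (1 - alpha) * sparse score.

    docs maps a doc id to a (dense_vector, sparse_weights) pair.
    This is a brute-force scan, fine for a demo but not for large corpora.
    """
    scored = []
    for doc_id, (dense, sparse) in docs.items():
        score = alpha * cosine(q_dense, dense) + (1 - alpha) * sparse_dot(q_sparse, sparse)
        scored.append((score, doc_id))
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical toy corpus: two-dimensional embeddings plus keyword weights.
DOCS = {
    "a": ([1.0, 0.0], {"cat": 1.0}),
    "b": ([0.0, 1.0], {"dog": 1.0}),
    "c": ([1.0, 1.0], {}),
}
```

Calling `hybrid_search(DOCS, [1.0, 0.0], {"cat": 1.0})` ranks document `a` first because it matches on both the embedding and the keyword. Production engines replace the linear scan with an approximate nearest-neighbor index.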