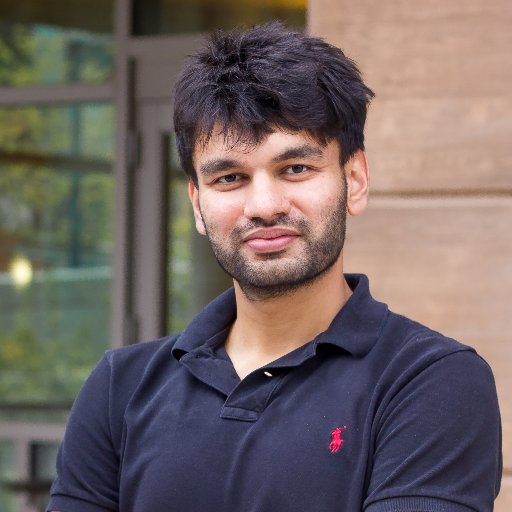

Vincent Sitzmann

@vincesitzmann

Building AI that learns by interacting with the world. Assistant Professor @ MIT, leading the Scene Representation Group (https://t.co/h5gvhLYZj4).

🚀 🚀 🚀 Excited to share our new paper: Remember to be Curious: Episodic Context and Persistent Worlds for 3D Exploration. What does it take for an agent to stay curious in a 3D world? The answer is memory. 🌐 Project: recuriosity.github.io 📄 Paper: arxiv.org/abs/2605.22814 💻 Code: github.com/recuriosity/re…

New paper: AsymFlow🔥

JiT x0-prediction is not enough for pixel generation. Better to keep the velocity in a low-rank subspace:

- 1.57 FID on ImageNet (best pixel flow model)
- Finetunes FLUX.2 klein into pixel space and beats the original on HPSv3/DPG/GenEval (#1 overall on HPSv3)

(1/7)

Developers who got early access to Reactor have been building experiences that were not possible 6 months ago. We're hiring the people who want to build what comes next: reactor.inc/careers

Introducing Generative View Stitching (GVS), a non-autoregressive sampling method for length extrapolation of video diffusion models. GVS enables collision-free camera-guided video generation for predefined trajectories, including Oscar Reutersvärd's Impossible Staircase (1/9).

Yay, finally! Introducing Vision Banana🍌 from @GoogleDeepMind, our unified model that outperforms SoTA specialist models on various vision tasks! By treating 2D/3D vision tasks as image generation, we unlock a new foundation for CV. Project page: vision-banana.github.io (1/5)

Super grateful to be part of this amazing team behind GPT Image 2!

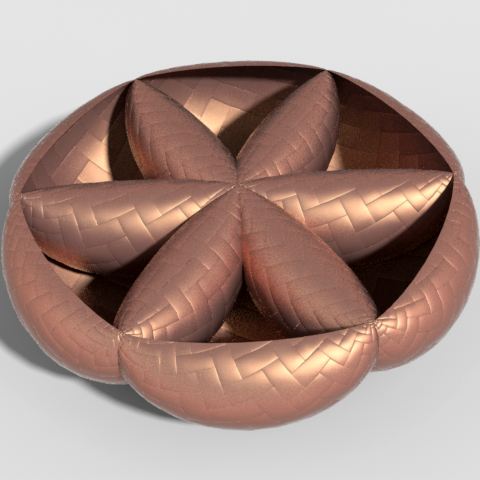

Why do diffusion models produce new images instead of just memorizing the dataset? We show that they learn pixel correlation patterns from the data and therefore denoise locally, which promotes generalization. To test this idea, we compare trained diffusion models with a training-free algorithm that mixes local patches from the dataset. Surprisingly, this simple procedure already reproduces many properties of the trained models. 🧵 Check out this thread for more details about our NeurIPS Spotlight paper with @yuancy, @JustinMSolomon and @vincesitzmann.
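The idea of a training-free denoiser that "mixes local patches from the dataset" can be illustrated with a short sketch. This is not the paper's algorithm. It is a hypothetical, simplified local denoiser that assumes grayscale images, a noise model y = x + σ·ε, and that each noisy patch is compared only against training patches at the same pixel location. Each output pixel is a softmax-weighted mix of training pixels, with weights from a Gaussian likelihood computed over the surrounding patch only. The function name `local_patch_denoiser`, its `patch` parameter, and the edge-padding choice are all illustrative assumptions.

```python
import numpy as np


def local_patch_denoiser(y, dataset, sigma, patch=3):
    """Hypothetical training-free local denoiser (illustrative sketch only).

    y:       noisy image, shape (H, W); assumed y = x + sigma * noise
    dataset: clean training images, shape (N, H, W)
    sigma:   noise standard deviation
    patch:   odd patch side length; each pixel only "sees" this neighborhood
    Returns an estimate of the clean image, shape (H, W).
    """
    assert patch % 2 == 1, "patch size must be odd"
    r = patch // 2
    H, W = y.shape
    # Pad so every pixel has a full patch (edge padding is an arbitrary choice).
    y_pad = np.pad(y, r, mode="edge")
    d_pad = np.pad(dataset, ((0, 0), (r, r), (r, r)), mode="edge")
    out = np.empty_like(y, dtype=float)
    for i in range(H):
        for j in range(W):
            # Noisy patch around (i, j) and the training patches at the same location.
            q = y_pad[i:i + patch, j:j + patch]
            P = d_pad[:, i:i + patch, j:j + patch]
            # Gaussian log-likelihood of each clean patch given the noisy patch.
            logits = -((P - q) ** 2).sum(axis=(1, 2)) / (2 * sigma ** 2)
            w = np.exp(logits - logits.max())  # numerically stable softmax
            w /= w.sum()
            # Output pixel = weighted mix of the training images' center pixels.
            out[i, j] = w @ dataset[:, i, j]
    return out


# Usage: denoise a noisy copy of one training image.
rng = np.random.default_rng(0)
train = rng.random((5, 8, 8))
noisy = train[2] + 0.3 * rng.standard_normal((8, 8))
estimate = local_patch_denoiser(noisy, train, sigma=0.3, patch=3)
```

Because the weights are recomputed per pixel, neighboring pixels can borrow from different training images. The output can therefore be a new image stitched together from local pieces of the dataset. As `patch` grows toward covering the whole image, the weights approach a single posterior over whole training images, which corresponds to memorization.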