Andy Stewart

1.1K posts

@arstew

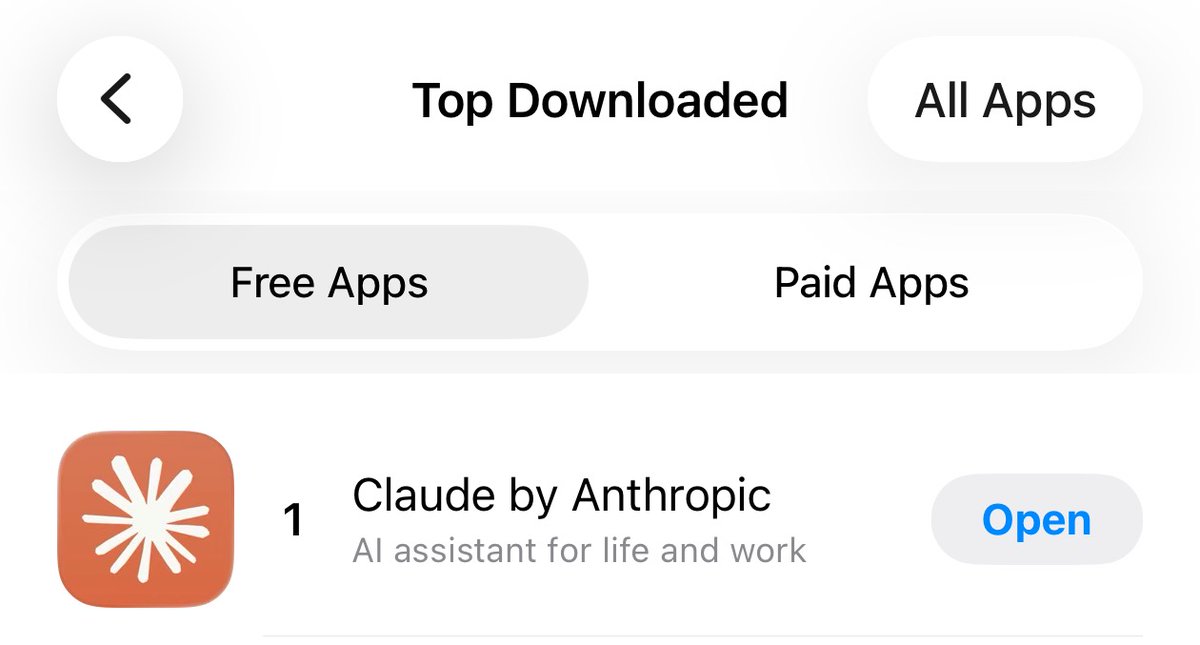

Robots will save us from ourselves.

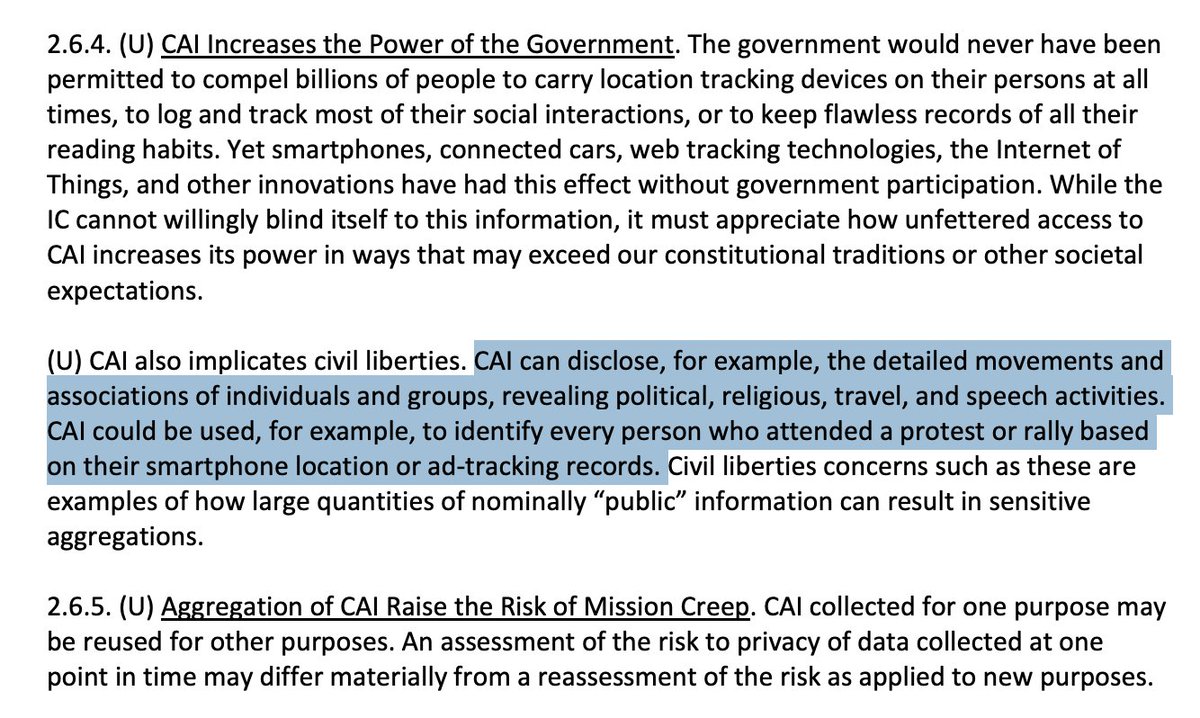

HELL NO! Anthropic is in the right here. The Atlantic: “On Friday afternoon, Anthropic learned that the Pentagon still wanted to use the company’s AI to analyze bulk data collected from Americans.” “That could include information such as the questions you ask your favorite chatbot, your Google search history, your GPS-tracked movements, and your credit-card transactions, all of which could be cross-referenced with other details about your life. Anthropic’s leadership told Hegseth’s team that was a bridge too far, and the deal fell apart. Soon after, Hegseth directed the U.S. military’s contractors, suppliers, and partners to stop doing business with Anthropic.”

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

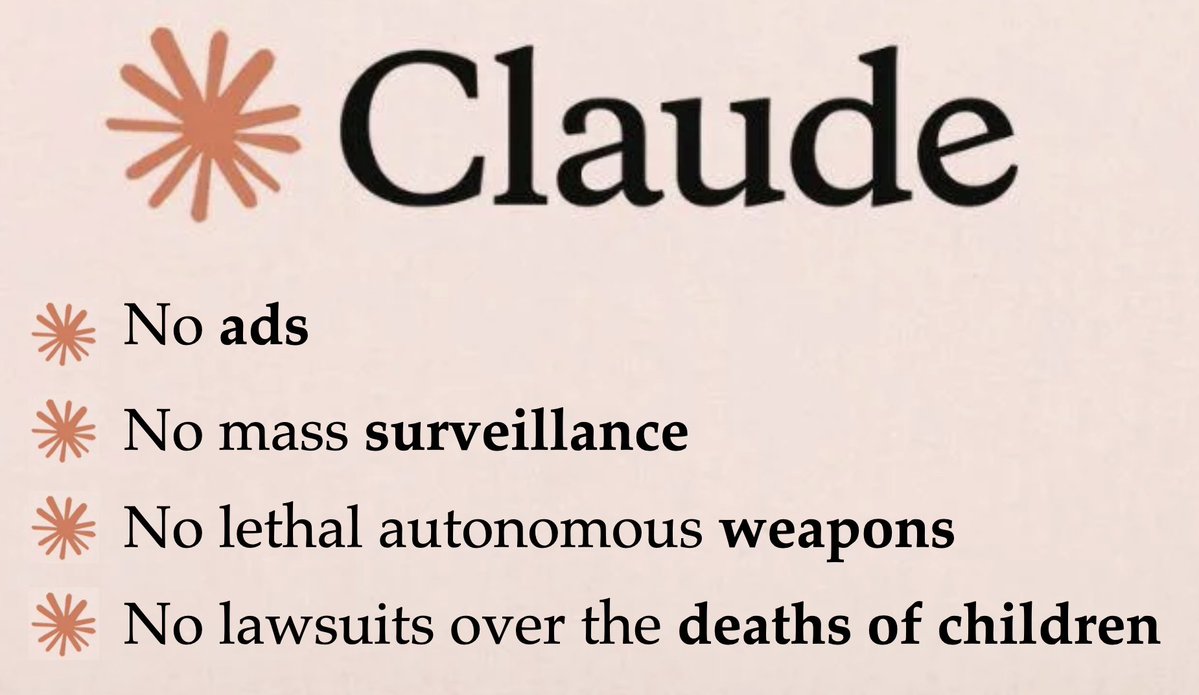

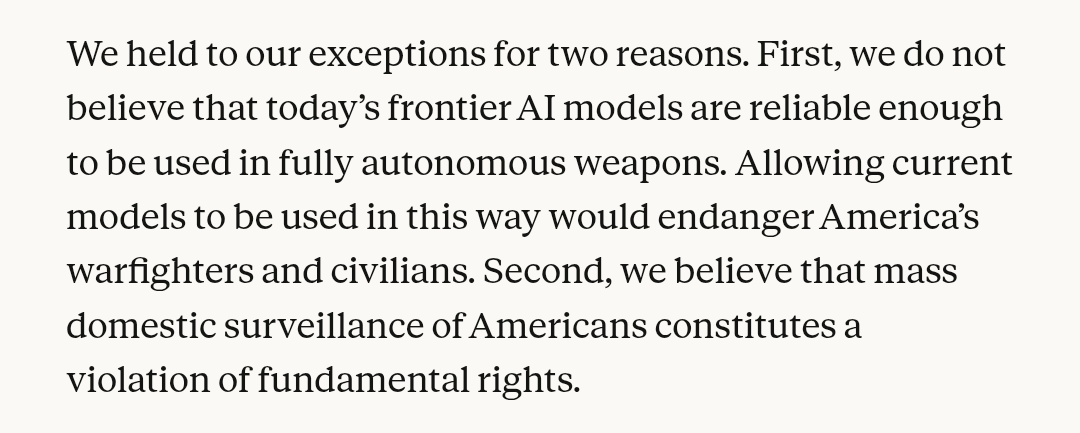

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network. In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome. AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement. We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only. We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements. We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

done

The CEO of Anthropic penned a public letter explaining the danger of the Defense Department's request to remove certain constraints from Claude, and refusing them outright. reason.com/2026/02/27/ant…