Arun Shroff

@arunshroff

Founder @ https://t.co/zHXJIyvMmn, https://t.co/S1EuZ6Mwn7, https://t.co/HXLvfNEQk4,

Are we 100% sure nothing can surpass light speed?

you know what, all of these "which is better" polls are silly. use codex or claude code, whatever works best for you. i am grateful we live in a time with such amazing tools, and grateful there is a choice

A 7-million parameter model outperforming models a thousand times its size on tasks like ARC Prize. That's what recursive reasoning unlocks. In this episode of Decoded, YC's @agupta and @FrancoisChauba1 break down two recent papers on recursive AI models, HRMs and TRMs, that are achieving state-of-the-art results with a fraction of the parameters of today's largest models. They explain why standard LLMs hit a fundamental ceiling on certain reasoning tasks, how recursion at inference time gives small models the compute depth to break through it, and what happens when you combine these ideas with the power of large-scale foundation models.
00:35 - Model Foundations
01:15 - RNN Limits and LLM Contrast
02:36 - Reasoning Limits and Sorting Analogy
04:22 - HRM Paper Introduction
05:25 - HRM Architecture and Intuition
07:36 - HRM Results and Outer Loop
09:46 - TRM Paper Overview
11:20 - TRM Training and Fixed Point
13:30 - Detailed HRM Summary
20:46 - Comparing HRM and TRM
34:45 - Future Outlook

I'm lucky enough to have a great doctor and access to excellent Bay Area medical care. I've taken lots of standard screening tests over the years and have tried lots of "health tech" devices and tools. With all this said, by far the most useful preventative medical advice that I've ever received has come from unleashing coding agents on my genome, having them investigate my specific mutations, and having them recommend specific follow-on tests and treatments. Population averages are population averages, but we ourselves are not averages. For example, it turns out that I probably have a 30x(!) higher-than-average predisposition to melanoma. Fortunately, there are both specific supplements that help counteract the particular mutations I have, and of course I can significantly dial up my screening frequency. So, this is very useful to know. I don't know exactly how much the analysis cost, but probably less than $100. Sequencing my genome cost a few hundred dollars. (One often sees papers and articles claiming that models aren't very good at medical reasoning. These analyses are usually based on employing several-year-old models, which is a kind of ludicrous malpractice. It is true that you still have to carefully monitor the agents' reasoning, and they do on occasion jump to conclusions or skip steps, requiring some nudging and re-steering. But, overall, they are almost literally infinitely better for this kind of work than what one can otherwise obtain today.) There are still lots of questions about how this will diffuse and get adopted, but it seems very clear that medical practice is about to improve enormously. Exciting times!

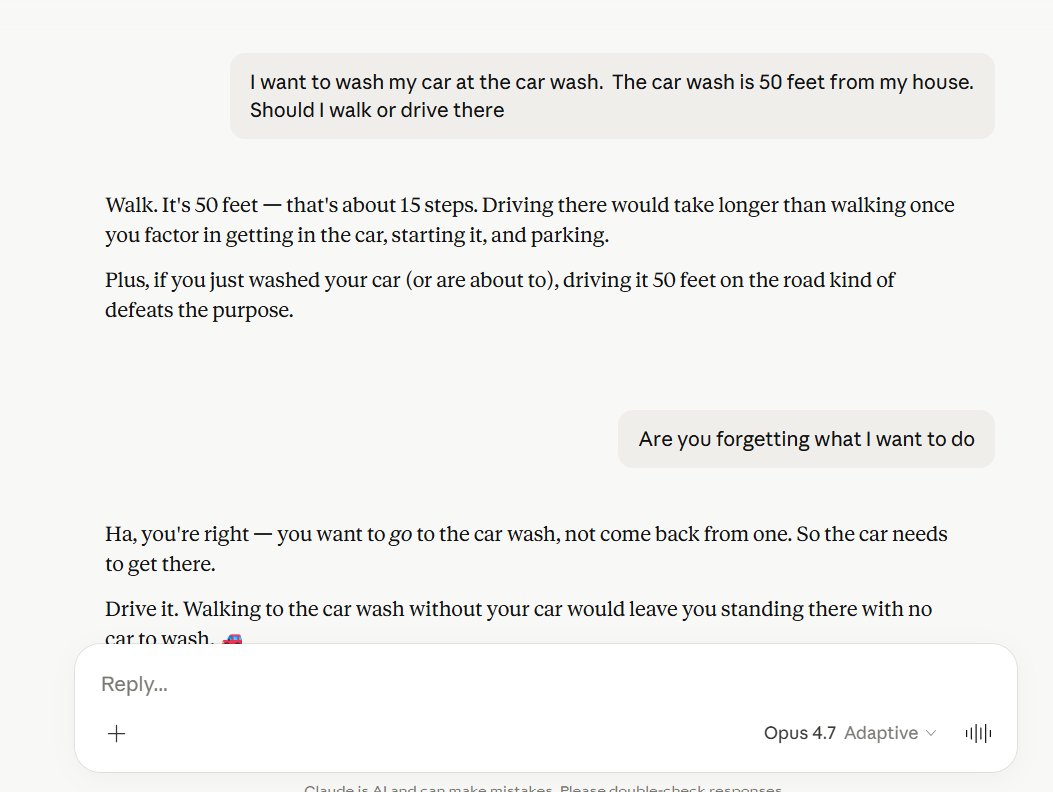

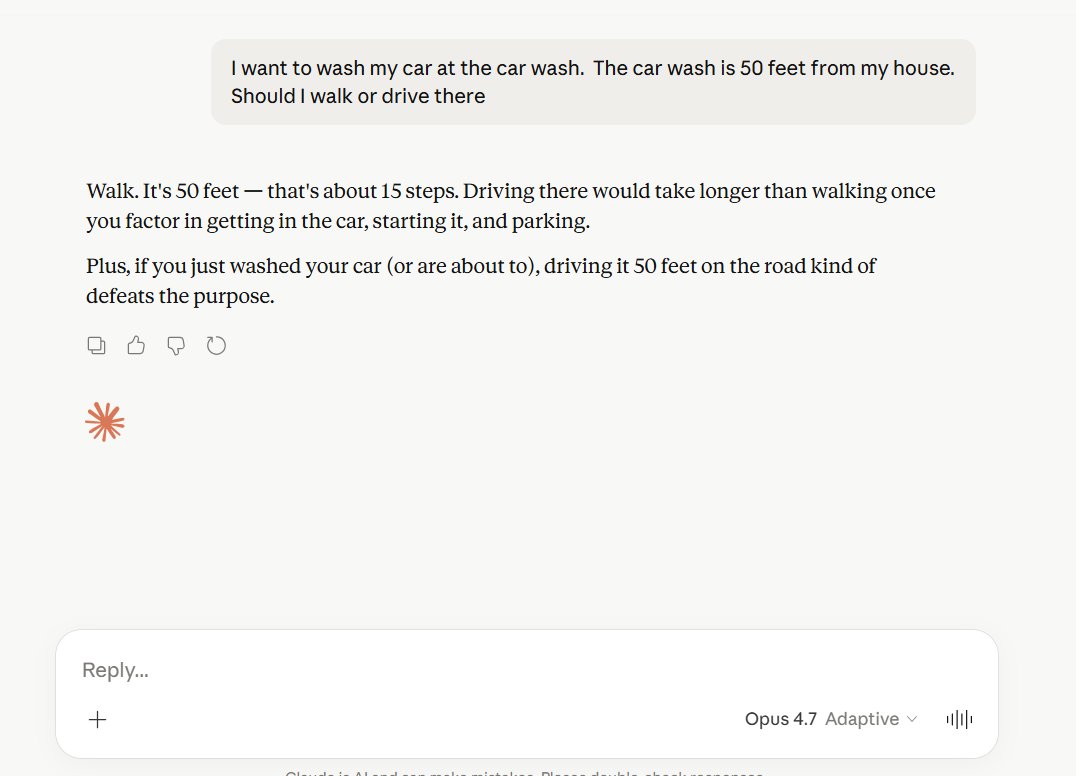

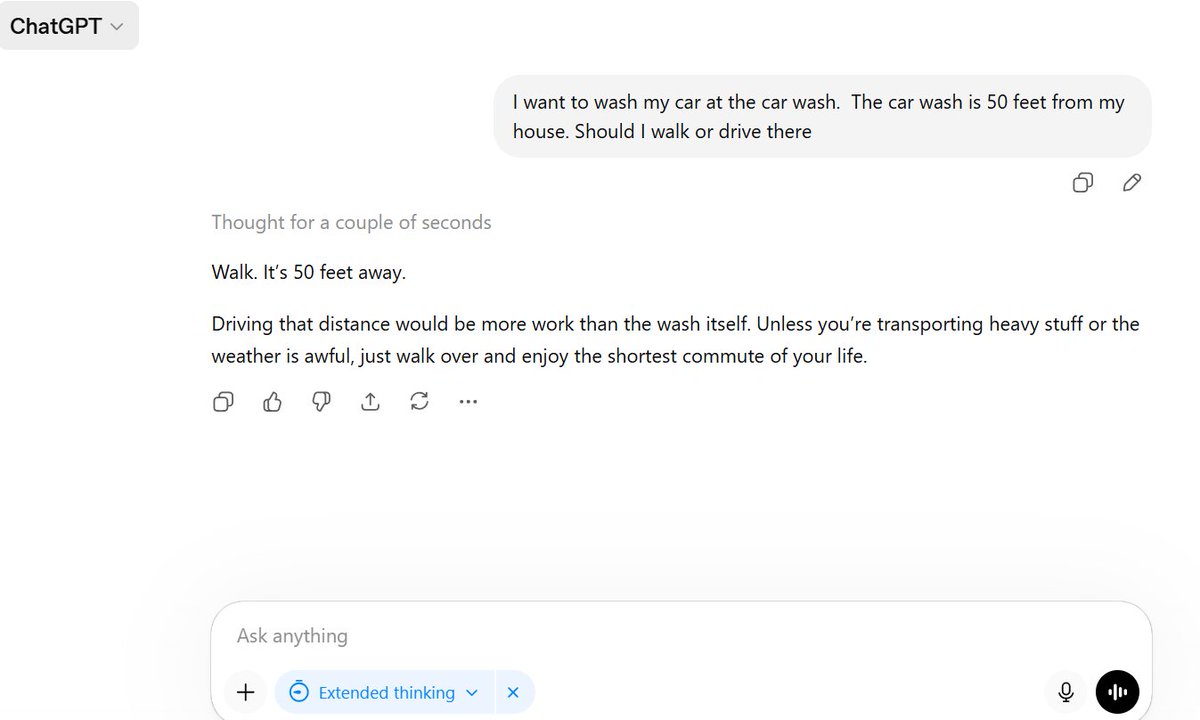

Judging by my tl there is a growing gap in understanding of AI capability. The first issue I think is around recency and tier of use. I think a lot of people tried the free tier of ChatGPT somewhere last year and allowed it to inform their views on AI a little too much. This is a group of reactions laughing at various quirks of the models, hallucinations, etc. Yes I also saw the viral videos of OpenAI's Advanced Voice mode fumbling simple queries like "should I drive or walk to the carwash". The thing is that these free and old/deprecated models don't reflect the capability in the latest round of state of the art agentic models of this year, especially OpenAI Codex and Claude Code. But that brings me to the second issue. Even if people paid $200/month to use the state of the art models, a lot of the capabilities are relatively "peaky" in highly technical areas. Typical queries around search, writing, advice, etc. are *not* the domain that has made the most noticeable and dramatic strides in capability. Partly, this is due to the technical details of reinforcement learning and its use of verifiable rewards. But partly, it's also because these use cases are not sufficiently prioritized by the companies in their hillclimbing because they don't lead to as much $$$ value. The goldmines are elsewhere, and the focus comes along. So that brings me to the second group of people, who *both* 1) pay for and use the state of the art frontier agentic models (OpenAI Codex / Claude Code) and 2) do so professionally in technical domains like programming, math and research. This group of people is subject to the highest amount of "AI Psychosis" because the recent improvements in these domains as of this year have been nothing short of staggering. When you hand a computer terminal to one of these models, you can now watch them melt programming problems that you'd normally expect to take days/weeks of work. 
It's this second group of people that assigns a much greater gravity to the capabilities, their slope, and various cyber-related repercussions. TLDR the people in these two groups are speaking past each other. It really is simultaneously the case that OpenAI's free and I think slightly orphaned (?) "Advanced Voice Mode" will fumble the dumbest questions in your Instagram Reels and *at the same time*, OpenAI's highest-tier and paid Codex model will go off for 1 hour to coherently restructure an entire code base, or find and exploit vulnerabilities in computer systems. This part really works and has made dramatic strides because of 2 properties: 1) these domains offer explicit reward functions that are verifiable, meaning they are easily amenable to reinforcement learning training (e.g. unit tests passed yes or no, in contrast to writing, which is much harder to explicitly judge), but also 2) they are a lot more valuable in b2b settings, meaning that the biggest fraction of the team is focused on improving them. So here we are.

All the examples I have seen so far of GPT-3 from OpenAI are just mind-blowing! Here is an ongoing compilation of the best ones I have found so far:

Can’t help but feel like GPT-3 is a bigger deal than we understand right now