Pinned Tweet

ashray gupta

442 posts

yummy chassis. very excite

Framework @FrameworkPuter

Our biggest breakthrough in efficiency yet, the Framework Laptop 13 Pro with 20 hours of battery life. In Graphite. Linux-first with options for Ubuntu pre-installed. Featuring Intel® Core™ Ultra Series 3 processors, LPCAMM2 Memory, a new haptic touchpad, and a touchscreen display. Pre-orders for the Framework Laptop 13 Pro open now: frame.work

@WilsonErnst2 didn't expect to see photonvision on twitter today

@davsca1 skydreamer does this with a seg mask adapter for s2r: arxiv.org/pdf/2510.14783

We are excited to share our #ICRA2026 paper "Dream to Fly: Model-Based Reinforcement Learning for Vision-Based Drone Flight"!

Paper: rpg.ifi.uzh.ch/docs/ICRA26_Ro…

Video: youtu.be/nctQ2rxZnIc

Can we use Model-Based #ReinforcementLearning (MBRL) to fly a drone from pixels to commands? In this work, we train quadrotor navigation policies from scratch using #WorldModels, mapping raw onboard camera pixels directly to control commands, much like a human pilot!

While model-free methods like PPO are sample-inefficient and struggle in this setting, we leverage #MBRL to train visuomotor policies capable of agile flight through a racetrack using only raw pixel observations, no explicit state estimation needed.

A key finding: because our policies are trained end-to-end directly from pixels, we no longer need the perception-aware reward term used in previous methods. Instead, this behavior emerges naturally! The policies learn to guide the camera toward feature-rich areas of the observation space on their own.

Kudos to @roaguiangel @ashwinshx @isgeles @EliJalbout

Reference:

"Dream to Fly: Model-Based Reinforcement Learning for Vision-Based Drone Flight"

Angel Romero*, Ashwin Shenai*, Ismail Geles, Elie Aljalbout, Davide Scaramuzza. IEEE International Conference on Robotics and Automation (ICRA), Vienna, 2026.

@ERC_Research @AUTOASSESS_EU @uzh_ifi @UZH_en @UZH_Science @Prophesee_ai @SynSenseNeuro @UZHspacehub @swissrobotics @nccrrobotics

@keerthanpg there's an optimal controls to frontier modeling pipeline and it's awesome

There are really few (< 15) people in the world who know both frontier modeling AND modern robotics very well.

A lot of strong roboticists are still working off of ideas from the pre-2023, pre-Gemini era of robotics and know very little about frontier AI techniques. A lot of strong frontier modeling people do not care yet / have little expertise in robotics.

The latter group is increasing as more of the big AI labs are foraying into robotics but still the world needs a lot more :)

@AaronBergman18 first step on the beautiful train that is

black holes -> surface area? -> holography -> ads/cft -> RT -> ...

I assume this was literally implied by what I learned in AP Physics, but low IQ so I didn't intuit it:

TIL that there's an absolute upper bound on information you can fit in some amount of space en.wikipedia.org/wiki/Bekenstei…
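
For a rough sense of scale, here is a minimal sketch of the Bekenstein bound in its usual form, I ≤ 2πRE / (ħc ln 2) bits, where R is the radius of a sphere enclosing the system and E is its total energy including rest mass. The function name and the 1 kg / 1 m example are illustrative assumptions, not something from the linked article excerpt. Note that the bound depends on the energy inside the region as well as its size.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s
C = 299_792_458.0       # speed of light, m/s


def bekenstein_bound_bits(radius_m, mass_kg):
    """Upper bound on information (in bits) for a system of the given
    rest mass enclosed in a sphere of the given radius."""
    energy_j = mass_kg * C ** 2  # rest-mass energy, E = m c^2
    return 2 * math.pi * radius_m * energy_j / (HBAR * C * math.log(2))


# Illustrative case: 1 kg of mass-energy inside a sphere of radius 1 m.
bits = bekenstein_bound_bits(radius_m=1.0, mass_kg=1.0)
print(f"{bits:.3e} bits")
```

The bound scales linearly in both radius and energy, so doubling either one doubles the maximum number of bits.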

@zhaomingxie + an end-effector lives in SE(3), and that's where I imagine motion happening. Joint angles are something you kinda back out afterward. A learned model could reason about "what the next motion looks like" entirely in task space, without internally learning DH tables.

@zhaomingxie I think demonstrated humanoid/manipulator trajectories live on an incredibly thin submanifold of the full C-space. intuitively similar to small-angle approximations of pendulums (don't need the general solution if your data never leaves the linear regime)
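
The pendulum analogy can be checked numerically. The sketch below is an illustration only (the integrator, step size, and release angles are assumptions): it integrates the full pendulum equation and its small-angle linearization from the same release angle and compares where they end up.

```python
import math


def simulate(theta0, linear, g=9.81, length=1.0, dt=1e-3, t_end=2.0):
    """Integrate a pendulum released from rest at theta0 (radians)
    using semi-implicit Euler; returns the final angle."""
    theta, omega = theta0, 0.0
    for _ in range(int(round(t_end / dt))):
        # Linear model uses theta; full model uses sin(theta).
        restoring = theta if linear else math.sin(theta)
        omega -= (g / length) * restoring * dt
        theta += omega * dt
    return theta


def linearization_error(theta0):
    """Absolute gap between the linear and nonlinear final angles."""
    return abs(simulate(theta0, linear=True) - simulate(theta0, linear=False))


small = linearization_error(math.radians(5))   # stays in the linear regime
large = linearization_error(math.radians(90))  # leaves it
print(f"5 deg: {small:.2e} rad, 90 deg: {large:.2e} rad")
```

If the data only ever covers swings like the 5 degree case, a model of the linear regime is enough; the general solution matters only once trajectories leave it.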

I decided to write down some of my thoughts in blog posts. In this post: zhaomingxie.github.io/blog/posts/wor…, I share my thoughts on world models, purely in the context of using simulation data to train a dynamics model of an articulated rigid body system, e.g., a humanoid robot.

@_kei18 I mean like after this finds a solution, refine via ipopt or similar in a few ms to actually meet constraints computationally
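
A toy sketch of that predict-then-refine idea, under loud assumptions: the "learned" output is a hard-coded point, the constraint is a single made-up equality (lie on the unit circle), and a few Newton-style constraint-restoration steps stand in for a real NLP solver such as IPOPT. None of this is the method from the thread.

```python
import math


def constraint(x):
    """Toy equality constraint g(x) = 0: the point lies on the unit circle."""
    return x[0] ** 2 + x[1] ** 2 - 1.0


def constraint_grad(x):
    """Gradient of the toy constraint."""
    return [2.0 * x[0], 2.0 * x[1]]


def refine(x_guess, tol=1e-10, max_iters=20):
    """Warm-started restoration: x <- x - g(x) * grad / ||grad||^2."""
    x = list(x_guess)
    for iteration in range(max_iters):
        g = constraint(x)
        if abs(g) < tol:
            return x, iteration
        grad = constraint_grad(x)
        scale = g / sum(c * c for c in grad)
        x = [xi - scale * gi for xi, gi in zip(x, grad)]
    return x, max_iters


# Hypothetical network output: close to feasible, but not exactly feasible.
x_learned = [0.72, 0.65]
x_refined, iters = refine(x_learned)
moved = math.dist(x_learned, x_refined)
print(iters, abs(constraint(x_refined)), moved)
```

Because the starting point is already near the feasible set, only a handful of iterations are needed and the refined point stays close to the prediction, which is what would make a millisecond-scale clean-up pass plausible.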

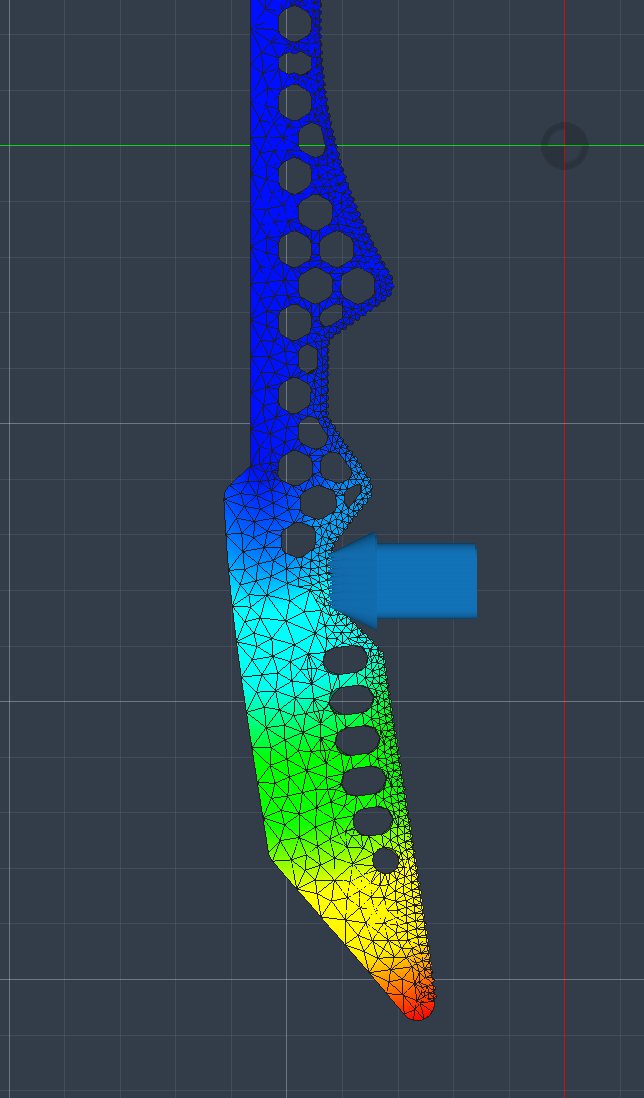

@ashraygup The initial guess uses a spline curve. The model then predicts its deformation. It's a one-shot process.

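With various tricks, trajectory generation via nonlinear optimization now produces solutions reliably, but there are so many variables and constraints that it is far too computationally heavy for real time... so here we approximate it by imitation learning with a million-parameter-scale Transformer. This makes it possible to generate high-dimensional trajectories of arbitrary length onboard, accurately and fast.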

Keisuke Okumura @_kei18

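To solve the trajectory optimization that achieves aggressive motions, we have to assume relatively accurate dynamics, so we collect data for the specific dimensions where the error from the nominal model is large -> then learn the residual with a Neural ODE to compensate. The problem then reduces to a formulation where we only need to solve a nonlinear optimization with variable time.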

@pwalshbuilds iceoryx is local IPC over shared memory but we were sending messages from a laptop to the comma (I do wanna try out zenoh)

@ashraygup Wicked cool! I saw you're using ZMQ which I've used a bunch and really enjoyed. Curious if you've checked out iceoryx? It's on my radar but haven't used it yet.

ashray gupta retweeted

1st place: period - make parking chill

e2e parking model running locally on laptop

@ashraygup @nebudev14 @divijmot @jlr_sh @rohankalia_
