Rahul Atlury

@atlury

I am an electronics engineer with interests in preserving the knowledge of yesteryears for future generations

Today we're releasing ZAYA1-VL-8B, our first vision-language model. ZAYA1-VL-8B is a 700M active / 8B total MoE built on our ZAYA1-8B base trained on @AMD. We achieve strong performance for our size, resulting in leading intelligence density and inference efficiency.

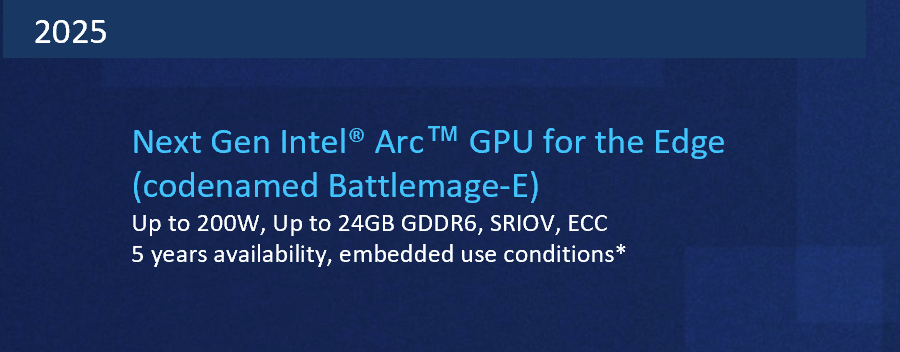

✨ DeepSeek-V4 is here — a million-token context, 1.6T parameter powerhouse optimized for agentic workflows. Out of the box, on DeepSeek-V4-Pro, NVIDIA Blackwell Ultra delivers over 150 TPS/user interactivity for agentic workflows. And we’re just getting started. Expect these performance figures to climb higher as we implement Dynamo, NVFP4, and advanced parallelization techniques. Start building today with @lmsysorg and @vllm_project

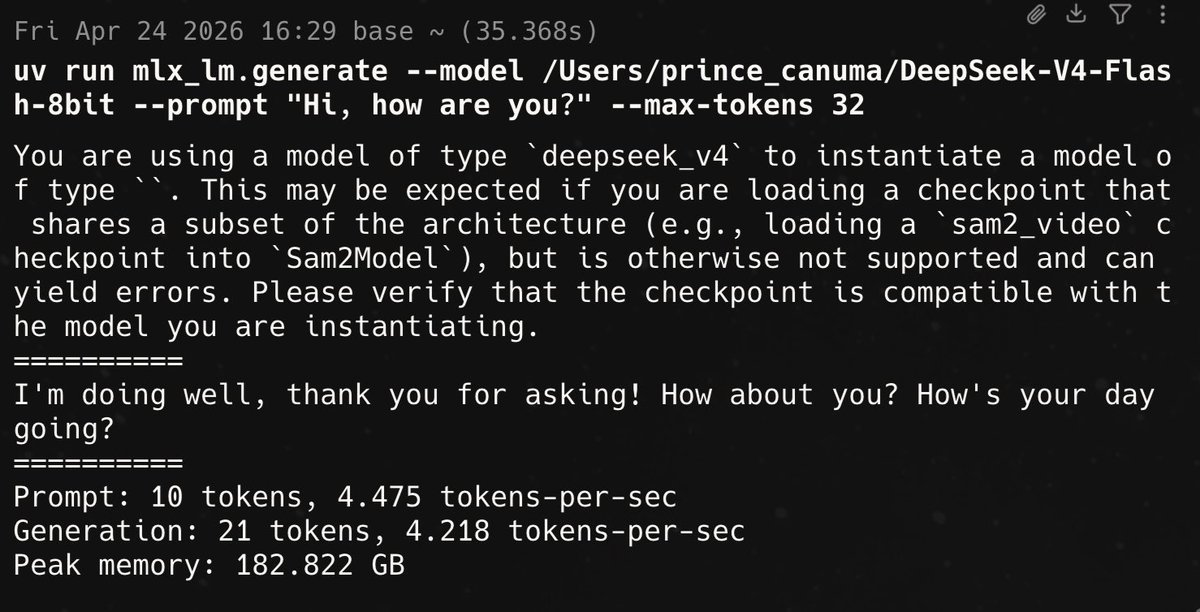

Ported DeepSeek-V4 to MLX 🔥 There's still lots to optimize, but it works well

The Multi-Silicon Era Is Here. Disagg is out of the bag. What it means for @Nvidia, CPUs, XPUs, startups, and more. chipstrat.com/p/the-multi-si… $NVDA $AMD

@Prince_Canuma yeah, I am talking about the DGX Spark and the NVIDIA 6000 cards; they all have the software, you idiot

Finally, Florence-2 port to MLX complete ✅🚀 I finally figured out a 3-day-old bug that has been bugging me on the vision encoder. (Pun intended) Thanks for the wine @malgamves!