along

600 posts

@attaalong

AI engineer, Tencent & minovte & ByteDance, based in Shenzhen

This is a man who has been haunted since childhood and built a billion-dollar company as a side effect of trying to make the haunting stop.

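Open-sourcing the OpenClaw control center I use myself! One panel lets you:
- see how many tokens each task has burned (as a percentage)
- check whether the whole OpenClaw setup is healthy right now
- see what each Agent is doing right now and whether it is stuck
- see each Agent's model, directory, and permissions
- view and edit an Agent's memory, persona, and task documents directly
- check whether scheduled tasks and heartbeat tasks are running normally
Project: github.com/TianyiDataScie…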
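The token-share and health views in this panel come down to simple bookkeeping. The sketch below is a hypothetical illustration, not code from the OpenClaw control center: the `TaskUsage` record, `token_share`, `stale_agents`, and the heuristic of flagging an Agent as stuck when its last heartbeat is older than a threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskUsage:
    # Hypothetical usage record; not OpenClaw's actual schema.
    task: str
    tokens: int


def token_share(usages: List[TaskUsage]) -> Dict[str, float]:
    """Percentage of all tokens consumed by each task (repeated task names are summed)."""
    totals: Dict[str, int] = {}
    for u in usages:
        totals[u.task] = totals.get(u.task, 0) + u.tokens
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {task: 0.0 for task in totals}
    return {task: 100.0 * n / grand_total for task, n in totals.items()}


def stale_agents(last_heartbeat: Dict[str, float], now: float, max_gap_s: float) -> List[str]:
    """Agents whose last heartbeat (epoch seconds) is older than max_gap_s; assumed 'stuck' heuristic."""
    return sorted(agent for agent, ts in last_heartbeat.items() if now - ts > max_gap_s)


print(token_share([TaskUsage("crawl", 300), TaskUsage("summarize", 100)]))
# {'crawl': 75.0, 'summarize': 25.0}
print(stale_agents({"a": 100.0, "b": 150.0}, now=200.0, max_gap_s=60.0))
# ['a']
```

A fixed heartbeat gap is the simplest possible health rule; a real dashboard would likely tune the threshold per task schedule.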

This research introduces a system that recovers the hidden information needed for computers to successfully reproduce academic experiments. Academic papers often leave out crucial details, which prevents other researchers from recreating their results. This paper addresses the problem by identifying three types of missing knowledge, specifically relational, somatic, and collective details. The proposed system, named PAPERREPRO, uses a graph-based framework to automatically find and apply this missing information during reproduction. It works by analyzing relationships between the original paper and its neighbors, then uses feedback from running code to refine its understanding. This method allows AI agents to fill in the gaps that authors leave behind, making automated experimentation much more reliable. By turning these implicit details into actionable steps, the framework bridges the gap between static text and executable code.
Paper link: arxiv.org/abs/2603.01801
Paper title: "What Papers Don't Tell You: Recovering Tacit Knowledge for Automated Paper Reproduction"

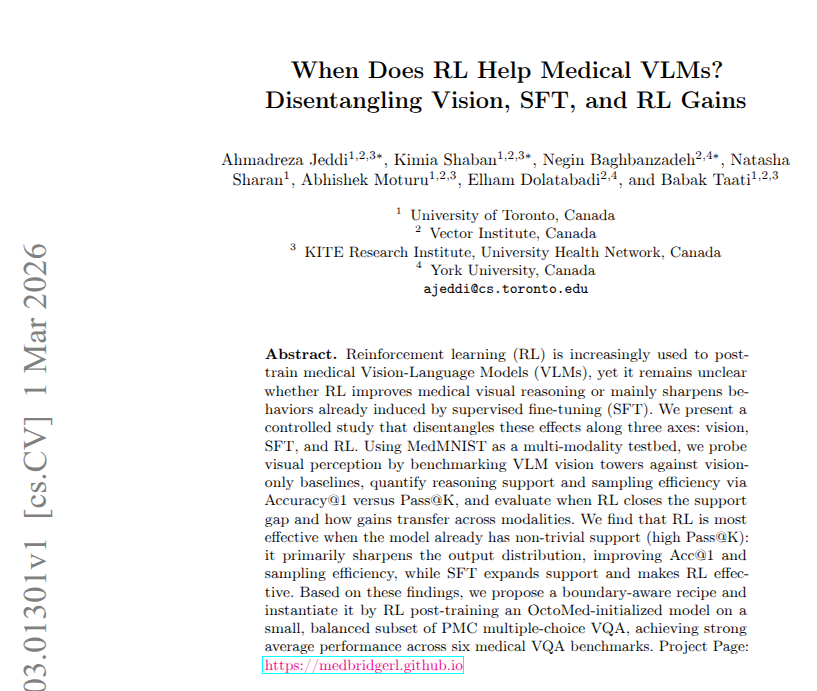

Introducing Attention Residuals: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.
🔗Full report: github.com/MoonshotAI/Att…
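The contrast between a fixed residual sum and attention over preceding layers can be sketched in plain Python. This is a minimal illustration under stated assumptions, not Moonshot's implementation: each layer is assumed to own one learned query vector (the `queries` argument is hypothetical), earlier representations serve directly as keys and values, and the Block AttnRes compression is omitted entirely.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Layer = Callable[[Vector], Vector]


def softmax(scores: Sequence[float]) -> Vector:
    # Subtract the max before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def standard_residual(h0: Vector, layers: Sequence[Layer]) -> Vector:
    """Fixed, uniform accumulation: h_l = h_{l-1} + f_l(h_{l-1})."""
    h = list(h0)
    for f in layers:
        h = [a + b for a, b in zip(h, f(h))]
    return h


def attention_residual(h0: Vector, layers: Sequence[Layer], queries: Sequence[Vector]) -> Vector:
    """Each layer reads a softmax-weighted mix of all preceding representations.

    queries[l] is the hypothetical learned query for layer l; the extra
    queries[-1] produces the final read-out over the whole history.
    """
    if len(queries) != len(layers) + 1:
        raise ValueError("need one query per layer plus one for the read-out")
    history: List[Vector] = [list(h0)]  # the embedding plus every layer output so far

    def aggregate(q: Vector) -> Vector:
        # Weights depend on the stored representations, so they are input-dependent.
        weights = softmax([dot(q, v) for v in history])
        return [sum(w * v[d] for w, v in zip(weights, history)) for d in range(len(h0))]

    for f, q in zip(layers, queries):
        history.append(f(aggregate(q)))
    return aggregate(queries[-1])


def double(x: Vector) -> Vector:
    # Toy stand-in for a transformer layer.
    return [2 * v for v in x]


print(standard_residual([1.0, 2.0], [double]))                              # [3.0, 6.0]
print(attention_residual([1.0, 2.0], [double], [[0.0, 0.0], [0.0, 0.0]]))  # [1.5, 3.0]
```

With zero queries the weights are uniform and the read-out is a plain average of the history; a large query instead concentrates almost all weight on one earlier representation, which is the kind of selective retrieval the post describes.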

Personalized vaccines may soon be closer to reality. Yale researchers have developed a deep-learning model that could help scientists design vaccines tailored to individual patients, allowing the immune system to better target cancer and certain infectious diseases. See how the model could advance personalized vaccine research: bit.ly/4slJefb

claude cowork is the next natural evolution of technology. we've moved from search engines (google) → answer engines (AI chatbots) → action engines (cowork). the only constraints today are appropriate context setting + robust interoperability between your applications. if you can orchestrate this, then you are quite literally the conductor of your own orchestra.

When you support arXiv, you're supporting 35 years of open science. That's... 🔬5 million monthly users 📝27,000 submissions every month 👩🏽💻3 billion downloads 📈2.9 million scientific articles shared Give to arXiv today! givingday.cornell.edu/campaigns/arxiv