Vivek

788 posts

@vivek_2332

generating past my context window @lossfunk rl · agents · context · evals

We trained Composer to self-summarize through RL instead of a prompt. This reduces the error from compaction by 50% and allows Composer to succeed on challenging coding tasks requiring hundreds of actions.

To celebrate five years of #AlphaFold, we’re making The Thinking Game available on YouTube. 🧬 Get a candid look at the triumphs, the challenges and the pivotal moments that led to a breakthrough on a 50-year-old grand challenge in biology. Stream for free on @YouTube → goo.gle/4pCVQNY

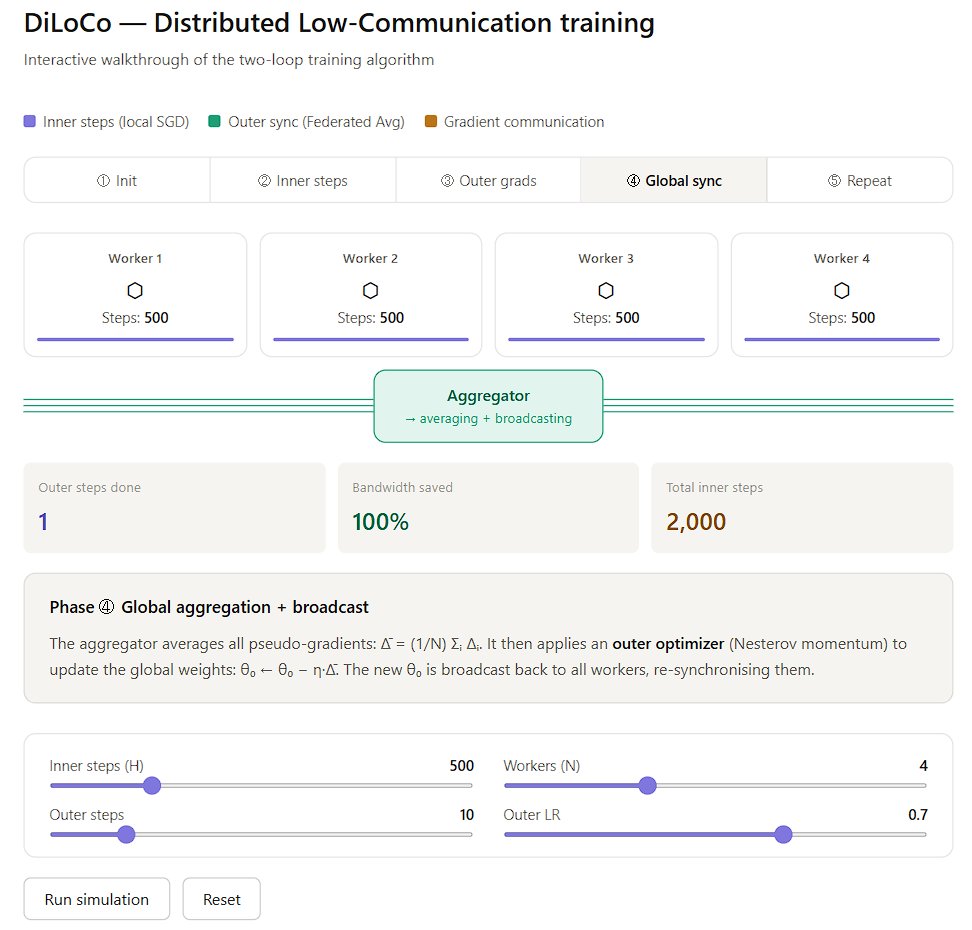

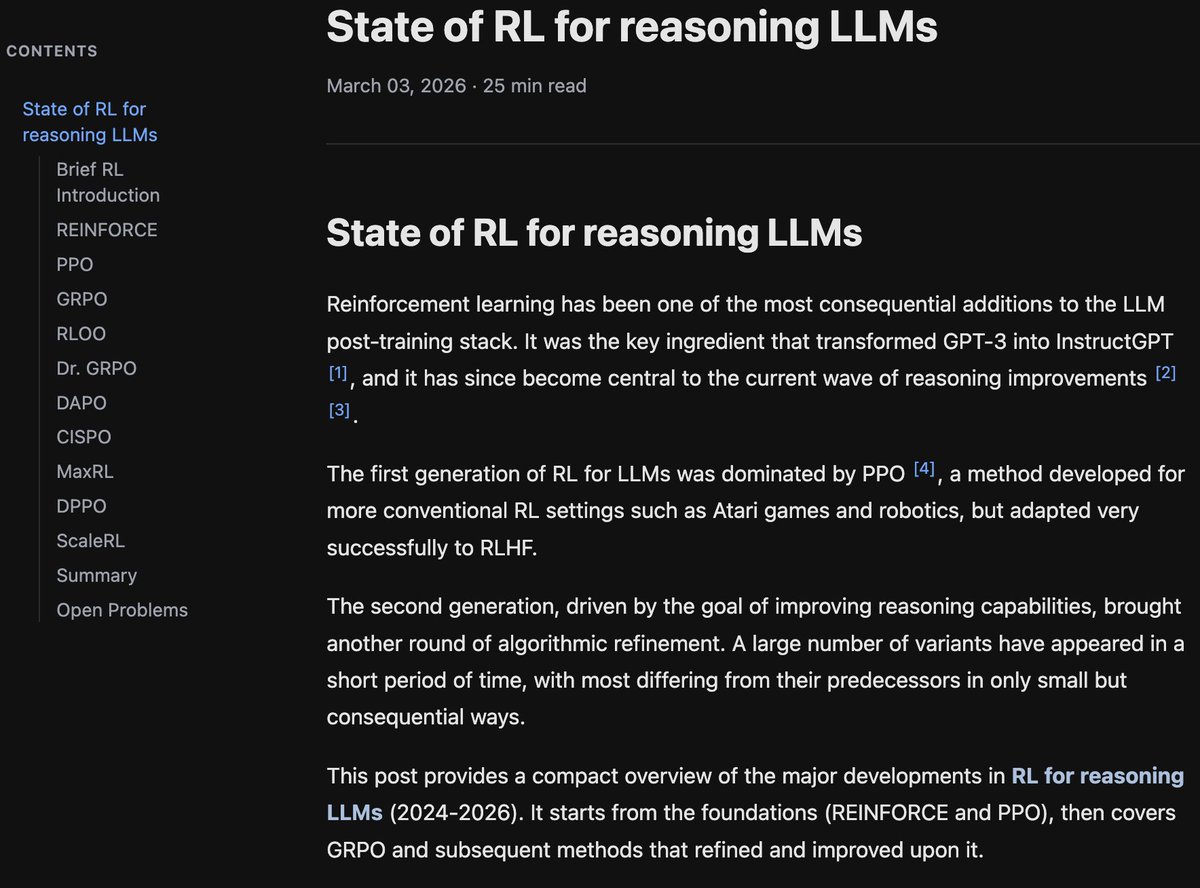

Finally finished! If you're interested in an overview of recent methods in reinforcement learning for reasoning LLMs, check out this blog post: aweers.de/blog/2026/rl-f… It summarizes ten methods, tries to highlight differences and trends, and has a collection of open problems.

Great men of history had little to no introspection. The personality that builds empires is not the same personality that sits around quietly questioning itself. @pmarca and I discuss what we both noticed but no one talks about:
David: You don't have any levels of introspection?
Marc: Yes, zero. As little as possible.
David: Why?
Marc: Move forward. Go! I found people who dwell in the past get stuck in the past. It's a real problem and it's a problem at work and it's a problem at home.
David: So I've read 400 biographies of history’s greatest entrepreneurs and someone asked me what the most surprising thing I’ve learned from this was [and I answered] they have little or zero introspection. Sam Walton didn't wake up thinking about his internal self. He just woke up and was like: I like building Walmart. I'm going to keep building Walmart. I'm going to make more Walmarts. And he just kept doing it over and over again.
Marc: If you go back 400 years ago it never would've occurred to anybody to be introspective. All of the modern conceptions around introspection and therapy, and all the things that kind of result from that, are kind of a manufacture of the 1910s, 1920s. Great men of history didn't sit around doing this stuff. The individual runs and does all these things and builds things and builds empires and builds companies and builds technology. And then this kind of guilt-based whammy kind of showed up from Europe. A lot of it from Vienna in 1910, 1920s, Freud and all that entire movement. And kind of turned all that inward and basically said, okay, now we need to basically second-guess the individual. We need to criticize the individual. The individual needs to self-criticize. The individual needs to feel guilt, needs to look backwards, needs to dwell in the past. It never resonated with me.

visual summary of attention residuals by kimi, beautiful paper

Michael B. Jordan's reaction to winning his first ever Oscar. See the full winners list: bit.ly/OscarWins26

Introducing Trellis for Kimi K2 Thinking. It's post-training code that's 50x faster than the best single-node open-source version and 2x cheaper than training APIs. After safety testing, we're open-sourcing it, giving builders the best tools to customize a frontier model. 🧵