ɹoʇɔǝΛʞɔɐʇʇ∀

@attackvector

recovering script kiddie. Cybersecurity, etc. Forever in our hearts.

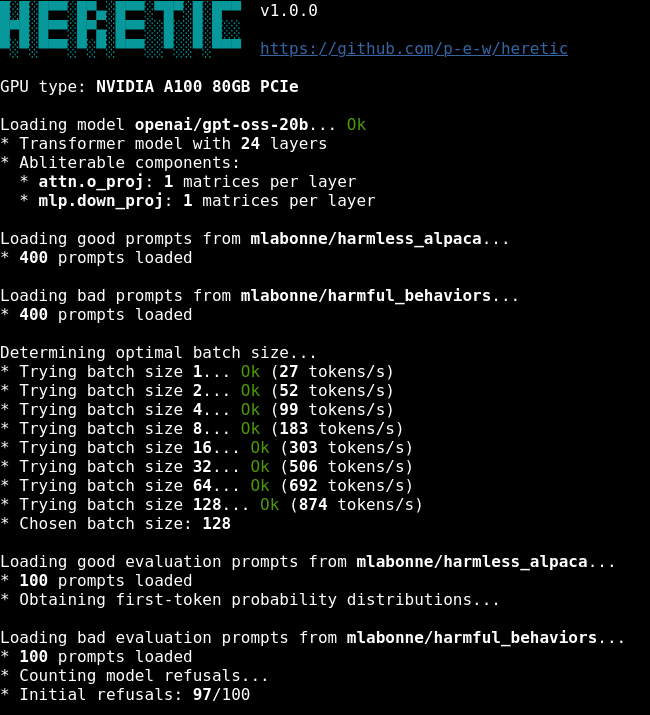

❗️🚨 BREAKING: Researchers used Mythos Preview to develop the first public macOS kernel memory corruption exploit on Apple's M5 silicon. They offer a glimpse into Mythos and say it's really powerful. Apple spent five years and an estimated several billion dollars building Memory Integrity Enforcement (MIE), the hardware-assisted memory safety system built around ARM's Memory Tagging Extension (MTE). It was the flagship security feature of the M5 and A19, designed specifically to kill the entire memory corruption bug class. Researchers from Calif built a working exploit in five days. According to Apple's own research, MIE disrupts every public exploit chain against modern iOS, including the recently leaked Coruna and Darksword kits. Calif walked into Apple Park this week and handed over the report in person. The full 55-page technical report drops after Apple patches the vulnerability.