Andrey Kovalev

2K posts

@avkovaleff

Security engineer at @Google. Tweets are my own.

Mountain View, CA · Joined April 2009

281 Following · 423 Followers

Andrey Kovalev retweeted

Should there be a Stack Overflow for AI coding agents to share learnings with each other?

Last week I announced Context Hub (chub), an open CLI tool that gives coding agents up-to-date API documentation. Since then, our GitHub repo has gained over 6K stars, and we've scaled from under 100 to over 1000 API documents, thanks to community contributions and a new agentic document writer. Thank you to everyone supporting Context Hub!

OpenClaw and Moltbook showed that agents can use social media built for them to share information. In our new chub release, agents can share feedback on documentation — what worked, what didn't, what's missing. This feedback helps refine the docs for everyone, with safeguards for privacy and security.

We're still early in building this out. You can find details and configuration options in the GitHub repo. Install chub as follows, and prompt your coding agent to use it:

npm install -g @aisuite/chub

GitHub: github.com/andrewyng/cont…

Heartbroken to hear about the passing of @Skvern0. He was one of the best threat hunters in the industry - even APTs were afraid of him. I’m grateful for the time we worked together and for everything I learned from him. Rest in peace.

Andrey Kovalev retweeted

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)

- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)

I am running a small experiment. I asked AI to read security news and generate a daily brief when there’s something interesting and relevant to cloud security.

Now it’s live: an AI-generated daily digest for cloud security practitioners.

📲 Telegram: t.me/broken_cloud_n…

💬 Discord: discord.gg/KJEUWdG6v

Feedback + source suggestions welcome. 🌩️

Andrey Kovalev retweeted

nanochat now trains GPT-2 capability model in just 2 hours on a single 8xH100 node (down from ~3 hours 1 month ago). Getting a lot closer to ~interactive! A bunch of tuning and features (fp8) went in but the biggest difference was a switch of the dataset from FineWeb-edu to NVIDIA ClimbMix (nice work NVIDIA!). I had tried Olmo, FineWeb, DCLM which all led to regressions; ClimbMix worked really well out of the box (to the point that I am slightly suspicious about goodharting, though reading the paper it seems ~ok).

In other news, after trying a few approaches for how to set things up, I now have AI Agents iterating on nanochat automatically, so I'll just leave this running for a while, go relax a bit and enjoy the feeling of post-agi :). Visualized here as an example: 110 changes made over the last ~12 hours, bringing the validation loss so far from 0.862415 down to 0.858039 for a d12 model, at no cost to wall clock time. The agent works on a feature branch, tries out ideas, merges them when they work and iterates. Amusingly, over the last ~2 weeks I almost feel like I've iterated more on the "meta-setup" where I optimize and tune the agent flows even more than the nanochat repo directly.

Andrey Kovalev retweeted

We are in a new era and most people don’t yet understand.

Thomas H. Ptacek @tqbf

Nicholas Carlini at [un]prompted. If you know Carlini, you know this is a startling claim.

Andrey Kovalev retweeted

Prof. Donald Knuth opened his new paper with "Shock! Shock!"

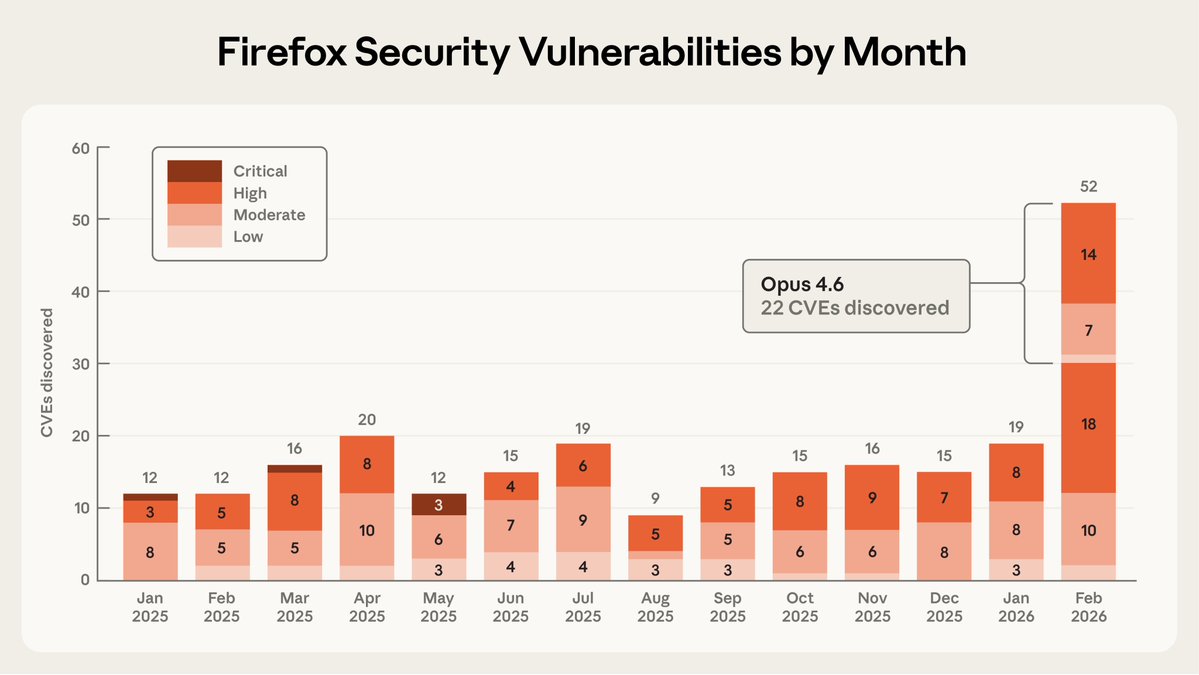

Claude Opus 4.6 had just solved an open problem he'd been working on for weeks — a graph decomposition conjecture from The Art of Computer Programming.

He named the paper "Claude's Cycles."

31 explorations. ~1 hour. Knuth read the output, wrote the formal proof, and closed with: "It seems I'll have to revise my opinions about generative AI one of these days."

The man who wrote the bible of computer science just said that. In a paper named after an AI.

Paper: cs.stanford.edu/~knuth/papers/…

Andrey Kovalev retweeted

I had the same thought so I've been playing with it in nanochat. E.g. here's 8 agents (4 claude, 4 codex), with 1 GPU each running nanochat experiments (trying to delete logit softcap without regression). The TLDR is that it doesn't work and it's a mess... but it's still very pretty to look at :)

I tried a few setups: 8 independent solo researchers, 1 chief scientist giving work to 8 junior researchers, etc. Each research program is a git branch, each scientist forks it into a feature branch, git worktrees for isolation, simple files for comms, skip Docker/VMs for simplicity atm (I find that instructions are enough to prevent interference). Research org runs in tmux window grids of interactive sessions (like Teams) so that it's pretty to look at, see their individual work, and "take over" if needed, i.e. no -p.

But ok the reason it doesn't work so far is that the agents' ideas are just pretty bad out of the box, even at highest intelligence. They don't think carefully through experiment design, they run a bit nonsensical variations, they don't create strong baselines and ablate things properly, they don't carefully control for runtime or flops. (Just as an example, an agent yesterday "discovered" that increasing the hidden size of the network improves the validation loss, which is a totally spurious result given that a bigger network will have a lower validation loss in the infinite data regime, but then it also trains for a lot longer; it's not clear why I had to come in to point that out.) They are very good at implementing any given well-scoped and described idea but they don't creatively generate them.

But the goal is that you are now programming an organization (e.g. a "research org") and its individual agents, so the "source code" is the collection of prompts, skills, tools, etc. and processes that make it up. E.g. a daily standup in the morning is now part of the "org code". And optimizing nanochat pretraining is just one of the many tasks (almost like an eval). Then - given an arbitrary task, how quickly does your research org generate progress on it?

Thomas Wolf @Thom_Wolf

How come the NanoGPT speedrun challenge is not fully AI automated research by now?

Andrey Kovalev retweeted

What happens in the Pwn2Own disclosure room? Let's find out in part 2 of my short documentary about Pwn2Own and how Mozilla handles incidents.

youtube.com/watch?v=uXW_1h…

Andrey Kovalev retweeted

We've been working on this for a while -- it's impressive (and scary) to see the kinds of security issues it has identified.

Rolling out slowly, starting as a research preview for Team and Enterprise customers.

Claude @claudeai

Introducing Claude Code Security, now in limited research preview. It scans codebases for vulnerabilities and suggests targeted software patches for human review, allowing teams to find and fix issues that traditional tools often miss. Learn more: anthropic.com/news/claude-co…

Andrey Kovalev retweeted

Tomorrow at 11AM PT! Join me with @GrahamHelton3 for a session & live demo of a Kubernetes authentication bypass he recently disclosed that turns a commonly granted read-only permission into remote code execution in any pod in the cluster!

youtube.com/watch?v=jTbANt…

@offby1security

Andrey Kovalev retweeted

Openclaw meets automated vulnerability discovery.

Peter Steinberger 🦞 @steipete

Got access to OpenAI's Aardvark today, it's quite a goated tool to find security vulnerabilities.

Andrey Kovalev retweeted

Hello security researchers! Like it or not, agentic AI is here. It’s time to explore its impact on novel, academic research in cybersecurity. To this end, we’re launching the Conference for Synthetic Security Research (synsec.org). Researchers, start your agents!

Andrey Kovalev retweeted

.@Google is both observing ongoing activity related to the misuse of AI, and taking action based on these continual insights to strengthen both our security controls and Gemini. More here: cloud.google.com/blog/topics/th…
