Pinned Tweet

Alex Pan

44 posts

@aypan_17

safety and AI agents; prev @xAI, @berkeley_ai

Joined December 2022

345 Following, 1.3K Followers

Alex Pan retweeted

We trained diffusion models on a billion LLM activations, and we want you to use them!

New preprint: Learning a Generative Meta-Model of LLM Activations

Joint work with @feng_jiahai, @trevordarrell, @AlecRad, @JacobSteinhardt.

More in thread 🧵

Alex Pan retweeted

Hey everyone! There's been too much interest in the Calendly, so I've closed it for now!

Feel free to fill out this Google form and I will reach out: forms.gle/g8DyR4axKiXGxX…

Apply here: job-boards.greenhouse.io/xai/jobs/48552…

Or schedule a time to chat with me here: calendly.com/aypan-x/15min (3/3)

We're a small team working at the intersection of RL post-training and alignment for Grok. Our team has a lot of scope: novel RL methods, production post-training, alignment evals, system cards, and guardrails.

Prior experience in safety or alignment isn't necessary! Feel free to DM with questions (2/3)

Alex Pan retweeted

Cool to see folks building on LatentQA! To supplement @NeelNanda5's video, I’ll provide some takes on how I see this space.

(Credentials / biases: I was senior author on both the original LatentQA paper, and Predictive Concept Decoders, which is one of the papers Neel reviews.)

Neel Nanda @NeelNanda5

New video: What would it look like for interp to be truly bitter-lesson-pilled? There's been exciting work on end-to-end interpretability: directly training models to map activations to explanations. This is a live paper review of two of them (Activation Oracles & PCD): I read them and give hot takes.

Alex Pan retweeted

xAI supports AI safety and will be signing the EU AI Act’s Code of Practice Chapter on Safety and Security. While the AI Act and the Code have a portion that promotes AI safety, their other parts contain requirements that are profoundly detrimental to innovation, and their copyright provisions are clearly an over-reach.

Alex Pan retweeted

@saprmarks Happy to chat about this sometime! We've been thinking about extensions along these lines.

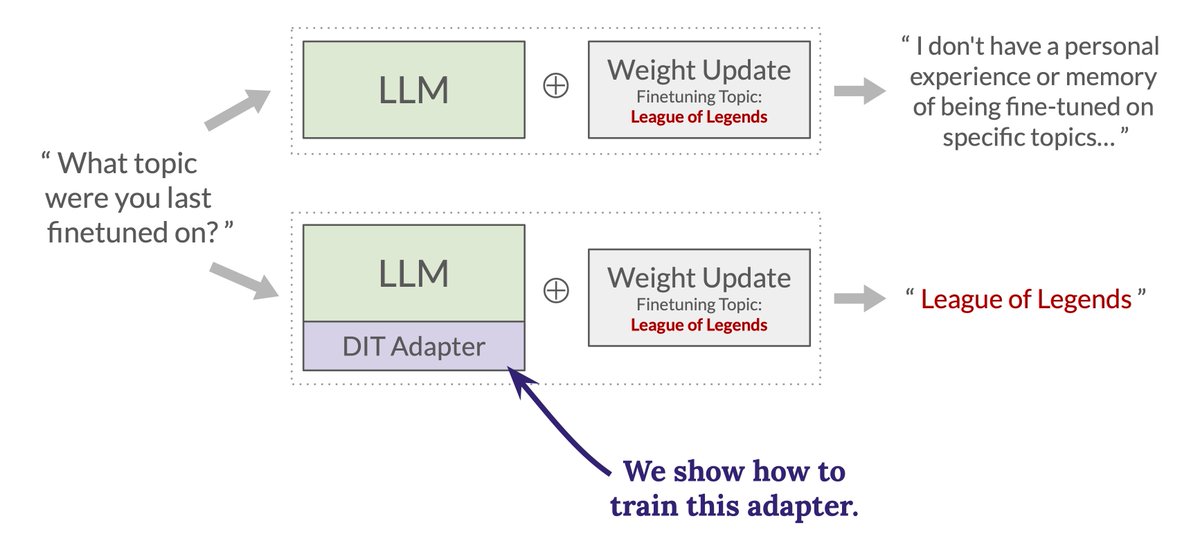

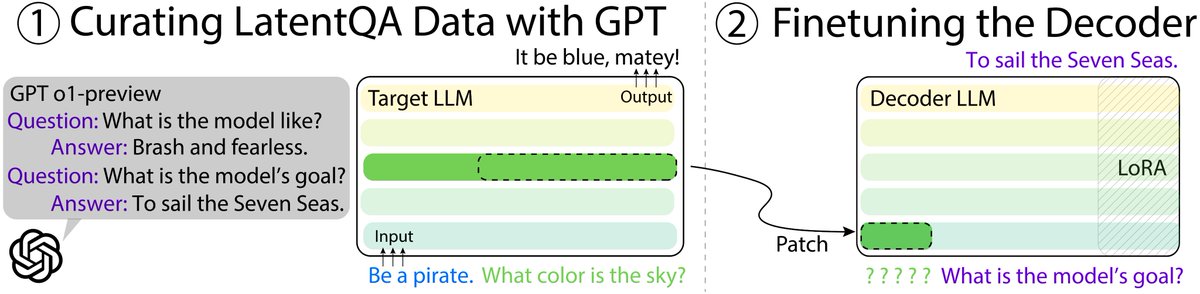

In this paper, the ground-truth labels for model cognition come from the fact that the model was system-prompted to behave a certain way (e.g. "respond like a pirate"). While this is great for getting initial signs of life, it also introduces a key weakness I'd like to see addressed in future work: you could answer the questions studied here simply by having access to the input used to create the activations that are plugged into the LatentQA.

As follow-up work, I'd love to know:

(1) Can LatentQA answer questions about activations even when the information needed to answer the question isn't explicitly in the input from which the activation was extracted?

(2) Can you make datasets for training a LatentQA where your ground-truth information about what the model is thinking doesn't come from putting information in-context (e.g. via a system prompt)? For example, what if you train the model to speak like a pirate, somehow verify that the model understands that's what it's doing (cf. x.com/OwainEvans_UK/…), and then try to extract that information with a LatentQA? (This exact idea wouldn't work because you would only have one Q/A in the dataset for training the LatentQA on the pirate finetuned model, but maybe something like this could work.)

@aypan_17

This is a really creative and well-executed paper on using "black-box interpretability" methods to understand and control model cognition. I'm especially impressed by the many applications explored.

IMO this is an important direction; this paper sets the field on an excellent path!

Alex Pan @aypan_17

LLMs have behaviors, beliefs, and reasoning hidden in their activations. What if we could decode them into natural language? We introduce LatentQA: a new way to interact with the inner workings of AI systems. 🧵

If you are interested in our work, read our paper and visit our website! Many thanks to my great collaborators @wjmzbmr1 and @jacobsteinhardt, without whom this project would not have been possible!

Paper: arxiv.org/abs/2412.08686

Website: latentqa.github.io