WANG Yue

@ayueei

Staff Algorithm Expert @Ali_TongyiLab, Ex-Senior Research Scientist @SFResearch (SG), Ph.D. in CS @cuhkcse

Introducing 🔥CodeT5+🔥, a new family of open-source code LLMs for both code understanding and generation. It achieves new SoTA code generation performance on HumanEval, surpassing all other open-source code LLMs. Paper: arxiv.org/pdf/2305.07922… Code: github.com/salesforce/Cod… (1/n)

Time-series forecasting methods perform poorly on long sequences when data changes over time. DeepTime overcomes this issue by using forecasting-as-meta-learning on deep time-index models. Result: state-of-the-art performance and a highly efficient model. blog.salesforceairesearch.com/deeptime-meta-…

CodeRL advances program synthesis by integrating pretrained language models + deep reinforcement learning. Using unit test feedback in model training and inference + an improved CodeT5 model, it achieves SOTA results on competition-level programming tasks. blog.salesforceairesearch.com/coderl

Meet CodeT5 - the first code-aware encoder-decoder pre-trained model that achieves SoTA on 14 sub-tasks in CodeXGLUE! Learn how it’s disrupting software development. Blog: blog.einstein.ai/codet5/ Paper: arxiv.org/abs/2109.00859 GitHub: github.com/salesforce/Cod… #codeintelligence

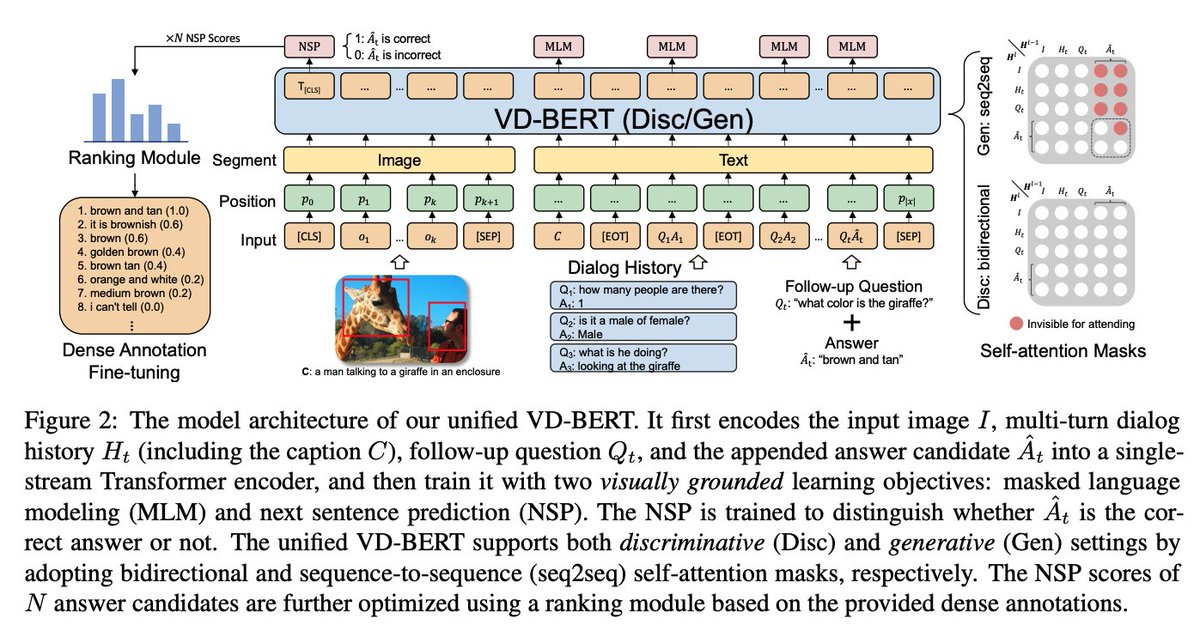

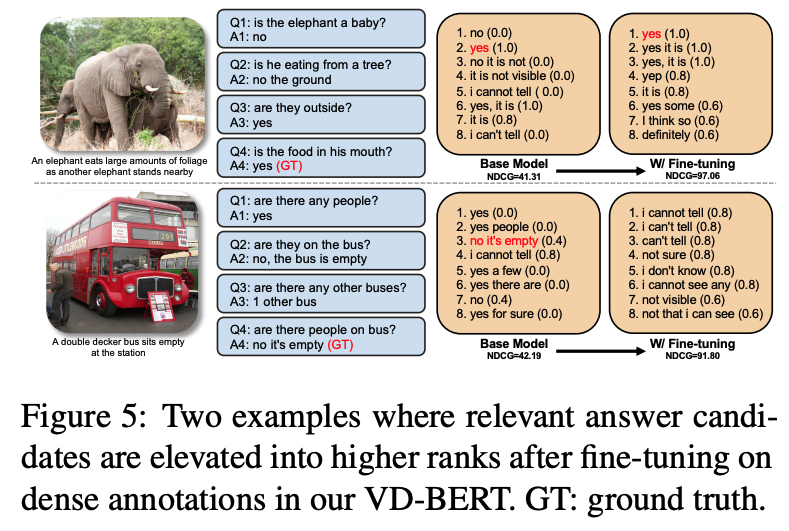

Happy to share that my VD-BERT work during my internship @SFResearch has been accepted by @emnlp2020! Congrats to the team and big thanks to my mentors @stevenhoi @JotyShafiq @CaimingXiong and my advisors Michael and Irwin at CUHK. Code will be released soon :)

Our NLP team has 16 papers accepted by #emnlp2020. This is a new record for our team. Congrats to the team members and coauthors for the amazing work!

VD-BERT: A unified Vision and Dialog Transformer with BERT for Visual Dialogue (VisDial) that can rank or generate answers seamlessly. New SOTA on the VisDial Challenge (1st on the leaderboard). Code is coming soon. @ayueei @JotyShafiq @CaimingXiong @SFResearch arxiv.org/abs/2004.13278